Difference between revisions of "User:Noemiino/year4"


Revision as of 13:43, 27 September 2018


Info

NOEMI BIRO

0919394@hr.nl

Graphic Design

Introduction

As a graphic designer I am trained to look at small visual details and make adjustments to them. I am interested in these details not just from a human perspective but also in how new technologies open up new ways of exploring them. I want to include technology in my work as much as possible, in the form of interactions, new layers, or research experiments.

I look at digital craft and see the opportunity to work with my combined interests, analog + digital in one hybrid, and that excites me. I am curious about how technology enables machines to recognize, think, design (?) and how humans can create the conditions for these interactions to happen. In my opinion, whenever technology is used an interaction is already created; what is left for the designer is to shape the conditions that frame it.

Project 1_Critical Making exercise

Reimagine an existing technology or platform using the provided sets of cards.

Project 2_Cybernetic Prosthetics

In small groups, you will present a cluster of self-directed works as a prototype of a new relationship between a biological organism and a machine, relating to our explorations on reimagining technology in the posthuman age. The prototypes should be materialized in 3D form, and simulate interactive feedback loops that generate emergent forms.

Group project realised by Sjoerd Legue, Tom, Emiel Gilijamse and Noemi Biro

INSPIRATION

We started this project with a big brainstorm around the different human senses. Looking through research and recent publications, we found the Guardian article << No hugging: are we living through a crisis of touch? >>, which raised the question of touch in our current society. What we found intriguing about this sense is how it is becoming more and more confined to the technological interfaces of our daily life. It is becoming taboo to touch a stranger, but it is considered normal to walk around the streets holding an idle phone. Institutions are also regulating what is considered appropriate contact between professionals and patients. For example, where the reaction to bad news used to be a hug, nowadays people are more likely to pat somebody on the shoulder than to share such a large area of contact.

Based on this article, we started to search for more scientific research and projects around touch and technological surfaces, to gain insight into how we treat our closest gadgets.

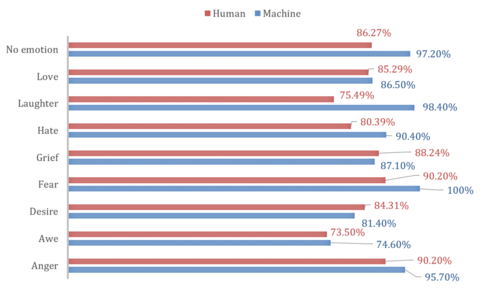

A recent Forbes magazine article, << This AI Can Recognize Anger, Awe, Desire, Fear, Hate, Grief, Love ... By How You Touch Your Phone >>, reports on the research of Alicia Heraz from the Brain Mining Lab in Montreal, who trained an algorithm to recognize humans' emotional patterns from the way we touch our phones. In her research paper << Recognition of Emotions Conveyed by Touch Through Force-Sensitive Screens >>, Alicia reaches the conclusion:

- "Emotions modulate our touches on force-sensitive screens, and humans have a natural ability to recognize other people’s emotions by watching prerecorded videos of their expressive touches. Machines can learn the same emotion recognition ability and do better than humans if they are allowed to continue learning on new data. It is possible to enable force-sensitive screens to recognize users’ emotions and share this emotional insight with users, increasing users’ emotional awareness and allowing researchers to design better technologies for well-being."

We looked at current artificial intelligence models trained on the senses and recognised the pattern that Alicia also mentioned: there is not enough focus on touch. Most emotional processing focuses on facial expressions through computer vision. There is an interesting contrast here: we are very private about somebody touching our faces, yet the same body part has become a public domain through security cameras and shared pictures.