User:Sjoerdlegue

Sjoerdlegue (talk | contribs) |

Sjoerdlegue (talk | contribs) |

||

| (30 intermediate revisions by the same user not shown) | |||

| Line 1: | Line 1: | ||

= '''Cybernetics: Working with self-organizing systems''' = | = '''Cybernetics: Working with self-organizing systems''' = | ||

==Critical Making== | ==Critical Making== | ||

| + | Reimagine an existing technology or platform using the provided sets of cards. In the first lesson of this project, our group used the cards provided by Shailoh to choose a theme, method and presentation technique. Picking the cards randomly gave us a combination of cards that spelled 'Make an object designed for a tree to use Youtube Comments and use the format of a company and a business model to present your idea'. | ||

| + | |||

| + | Group project realised by [[User: Sjoerdlegue | Sjoerd Legue ]], [[User: Tomschouw | Tom Schouw]], [[User: emielgilijamse | Emiel Gilijamse]], Manou, Iris and [[User: Noemiino | Noemi Biro]] | ||

| + | |||

===Treeincarnate=== | ===Treeincarnate=== | ||

---- | ---- | ||

| − | [[File:Cards02.jpg|180px|right|thumb|Exercise cards of the first lesson]]In the first lesson of this project, our group used the cards provided by Shailoh to choose a theme, method and presentation technique. Picking the cards randomly gave us a combination of cards that spelled 'Make an object designed for a tree to use Youtube Comments and use the format of a company and a business model to present your idea'. | + | [[File:Cards02.jpg|180px|right|thumb|Exercise cards of the first lesson]]In the first lesson of this project, our group used the cards provided by Shailoh to choose a theme, method and presentation technique. Picking the cards randomly gave us a combination of cards that spelled 'Make an object designed for a tree to use Youtube Comments and use the format of a company and a business model to present your idea'. |

====Research==== | ====Research==== | ||

| − | We used this random picked cards to setup our project and brainstorm about the potential theme. Almost immediately several idea's popped up in our mind, the one becoming the base of our concept being the writings of ''Peter Wohlleben'', a German forrester who is interested in the scientific side of trees communication with eachother. The book he wrote, ''The Hidden Life of Trees'' became our main source of information. Basically what Wohlleben is researching is the connection between trees to trade nutrions and minerals in order for their fellow trees, mostly family, to survive. For example the fact that older, bigger trees send down nutrions to the smaller trees closer to the surface which have less ability to generate energy through photosyntisis. | + | We used this random picked cards to setup our project and brainstorm about the potential theme. Almost immediately several idea's popped up in our mind, the one becoming the base of our concept being the writings of ''Peter Wohlleben'', a German forrester who is interested in the scientific side of trees communication with eachother. The book he wrote, ''The Hidden Life of Trees'' became our main source of information. Basically what Wohlleben is researching is the connection between trees to trade nutrions and minerals in order for their fellow trees, mostly family, to survive. For example the fact that older, bigger trees send down nutrions to the smaller trees closer to the surface which have less ability to generate energy through photosyntisis. |

====Examples==== | ====Examples==== | ||

| Line 37: | Line 41: | ||

At first we wanted to give the tree a voice with giving it the ability to post likes using its youtube account. Where in- and output would take place in the same root network. But is a tree able to receive information a we can? And if so, what will it do with it? We wanted to stay in touch with the scientific evidence of the talking trees and decided to focus on an other application within the field of human-tree communication. | At first we wanted to give the tree a voice with giving it the ability to post likes using its youtube account. Where in- and output would take place in the same root network. But is a tree able to receive information a we can? And if so, what will it do with it? We wanted to stay in touch with the scientific evidence of the talking trees and decided to focus on an other application within the field of human-tree communication. | ||

| − | An other desire of our team was the fact that we wanted to present a consumer product taking the role as a company trying to sell our product for the global market. After researching other products concerning plants and trees, we found the biodegradable Bios urn. An urn which use as a pot to plant a tree of plant, which can later can be buried in the ground somewhere. This product inspired us to use this example as our physical part of the project. So we wanted to construct a smart vase which had the technical ability to sense the chemical secretion from the roots and convert these to a positive or negative output. The input would also take place using the build-in sensors in the vase using a wireless internet connection. | + | An other desire of our team was the fact that we wanted to present a consumer product taking the role as a company trying to sell our product for the global market. After researching other products concerning plants and trees, we found the biodegradable Bios urn. An urn which use as a pot to plant a tree of plant, which can later can be buried in the ground somewhere. This product inspired us to use this example as our physical part of the project. So we wanted to construct a smart vase which had the technical ability to sense the chemical secretion from the roots and convert these to a positive or negative output. The input would also take place using the build-in sensors in the vase using a wireless internet connection. |

| + | |||

| + | ====Documentation==== | ||

| + | ---- | ||

| + | <gallery> | ||

| + | File:WhatsApp_Image_2018-09-27_at_14.45.59_(2).jpeg | Visual script for promotional video's | ||

| + | File:WhatsApp_Image_2018-09-27_at_14.45.59_(1).jpeg | Prototype diagram for explaining inner workings | ||

| + | File:WhatsApp_Image_2018-09-27_at_14.45.59.jpeg | Order of Usage sheet | ||

| + | File:WhatsApp_Image_2018-09-27_at_14.46.00_(1).jpeg | Treeincarnate Application prototype sketches | ||

| + | </gallery> | ||

| + | |||

| + | <gallery> | ||

| + | File:WhatsApp_Image_2018-09-12_at_11.28.19.jpeg | Coating the prototype vase | ||

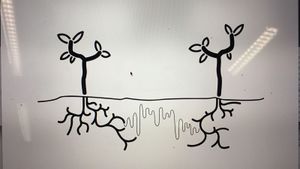

| + | File:WhatsApp_Image_2018-09-12_at_16.10.38_(1).jpeg | Diagram 'Talking to trees' | ||

| + | File:WhatsApp_Image_2018-09-12_at_16.10.38_(2).jpeg | Diagram 'Wood Wide Web' | ||

| + | </gallery> | ||

==Cybernetic Prosthetics== | ==Cybernetic Prosthetics== | ||

| − | + | ||

| + | ===MIDAS=== | ||

| + | ---- | ||

| + | |||

| + | ====Research==== | ||

| + | [[File:Research_alicia_1.png|thumb|320px|right|Alicia's Research we used as our inspiration]]We started this project with a big brainstorm around different human senses. Looking at researches and recent publications, this article from the guardian ([https://www.theguardian.com/society/2018/mar/07/crisis-touch-hugging-mental-health-strokes-cuddles No hugging: are we living through a crisis of touch?]) raised the question of touching in our current state of society. What we found intriguing about this sense is how it is becoming more and more repressed to the technological interfaces of our daily life. It is becoming a taboo to touch a stranger but it is considered normal to walk around the streets holding our idle phone. Institutions are also putting regulations on what is considered appropriate contact between professionals and patients. For example, if a reaction to a bad news used to be a hug, nowadays it is more likely to pat somebody on the shoulders than to have such a large area connection with each other. | ||

| + | |||

| + | Based on the above-mentioned article we started to search for more scientific research and projects around touch and technological surfaces to gain insight into how we treat our closest gadgets. | ||

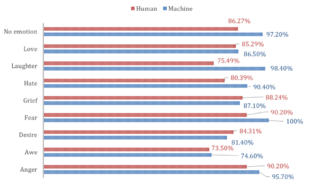

| + | This recent article from Forbes magazine relates the research of Alicia Heraz from the Brain Mining Lab in Montreal ([https://www.forbes.com/sites/johnkoetsier/2018/08/31/new-tech-could-help-siri-google-assistant-read-our-emotions-through-touch-screens/#53a12c872132 This AI Can Recognize Anger, Awe, Desire, Fear, Hate, Grief, Love ... By How You Touch Your Phone]) who trained an algorithm to recognize humans emotional pattern from the way we touch our phone. In her official research document ([https://mental.jmir.org/2018/3/e10104/ Recognition of Emotions Conveyed by Touch Through Force-Sensitive Screens]). | ||

| + | Alicia reaches the conclusion: | ||

| + | |||

| + | ::"''Emotions modulate our touches on force-sensitive screens, and humans have a natural ability to recognize other people’s emotions by watching prerecorded videos of their expressive touches. Machines can learn the same emotion recognition ability and do better than humans if they are allowed to continue learning on new data. It is possible to enable force-sensitive screens to recognize users’ emotions and share this emotional insight with users, increasing users’ emotional awareness and allowing researchers to design better technologies for well-being."'' | ||

| + | |||

| + | [[File:Research_alicia_2.png|thumb|320px|right]]We looked at current artificial intelligence models trained on senses and we recognized the pattern that Alicia also mentioned: there is not enough focus on touch. Most of the emotional processing focuses on facial expressions through computer vision. There is an interesting distinction about how private we are about somebody touching our faces but the same body part has become a public domain through security cameras and shared pictures. | ||

| + | |||

| + | With further research into the state of current artificial intelligence on the market and in our surroundings, this quote from the documentary ([https://www.npostart.nl/2doc/17-09-2018/VPWON_1245940 More Human than Human]) captured our attention | ||

| + | :: "'' We need to make it as human as possible "'' | ||

| + | |||

| + | Looking into the future of AI technology the documentary imagines a world where in order for human and machine to coexist they need to evolve together under the values of compassion and equality. We, humans, are receptive to our surroundings by touch thus we started to imagine how we could introduce AI into this circle to make the first step towards equality. Even though the project is about the extension of AI on an emotional level we recognized this attempt as a humanity-saving mission. Once AI is capable of autonomous thoughts and it can collect all the information from the internet our superiority as a species is being questioned and many specialists even argue that it will be overthrown. That is why it is essential to think of this new relationship in terms of equality and feed our empathetical information into the robots so they can function under the same ethical codes as we do. | ||

| + | |||

| + | ====Objective==== | ||

| + | From this premise, we first started to think of a first AID kit for robots from where they could learn about our gestures towards each other expressing different emotions. The best manifestation of this kit we saw as an ever-growing database which by traveling around the world could categorize not only the emotion deducted from the touch but also a cultural background linked to geographical location. | ||

| + | |||

| + | For the first prototype, our objective was to realize a working interface where we could make the process of gathering data feel natural and give real-time feedback to the contributor. | ||

| + | |||

| + | ====Material Study==== | ||

| + | ---- | ||

| + | [[File:Mmeory_foam_face.jpg|thumb|200px|right|Make-up training mannequin]]We decided to focus on the human head as a base for our data collection because, on one hand, it is an intimate surface for touch with an assumption for truthful connection, on the other hand, the nervous system of the face can be a base for the visual circuit reacting to the touch. The first idea was to buy a mannequin head and cast it ourselves from a softer more skin-like material that has a soft memory foam aspect. Searching on the internet and in stores for a base for the cast was already asking so much time from us that we decided on the alternative of searching for the mannequin head in the right material. We found such a head in the makeup industry, used for practicing makeup and eyelash extensions. | ||

| + | |||

| + | Once we had the head we could get on to experiment with the circuits to be used on the head not only as conductors of touch but also as the visual center points of the project. First based on the nervous system we divided the face into forehead and cheeks as different mapping sites. Then with a white paper, we looked at the curves and what the optimal shape on the face looks like folded out. From a rough outline of the shape, we worked toward a smooth outline and then we used offset to get concentric lines inside the shape. | ||

| + | |||

| + | <gallery> | ||

| + | File:Evolution_circuits_2.jpg | Evolution of our prototype facial nerves | ||

| + | File:Evolution_circuits_3.jpg | ||

| + | File:Evolution_circuits_nervous_2.jpg | All prototypes displayed in one frame | ||

| + | </gallery> | ||

| + | |||

| + | To get to the final circuit shapes we decided on the connection points with the crocodile clips to be on the end of the head and underneath the ears. With 5 touchpoints on the forehead and 3 on each side of the face, the design followed the original concentric sketch but added open endings in form of dots to the face. | ||

| + | |||

| + | Not only the design was challenging for the circuits but also the use of the material. For the first technical prototype in which we used a grid of 3x3 to test the capacitive sensor, we used copper tape. Although this would have been the best material to use in terms of conduciveness and instead sticking surface the price for copper sheets big enough for our designs exceeded our budget and using copper tape would have meant assembling the circuit from multiple parts. | ||

| + | |||

| + | The alternative material was gold ($$$$), aluminum ($) or graphite ($). Luckily Tom had two cans of graphite spray and we tried it on paper and it worked. We tested it with an LED - it is blinking by the way. We cut the designs out with a plotter from a matte white foil and then sprayed the designs with the graphite spray. After we read into how to make the graphite a more efficient conductor we tried the tip to rub the surface with a cloth or cotton buns. The result was a shiny metalic surface that added even more character to the visual of the mannequin head. | ||

| + | |||

| + | <gallery> | ||

| + | File:Evolution_circuits_5.jpg | ||

| + | File:Evolution_circuits_6.jpg | ||

| + | File:Evolution_circuits_7.jpg | ||

| + | File:Evolution_circuits_8.jpg | ||

| + | </gallery> | ||

| + | |||

| + | ====Technical Aspects==== | ||

| + | ---- | ||

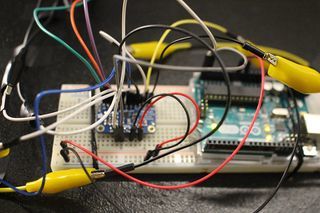

| + | [[File:MIDAS_electro_3.jpg|thumb|320px|right|Overview of prototype of the electronics]]For the technical working for the prototype, we used an Arduino Uno and the MPR121 Breakout to measure the capacitive values of the graphite strips. The Arduino was solely the interpreter of the sensor and the intermediate chip to talk to the visualisation we made for Processing. To make this work, we rebuilt the library that was provided on the Adafruit Github ([https://github.com/adafruit/Adafruit_MPR121 link to their Github]) to be able to calibrate the baseline-values ourselves and lock them. We also built-in a communicative system for Processing to understand. These serial messages could then be decoded by Processing and be displayed on the screen (as shown in the 'Exhibition' tab). | ||

| + | |||

| + | To give a proper look into the programs that we wrote, we want to publish our Arduino Capacitive Touch Interpreter and the auto-connecting Processing script to visualise the values the MPR121 sensor provides. | ||

| + | |||

| + | ====Files==== | ||

| + | <div style="padding: 5px 10px; font-family: Courier, monospace; background-color: #F3F3F3; border: 1px solid #C0C0C0;"> | ||

| + | [[Media:Rippleecho_for_cts.zip]] (bestandgrootte: 485Kb)<br/> | ||

| + | [[Media:Capacitive-touch-skin.ino.zip]] (bestandsgrootte: 3Kb) | ||

| + | </div><br/> | ||

| + | |||

| + | ===Exhibition=== | ||

| + | ---- | ||

| + | [[File:MIDAS_eXPO_1.jpg|thumb|420px|right]]It has been scientifically proven that humans have the ability to recognize and communicate emotions through an expressive touch. A new research proved that force-sensitive screens through machine learning are also able to recognize the users` emotions. From this starting point, we created Midas. Midas is designed to harvest a global database of human emotions expressed through touch. By giving up our unique emotional movement machines can gain emotional intelligence leading to an equal communicational platform. | ||

| + | |||

| + | Through adding touch as an emotional receptor to Artificial Intelligence we upload our unspoken ethical code into this new lifeform. This action is the starting point for a compassionate cohabitant. | ||

| + | |||

| + | <gallery> | ||

| + | File:MIDAS_eXPO_2.jpg | Set-up of the interactive part of the Exhibition | ||

| + | File:MIDAS_eXPO_3.jpg | Installing and preparing the last things | ||

| + | File:MIDAS_eXPO_4.png | Processing visualisations that react to the Arduino serial output | ||

| + | File:MIDAS_eXPO_4.jpg | | ||

| + | </gallery> | ||

= '''How to be Human''' = | = '''How to be Human''' = | ||

Latest revision as of 09:28, 3 October 2018

Cybernetics: Working with self-organizing systems

Critical Making

Reimagine an existing technology or platform using the provided sets of cards.

Group project realised by Sjoerd Legue, Tom Schouw, Emiel Gilijamse, Manou, Iris and Noemi Biro.

Treeincarnate

In the first lesson of this project, our group used the cards provided by Shailoh to choose a theme, a method and a presentation technique. Drawing the cards at random gave us a combination that read: 'Make an object designed for a tree to use YouTube Comments and use the format of a company and a business model to present your idea'.

Research

We used these randomly picked cards to set up our project and brainstorm about a potential theme. Several ideas came up almost immediately. The one that became the basis of our concept was the writing of Peter Wohlleben, a German forester interested in the science of how trees communicate with each other. His book The Hidden Life of Trees became our main source of information. Wohlleben studies how trees are connected so that they can trade nutrients and minerals, helping their fellow trees (mostly family) to survive. For example, older, bigger trees send nutrients down to smaller trees closer to the ground, which are less able to generate energy through photosynthesis.

Examples

Trees use their root network to send and receive nutrients, but they do not do this entirely by themselves. The communication system, also known as the 'Wood Wide Web', is a symbiosis of the trees' root networks and the mycelium networks that grow between the roots, connecting one tree to another. This fungal network, known as the mycorrhizal network, is responsible for the successful communication between trees.

Other scientists are also working on this subject. Suzanne Simard, in Canada, researches the communication networks in forests. She maps the communication taking place within natural forests, demonstrating the nurturing abilities of trees that work together to create a sustainable living environment. In this network, so-called 'mother trees' take extra care of their offspring, and also of other species, by sharing their nutrients with those in need.

Artists, scientists and designers are also intrigued by this phenomenon. For example, the work of Barbara Mazzolai from the University in Pisa is featured in the book 'Biomimicry for Designers' by Veronika Kapsali. She developed a robot inspired by the communicative abilities of trees, which mimics their movements in the search for nutrients in the soil.

Bios Pot

The idea of presenting this project within a business model introduced us to the company Bios, a California-based company that produces a biodegradable urn which grows into a tree once it is planted in the soil. We wanted to use this concept and embed it in our project. Their promotional video offered interesting material for our own video. Beyond the footage, we were also inspired by the app that is part of their product.

Concept

Building on the research into the Wood Wide Web and the ability of trees to communicate with each other, we wanted to enable trees to communicate with us. Trees send different kinds of nutrients depending on what they want to communicate, such as 'danger' or 'help me out'. Using the same principle, a digital interface logged into the tree's root network would let the tree and us talk to each other.

At first we wanted to give the tree a voice by letting it post likes from its own YouTube account, with input and output both taking place in the same root network. But is a tree able to receive information as we can? And if so, what would it do with it? We wanted to stay close to the scientific evidence on talking trees, so we decided to focus on another application within the field of human-tree communication.

Our team also wanted to present a consumer product, taking on the role of a company trying to sell it on the global market. After researching other products concerning plants and trees, we found the biodegradable Bios urn: an urn used as a pot to grow a tree or plant, which can later be buried in the ground. This product inspired the physical part of our project, a smart vase with the technical ability to sense the chemical secretions of the roots and convert them into a positive or negative output. Input would also take place through the built-in sensors in the vase, over a wireless internet connection.

Documentation

Cybernetic Prosthetics

MIDAS

Research

We started this project with a big brainstorm around the different human senses. While we were looking at research and recent publications, this article from The Guardian (No hugging: are we living through a crisis of touch?) raised the question of touch in today's society. What we found intriguing about this sense is how it is increasingly being displaced onto the technological interfaces of our daily life. Touching a stranger is becoming taboo, yet it is considered normal to walk around the streets holding our idle phone. Institutions are also regulating what counts as appropriate contact between professionals and patients. For example, where the reaction to bad news used to be a hug, nowadays a pat on the shoulder is more likely than such a large area of contact.

Based on this article, we started to search for more scientific research and projects around touch and technological surfaces to gain insight into how we treat our closest gadgets. A recent article in Forbes magazine (This AI Can Recognize Anger, Awe, Desire, Fear, Hate, Grief, Love ... By How You Touch Your Phone) reports on the research of Alicia Heraz from the Brain Mining Lab in Montreal. She trained an algorithm to recognize human emotional patterns from the way we touch our phones. In her research paper (Recognition of Emotions Conveyed by Touch Through Force-Sensitive Screens), Alicia reaches the following conclusion:

- "Emotions modulate our touches on force-sensitive screens, and humans have a natural ability to recognize other people’s emotions by watching prerecorded videos of their expressive touches. Machines can learn the same emotion recognition ability and do better than humans if they are allowed to continue learning on new data. It is possible to enable force-sensitive screens to recognize users’ emotions and share this emotional insight with users, increasing users’ emotional awareness and allowing researchers to design better technologies for well-being."

We looked at current artificial intelligence models trained on the senses and recognized the pattern that Alicia also mentions: there is not enough focus on touch. Most emotion processing focuses on facial expressions through computer vision. This reveals an interesting contrast. We are very private about somebody touching our faces, yet the same body part has become public domain through security cameras and shared pictures.

With further research into the state of current artificial intelligence on the market and in our surroundings, this quote from the documentary More Human than Human caught our attention:

- "We need to make it as human as possible."

Looking into the future of AI technology, the documentary imagines a world where humans and machines need to evolve together under the values of compassion and equality in order to coexist. We humans perceive our surroundings through touch, so we started to imagine how we could bring AI into this circle as a first step towards equality. Even though the project is about extending AI on an emotional level, we saw the attempt as a humanity-saving mission. Once AI is capable of autonomous thought and can collect all the information on the internet, our superiority as a species will be questioned, and many specialists even argue that it will be overthrown. That is why we believe it is essential to frame this new relationship in terms of equality and to feed our empathetic information into robots, so they can function under the same ethical codes as we do.

Objective

From this premise, we started to think of a first AID kit for robots from which they could learn about the gestures we use towards each other to express different emotions. We saw the best manifestation of this kit as an ever-growing database that travels around the world. It could categorize not only the emotion deduced from a touch but also the cultural background linked to its geographical location.

For the first prototype, our objective was to build a working interface that makes gathering data feel natural and gives real-time feedback to the contributor.
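The ever-growing database described above could be modelled, in a minimal sketch, as an append-only list of records. Each record pairs a touch with an emotion label and a location. The TouchRecord and TouchDatabase names and the record fields are hypothetical, because the page does not describe an actual schema.

```python
from collections import Counter
from dataclasses import dataclass, field


@dataclass(frozen=True)
class TouchRecord:
    """One contributed touch gesture (hypothetical schema)."""
    emotion: str            # emotion label deduced from the touch, e.g. "love"
    region: str             # where the touch was recorded, e.g. a city
    electrodes: tuple = ()  # indices of the touchpoints that were activated


@dataclass
class TouchDatabase:
    """Append-only collection that keeps growing as the head travels."""
    records: list = field(default_factory=list)

    def add(self, record: TouchRecord) -> None:
        self.records.append(record)

    def emotions_in(self, region: str) -> Counter:
        """Count how often each emotion was recorded in one region."""
        return Counter(r.emotion for r in self.records if r.region == region)

    def regions_for(self, emotion: str) -> set:
        """Return the regions where a given emotion has been recorded."""
        return {r.region for r in self.records if r.emotion == emotion}
```

Grouping by region is what would let such a database compare how the same emotion is expressed through touch in different places.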

Material Study

We decided to focus on the human head as the base for our data collection for two reasons. On the one hand, it is an intimate surface for touch that implies a truthful connection. On the other hand, the nervous system of the face can serve as the basis for a visual circuit that reacts to touch. Our first idea was to buy a mannequin head and cast it ourselves in a softer, more skin-like material with a memory-foam feel. Searching online and in stores for a base for the cast was already taking so much of our time that we instead looked for a mannequin head made of the right material. We found one in the makeup industry, where such heads are used for practising makeup and eyelash extensions.

Once we had the head, we could start experimenting with the circuits, which serve not only as touch conductors but also as the visual focal points of the project. First, based on the nervous system, we divided the face into the forehead and the cheeks as separate mapping sites. Then, using white paper, we studied the curves of the face to find out what the optimal shape looks like when unfolded flat. We worked from a rough outline towards a smooth one, and then used an offset to create concentric lines inside the shape.

For the final circuit shapes, we placed the crocodile-clip connection points at the edge of the head and underneath the ears. With 5 touchpoints on the forehead and 3 on each side of the face, the design followed the original concentric sketch but added open endings in the form of dots.
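The touchpoint layout can be written down as a small configuration. This sketch assumes that all touchpoints are read by the single 12-input MPR121 described under Technical Aspects. The electrode numbers assigned to each region are hypothetical, since the page only gives the counts (5 + 3 + 3 = 11, which leaves one input spare).

```python
# Hypothetical wiring of the 11 touchpoints onto one MPR121 (12 inputs, 0-11).
MPR121_INPUTS = 12

FACE_MAP = {
    "forehead": [0, 1, 2, 3, 4],  # 5 touchpoints
    "left_cheek": [5, 6, 7],      # 3 touchpoints
    "right_cheek": [8, 9, 10],    # 3 touchpoints
}


def validate(face_map, n_inputs=MPR121_INPUTS):
    """Return the number of wired electrodes; raise if the map is inconsistent."""
    used = [e for electrodes in face_map.values() for e in electrodes]
    if len(used) != len(set(used)):
        raise ValueError("an electrode is assigned to more than one region")
    if any(not 0 <= e < n_inputs for e in used):
        raise ValueError("electrode index outside the sensor's range")
    return len(used)


def region_of(electrode, face_map=FACE_MAP):
    """Return the face region wired to an electrode, or None if it is unused."""
    for region, electrodes in face_map.items():
        if electrode in electrodes:
            return region
    return None
```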

The design of the circuits was challenging, and so was the choice of material. For the first technical prototype, a 3x3 grid to test the capacitive sensor, we used copper tape. Copper would have been the best material in terms of conductivity and its self-adhesive backing. However, copper sheets large enough for our designs exceeded our budget, and using copper tape would have meant assembling each circuit from multiple parts.

The alternatives were gold ($$$$), aluminum ($) or graphite ($). Luckily, Tom had two cans of graphite spray. We tried it on paper and it conducted, and when we tested it with an LED, the LED blinked. We cut the designs out of matte white foil with a plotter and then sprayed them with graphite. After reading up on how to make graphite a more efficient conductor, we followed the tip to rub the surface with a cloth or cotton buds. The result was a shiny metallic surface that added even more character to the look of the mannequin head.

Technical Aspects

For the prototype's electronics, we used an Arduino Uno and the MPR121 breakout to measure the capacitance of the graphite strips. The Arduino served solely to interpret the sensor and to relay its data to the visualisation we made in Processing. To make this work, we rebuilt the library provided on the Adafruit GitHub (Adafruit_MPR121) so that we could calibrate the baseline values ourselves and lock them. We also built in a messaging protocol that Processing understands. Processing decodes these serial messages and displays them on screen (as shown in the 'Exhibition' section).
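Calibrating and then locking the baseline can be sketched as follows. This is not the rebuilt Adafruit library itself but a minimal Python illustration of the idea. It assumes the usual MPR121 convention that a touch lowers the filtered reading. Under that convention, a touch is counted when baseline minus reading exceeds a touch threshold, and it is released when that difference drops below a smaller release threshold. The class name and the threshold values 12 and 6 are assumptions.

```python
class LockedBaselineElectrode:
    """Touch detection for one electrode, with a baseline calibrated once and then locked."""

    def __init__(self, touch_threshold=12, release_threshold=6):
        # Hysteresis: a touch starts above touch_threshold and only ends
        # once the difference falls below the smaller release_threshold.
        if release_threshold >= touch_threshold:
            raise ValueError("release_threshold must be below touch_threshold")
        self.touch_threshold = touch_threshold
        self.release_threshold = release_threshold
        self.baseline = None
        self.touched = False

    def calibrate(self, idle_readings):
        """Average readings taken while nobody touches the head, then lock the result."""
        readings = list(idle_readings)
        if not readings:
            raise ValueError("need at least one idle reading")
        self.baseline = round(sum(readings) / len(readings))

    def update(self, filtered):
        """Feed one filtered reading; return True while the electrode is touched."""
        if self.baseline is None:
            raise RuntimeError("call calibrate() before update()")
        delta = self.baseline - filtered  # touching lowers the reading
        if not self.touched and delta > self.touch_threshold:
            self.touched = True
        elif self.touched and delta < self.release_threshold:
            self.touched = False
        return self.touched
```

Locking the baseline also means that slow drift, for example from humidity changes on the graphite, is no longer compensated automatically, so the calibration would likely have to be repeated when conditions change.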

To give a proper look at the programs we wrote, we are publishing our Arduino Capacitive Touch Interpreter and the auto-connecting Processing script that visualises the values the MPR121 sensor provides.
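The serial messages between the Arduino and Processing are not documented on this page, so the format below is a hypothetical example. Each frame is one newline-terminated line that starts with a D: marker and is followed by one value per electrode. The decoder returns None for anything that does not match, which also covers the partial line that can be read right after a serial port is opened.

```python
PREFIX = "D:"      # hypothetical marker for a data frame
N_ELECTRODES = 12  # one value per MPR121 input


def encode_frame(deltas):
    """Build one newline-terminated frame, as the Arduino side might print it."""
    if len(deltas) != N_ELECTRODES:
        raise ValueError(f"expected {N_ELECTRODES} values")
    return PREFIX + ",".join(str(int(d)) for d in deltas) + "\n"


def decode_frame(line):
    """Parse one frame on the visualisation side; return None for partial or garbled lines."""
    line = line.strip()
    if not line.startswith(PREFIX):
        return None
    parts = line[len(PREFIX):].split(",")
    if len(parts) != N_ELECTRODES:
        return None
    try:
        return [int(p) for p in parts]
    except ValueError:
        return None
```

Discarding malformed lines rather than raising keeps the visualisation running when a frame is cut off or corrupted.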

Files

Rippleecho_for_cts.zip (file size: 485 KB)

Capacitive-touch-skin.ino.zip (file size: 3 KB)

Exhibition

Research has shown that humans are able to recognize and communicate emotions through expressive touch. Recent research showed that force-sensitive screens, using machine learning, can also recognize users' emotions. From this starting point, we created Midas, which is designed to harvest a global database of human emotions expressed through touch. By giving up our unique emotional movements, we allow machines to gain emotional intelligence, leading to an equal communication platform.

By adding touch as an emotional receptor to artificial intelligence, we upload our unspoken ethical code into this new life form. This is the starting point for a compassionate cohabitant.

How to be Human

By Sjoerd Legué (sjoerdlegue@gmail.com)

Student Product Design (0902887)

Sensitivity Training

For me, Digital Craft started with the Sensitivity Training. As a team, we were assigned a particular analog sensor and asked how it could reveal the relationship between man and machine.

Research:

We chose a heat sensor as our subject. During the research we found an interesting contradiction: electronic and human heat sensors work in much the same way, both relying on electrical impulses. What makes them different is how the ‘data’ is perceived and processed.

The human brain and its sensors have a built-in limit and sense of what is (too) hot. An electronic sensor does not. This is the concept we set out to explain in a series of three videos.

Video 1

The first video explains that raw sensor data can be interpreted in multiple ways. The numbers on their own mean nothing to humans. And even when you do want to understand them, temperature can be expressed on multiple scales (Celsius, Fahrenheit and Kelvin).
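The point about scales can be made concrete: the same reading produces three different numbers depending on the scale. A minimal Python illustration using the standard conversion formulas (°F = °C × 9/5 + 32, K = °C + 273.15); the function names are illustrative only.

```python
def celsius_to_fahrenheit(celsius: float) -> float:
    """Standard Celsius to Fahrenheit conversion."""
    return celsius * 9 / 5 + 32


def celsius_to_kelvin(celsius: float) -> float:
    """Kelvin is Celsius shifted by 273.15."""
    return celsius + 273.15


def describe(celsius: float) -> str:
    """Show one sensor reading on all three scales."""
    return (f"{celsius:.1f} °C = {celsius_to_fahrenheit(celsius):.1f} °F = "
            f"{celsius_to_kelvin(celsius):.2f} K")
```

For example, `describe(20)` returns `"20.0 °C = 68.0 °F = 293.15 K"`: one physical temperature, three different numbers.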

Video 2

Our second video is a sequel to the first, in which we display heat in a way humans can understand. We found that humans can sense heat between -15 and 68 degrees Celsius; below and above these temperatures, cells and the nervous system are damaged. We visualised this by displaying temperature in grayscale, where black is the coldest and white the hottest measurable temperature.
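Assuming a linear scale and 8-bit gray values (neither is stated above), the grayscale mapping could be sketched as follows: temperatures are clamped to the -15 to 68 °C range and scaled to 0 (black) through 255 (white). The constant and function names are hypothetical.

```python
HUMAN_MIN_C = -15.0  # coldest temperature humans can sense without damage (from the text)
HUMAN_MAX_C = 68.0   # hottest temperature, likewise


def temperature_to_gray(celsius: float) -> int:
    """Map a temperature to an 8-bit gray value: 0 = black (coldest), 255 = white (hottest)."""
    clamped = min(max(celsius, HUMAN_MIN_C), HUMAN_MAX_C)  # readings outside the range saturate
    fraction = (clamped - HUMAN_MIN_C) / (HUMAN_MAX_C - HUMAN_MIN_C)
    return round(fraction * 255)
```

Clamping mirrors the idea behind the video: beyond the human limits there is nothing more to "feel", so everything colder is pure black and everything hotter pure white.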

Video 3

The third and final video takes another step towards exploring the relativity of electronic and human sensors. We combined everything we had learned into a small installation that introduces the Hertzian Tales principle: creating unease when using the device we built. We did this by awarding points for holding your hand above a flame. This becomes painful after a while, but to achieve a higher score you have to endure the pain.

Installation

As the final piece of How to be Human, we turned the theory we had learned into a physical object. During the video research we found that there are many different methods to measure heat. One of these is thermo-sensitive material. This material, often a plastic, contains thermochromic paint that reacts to a combination of moisture and heat. The filament we found turns transparent when it 'detects' heat. Because the material becomes slightly translucent, it could be used to make images or text appear and disappear. After trying some samples and showing them to others, we found that the material on its own was already interesting.

The shape of the object refers to the so-called human magnetic field, which takes the form of a torus. We chose this torus shape not only because it refers to human energy, but also because it can be touched with the entire hand and invites fingers to move across its surface.

For our presentation, we also made a poster. Its title, 'energy flows where the attention goes', adds a sense of mystery as the user touches the object and tries to figure out what it does. The installation essentially registers the influence of your body heat for a short time, acting as a kind of analog heat sensor.

Mind of the Machine

This project is all about artificial intelligence, computer creativity and machine learning algorithms.

Research:

In the lecture by Boris Smeenk and Arthur Boer, we learned about an algorithm that generates images based on datasets collected from different sources on the internet. The algorithm processes a large dataset and tries to learn its composition, colors and shapes. With the help of a discriminator, it generates new images based on what it has learned from the data it was fed. In every iteration it tries to create an image that could plausibly have come from the source data.

Based on this theory, we fed the algorithm 500 different Pokémon. Our reasoning was that all Pokémon share a very similar style, with black outlines, vibrant colors and a cartoon-like look. Our goal: to generate the next generation of Pokémon!

In the sheet of small 'previews' of the generated Pokémon, you can clearly see that the shapes resemble actual Pokémon. The colors, black lines and amorphous shapes could serve as inspiration for new figures. The most interesting results, however, appear in the pattern sheet. This large sheet allows bigger (mashed-up) Pokémon to be generated, which clearly give a better outcome than the smaller versions. We were inspired by how accurate the program was, given a dataset of just over 500 images.

Showpiece

For the showpiece, we decided to play with the idea of Pokémon as a dataset. We found out that there is a Pokémon called 'Ditto', which can shapeshift into other Pokémon; an interesting reference to our newly generated ones. We made a selection from the dataset and cut the shapes out of fluorescent cardboard. By layering these shapes, a new Pokémon is created every time you turn a page: a manual version of the algorithm we used to generate the digital Pokémon.

Project Awareness

By Sjoerd Legué (sjoerdlegue@gmail.com)

Student Product Design (0902887)

Individual Project

Change of Direction:

In the brainstorm section, I described that I wanted to do something with Open Data. This has changed: the current project still involves data, but focuses more on processing it. In September, "De week van de Eenzaamheid" (The Week of Loneliness) takes place in Rotterdam. This event addresses the fact that loneliness is a serious issue in the Netherlands that needs attention. For this event, I was asked to come up with a digital installation that makes people aware that loneliness can be very close to anyone.

Concept:

The concept of my project is to show people personally, through a live video feed and animation, that they themselves can help prevent loneliness among the elderly around them. It does this by asking people personal questions, for instance when they last called their own grandma or grandpa.

Medium:

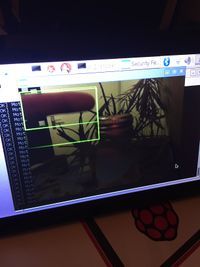

To reach people personally, I want to process a live video feed from a camera positioned on the first floor of Rotterdam Station and track the travellers passing through. The video feed will be projected onto a canvas that is visible to everyone walking through the station, and shown on our foundation's website (omaspopup.nl). For the project I am going to use a small single-board computer, the Raspberry Pi, with its compatible camera. I chose this setup because the entire installation has to be small enough to fit underneath the canvas.

Documentation

Hardware

Raspberry Pi (model 3B+):

As described above, a small computer allows me to fit the installation into a narrow space. I would also prefer not to place an expensive computer, like a Mac, in such a public space.

RasPi Camera (rev. 1.3):

This is the officially supported camera for the Raspberry Pi. It connects to the board very easily and is broadly supported across the Raspberry Pi's software.

RasPi 7" Touchscreen Display:

I use this touchscreen to display and read information directly from the Raspberry Pi itself. The board is mounted on the back of the display, which makes the installation even more compact.

Software

Python:

The entire program that drives the camera and records/generates the images is written in Python. The language is quite easy to learn and very readable. I have a lot of experience with web-based programming languages, but had never worked in Python before. Speaking as someone new to Python, I can recommend the language to anyone who is new to programming.

Dependencies

Raspbian Jessie:

Before installing any dependencies, do not forget to update the package lists and upgrade the installed packages with APT (Advanced Package Tool):

sudo apt-get update

sudo apt-get upgrade

sudo reboot

OpenCV 3:

OpenCV is a library for image manipulation and processing. Installing it on the Raspberry Pi takes quite some time and can be difficult for people who are new to Linux. I used this tutorial for the complete installation. The website pyimagesearch.com is also very helpful and has an extensive set of tutorials about OpenCV + Python on the Raspberry Pi.

PiCamera:

This is the Python interface to the on-board camera. There is no need to install the package; it comes preinstalled with Raspbian Jessie. With the camera connected, check its availability like this:

vcgencmd get_camera
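On a working setup, `vcgencmd get_camera` prints `supported=1 detected=1` (newer firmware may append further fields). The helper below is a hypothetical addition, not part of motion.py, that runs the command from Python and checks that both values are 1.

```python
from __future__ import annotations

import subprocess


def parse_camera_status(output: str) -> dict[str, int]:
    """Turn 'supported=1 detected=1' into {'supported': 1, 'detected': 1}."""
    status = {}
    for token in output.replace(",", " ").split():  # tolerate comma-separated fields
        key, sep, value = token.partition("=")
        if sep and value.isdigit():
            status[key] = int(value)
    return status


def camera_ready(output: str) -> bool:
    """The camera is usable only when it is both supported and detected."""
    status = parse_camera_status(output)
    return status.get("supported") == 1 and status.get("detected") == 1


def check_camera() -> bool:
    """Run vcgencmd on the Pi itself (the command only exists on Raspberry Pi OS)."""
    result = subprocess.run(["vcgencmd", "get_camera"],
                            capture_output=True, text=True, check=True)
    return camera_ready(result.stdout)
```

Calling `check_camera()` at the start of a script gives a clear failure before any OpenCV or PiCamera code runs.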

Matplotlib:

To create the GIF images, additional libraries were needed. Matplotlib is a plotting library that can save animations as GIFs. Although it can be quite slow, it works well alongside OpenCV and PiCamera, since all three handle image data as NumPy arrays. Installing it did not take very long; I used this short tutorial. Do not forget to also install ImageMagick, which Matplotlib uses to write GIF files; the tutorial does not mention it anywhere. There is a short forum topic on the subject here.

sudo apt-get update

sudo apt-get install imagemagick

Python, JSON:

Both come preinstalled: Python ships with Raspbian, and json is part of Python's standard library. If something turns out to be missing, search for the error message and browse the forums.

Process

Collecting Data

The first step in my process was to collect data through video. I tried several options before settling on the Raspberry Pi Camera module. Other video devices, like a generic webcam and the Microsoft Kinect, did not work properly with the Raspberry Pi; they required a lot of tweaking and fiddling before they could be used. That was when I came across the Raspberry Pi Camera module. I experimented with source code I found on pyimagesearch.com and researched the camera and its documentation. At this point, I was still working in Node.js, but it did not meet my requirements and could not 'communicate' with the camera module as easily as Python could.

Before switching to Python, I tried to create a proper setup for collecting datasets in my living room.

Writing Code

After deciding to switch to Python, I searched the internet for similar projects. I always think it is better to learn from something similar than to reinvent everything from scratch. I came across a project by a Belgian developer, Cedric Verstraeten, who made a motion detection system in C++ using OpenCV (see GitHub link). Some of his elements, like the motion blocking, are very clever and inspired me to take a similar approach. I combined the ideas of Cedric and pyimagesearch.com into my own Python-based motion detection system.
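The core idea behind this kind of motion detection is frame differencing: compare two grayscale frames pixel by pixel, keep the pixels whose brightness changed by more than a delta threshold, and draw a box around them. The sketch below is a heavily simplified, pure-Python illustration, not the code in motion.py; in OpenCV the first step would typically be `cv2.absdiff` followed by `cv2.threshold`, and plain lists are used here only to keep the example self-contained. The `min_pixels` noise filter is a hypothetical parameter.

```python
from __future__ import annotations


def motion_mask(previous: list[list[int]], current: list[list[int]],
                delta: int) -> list[list[bool]]:
    """Mark pixels whose brightness changed by more than `delta` between two frames."""
    return [
        [abs(c - p) > delta for p, c in zip(prev_row, cur_row)]
        for prev_row, cur_row in zip(previous, current)
    ]


def bounding_box(mask: list[list[bool]],
                 min_pixels: int = 1) -> tuple[int, int, int, int] | None:
    """Return (x_min, y_min, x_max, y_max) around changed pixels, or None if too few changed."""
    points = [(x, y) for y, row in enumerate(mask) for x, moved in enumerate(row) if moved]
    if len(points) < min_pixels:  # ignore tiny changes such as sensor noise
        return None
    xs = [x for x, _ in points]
    ys = [y for _, y in points]
    return min(xs), min(ys), max(xs), max(ys)
```

A higher `delta` ignores lighting flicker but misses low-contrast movement; this is the same trade-off as the delta threshold tuned on the station recordings.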

Testing

The image on the right is a snapshot of an early prototype of the detection system. Since that version, a lot of filtering and calculation has been added. Predicting motion and detecting whether the motion in two frames belongs to the same object requires complex calculations and many loops. At this point, the system can assign IDs to motion regions, exclude zones from registering motion, assign persistency to motion regions and track persistent motion.
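The tracking features described here can be illustrated with a small greedy tracker: each frame's motion regions (reduced to their centre points) are matched to the nearest track from the previous frame, unmatched regions get a fresh ID, regions inside excluded zones are ignored, and a track only counts once it has persisted for a number of frames. This is a hypothetical sketch; `MotionTracker`, `max_distance`, `persistence_threshold` and `excluded_zones` are illustrative names and not taken from motion.py.

```python
from __future__ import annotations

import math
from dataclasses import dataclass


@dataclass
class Track:
    track_id: int
    centroid: tuple[float, float]
    persistence: int = 1  # consecutive frames in which this region was seen


class MotionTracker:
    """Greedy nearest-centroid tracker with exclusion zones and a persistence threshold."""

    def __init__(self, max_distance: float, persistence_threshold: int,
                 excluded_zones: list[tuple[float, float, float, float]] | None = None):
        self.max_distance = max_distance
        self.persistence_threshold = persistence_threshold
        self.excluded_zones = excluded_zones or []  # (x0, y0, x1, y1) rectangles
        self.tracks: list[Track] = []
        self._next_id = 1

    def _excluded(self, point: tuple[float, float]) -> bool:
        x, y = point
        return any(x0 <= x <= x1 and y0 <= y <= y1 for x0, y0, x1, y1 in self.excluded_zones)

    def update(self, centroids: list[tuple[float, float]]) -> list[int]:
        """Match this frame's centroids to existing tracks; return IDs of persistent tracks."""
        unmatched = list(self.tracks)
        updated: list[Track] = []
        for point in centroids:
            if self._excluded(point):
                continue  # motion in excluded zones never creates or extends a track
            nearest = min(unmatched, key=lambda t: math.dist(t.centroid, point), default=None)
            if nearest is not None and math.dist(nearest.centroid, point) <= self.max_distance:
                unmatched.remove(nearest)
                nearest.centroid = point
                nearest.persistence += 1
                updated.append(nearest)
            else:
                updated.append(Track(self._next_id, point))
                self._next_id += 1
        self.tracks = updated  # tracks not seen in this frame are dropped
        return [t.track_id for t in self.tracks if t.persistence >= self.persistence_threshold]
```

Greedy nearest matching is simple and cheap enough for a Raspberry Pi, but it can swap IDs when two people cross paths closely; the persistence threshold at least keeps short-lived noise from being reported.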

Finetuning

To be confident that the code would work in its final location, a test at Rotterdam Central station was essential. Taking the installation there was not possible, since there are no public power sockets at the station. Instead, I filmed the hallway on my phone and downscaled the footage to match what the Raspberry Pi camera would capture. With these recordings, I fine-tuned the delta (motion difference) and persistency thresholds, so the installation should work as programmed once it is placed at the station.

Code

Setup:

All code files are in the .zip file listed under Files. Extract them, navigate to the extracted folder and run motion.py with administrator (root) privileges:

cd motion/

sudo python motion.py

Files

Media:Motion.zip (file size: 2 MB)

Brainstorm

The moment I knew what I wanted to do:

I have known for a long time that Product Design was the right choice for me, so there is no single image that captures this moment. But IKEA has always inspired me to look at products (in the literal sense) in a very different way, for instance with the IKEA Concept Kitchen 2025.

What do I make?

Although I chose product design, my background lies in graphic and digital design. Together with Emmy Jaarsma, I have made several hat designs over the course of a few years. Our best work even won the International Hat Designer of the Year award in 2013. For that hat, I mainly designed the digital pattern for the laser cutter.

Topic of Interest:

For this practice I want to explore the concept of Open Data. It fascinates me that so much information is freely accessible on the internet, ready to be used and processed, and that everyone can contribute to this gigantic heap of information. Almost everything is on the internet, at least when you search for it with the right parameters.

Medium:

The main component I want to use is a microcontroller (or small computer) with access to the internet. It should be able to search for information by itself and process it into a visually attractive element that is easy for humans to read. A good example is the Magic Mirror project: it looks like a regular mirror, but displays useful information for the user.

Result

At this final stage of development, Project Awareness can calculate and record many elements. The project is not fully finished, but the most difficult part of the video installation is complete. A brief preview of the software running:

Before the project can be displayed at the station in Rotterdam, much of the visual part still has to be designed. In my opinion, the best approach is a dynamic HTML5 + JavaScript website that communicates with a separate Python script that pushes new GIF files to the site. A website makes it much easier to 'prettify' the generated GIFs than Python does. Combining CSS with JavaScript provides a dynamic, cross-platform framework for spreading the message as widely as possible.
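One possible shape for the Python side of that setup, sketched under assumptions: the generated GIFs land in a single folder, and the script writes a `manifest.json` listing the newest ones, which the HTML5 + JavaScript page could poll with `fetch()`. The folder layout, the manifest name and `write_gif_manifest` are hypothetical; "pushing" is approximated here by the page pulling a small JSON file.

```python
from __future__ import annotations

import json
from pathlib import Path


def write_gif_manifest(gif_dir: Path, manifest_name: str = "manifest.json",
                       limit: int = 10) -> list[str]:
    """List the newest GIFs in `gif_dir` and write their file names to a JSON manifest."""
    gifs = sorted(gif_dir.glob("*.gif"), key=lambda p: p.stat().st_mtime, reverse=True)
    names = [p.name for p in gifs[:limit]]
    tmp = gif_dir / (manifest_name + ".tmp")
    tmp.write_text(json.dumps({"gifs": names}, indent=2), encoding="utf-8")
    # Write to a temporary file first, then swap it in, so the site never reads half a file.
    tmp.replace(gif_dir / manifest_name)
    return names
```

Calling this after each new GIF is saved keeps the Python side unaware of the page's layout, so all styling stays in CSS and JavaScript.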