Difference between revisions of "User:Tomschouw"

Revision as of 11:49, 17 December 2018

Cybernetics: Working with self-organizing systems

Critical Making

Reimagine an existing technology or platform using the provided sets of cards.

Group project realised by Sjoerd Legue, Tom Schouw, Emiel Gilijamse, Manou, Iris and Noemi Biro.

Treeincarnate

In the first lesson of this project, our group used the cards provided by Shailoh to choose a theme, a method and a presentation technique. Drawing the cards at random gave us a combination that spelled out: 'Make an object designed for a tree to use YouTube comments, and use the format of a company and a business model to present your idea'.

Research

We used these randomly drawn cards to set up our project and brainstorm about a potential theme. Almost immediately several ideas popped up, and the one that became the base of our concept was the writing of Peter Wohlleben, a German forester who is interested in the scientific side of how trees communicate with each other. His book The Hidden Life of Trees became our main source of information. Wohlleben describes how trees are connected so they can trade nutrients and minerals, helping their fellow trees, mostly family, to survive. For example, older, bigger trees send nutrients down to smaller trees closer to the ground, which are less able to generate energy through photosynthesis.

Examples

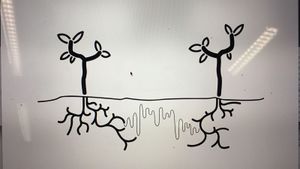

Trees use their root network to send and receive nutrients, but they do not do this completely by themselves. The communication system, also known as the 'Wood Wide Web', is a symbiosis of the trees' root networks and the mycelium networks that grow between the roots, connecting one tree to another. This fungal network, known as mycorrhiza, is responsible for the successful communication between trees.

Other scientists are also working on this subject. Suzanne Simard from Canada researches the communicative networks in forests. She maps the communication taking place within natural forests, demonstrating the nurturing abilities of trees that work together to create a sustainable living environment: a network in which so-called 'mother trees' take extra care of their offspring, but also of other species, by sharing their nutrients with those in need.

Artists, scientists and designers are also intrigued by this phenomenon. For example, the work of Barbara Mazzolai from the university in Pisa has been published in the book 'Biomimicry for Designers' by Veronika Kapsali. She developed a robot inspired by the communicative abilities of trees that mimics their movements in the search for nutrients in the soil.

Bios Pot

The idea of presenting this project as a business model introduced us to the company Bios, a California-based company that produces a biodegradable urn which can grow into a tree after it is planted in the soil. We wanted to use this concept and embed it in our project. Their promotional video could provide interesting material for our own video. Besides the material, we were inspired by the application that was part of their product.

Concept

We took the research about the Wood Wide Web and the ability of trees to communicate with each other as our starting point: we wanted to make the tree able to communicate with us. Trees send different kinds of nutrients depending on what they want to communicate, such as 'danger' or 'help me out'. We wanted to use the same principle to let trees talk to us, through a digital interface connected to the root network of the tree.

At first we wanted to give the tree a voice by letting it post likes from its own YouTube account, with input and output taking place in the same root network. But is a tree able to receive information as we can? And if so, what would it do with it? We wanted to stay close to the scientific evidence of talking trees, so we decided to focus on another application within the field of human-tree communication.

Another wish of our team was to present a consumer product, taking on the role of a company trying to sell its product on the global market. After researching other products concerning plants and trees, we found the biodegradable Bios urn: an urn used as a pot to grow a tree or plant, which can later be buried in the ground somewhere. This product inspired us to use it as the example for the physical part of our project. We wanted to construct a smart vase with the technical ability to sense the chemical secretions from the roots and convert these into a positive or negative output. Input would also take place through the built-in sensors of the vase, using a wireless internet connection.

Documentation

Cybernetic Prosthetics

MIDAS

Research

We started this project with a big brainstorm around the different human senses. Looking at research and recent publications, an article from The Guardian (No hugging: are we living through a crisis of touch?) raised the question of touch in today's society. What we found intriguing about this sense is how it is increasingly being relegated to the technological interfaces of our daily life. It is becoming taboo to touch a stranger, yet it is considered normal to walk around the streets holding our idle phone. Institutions are also putting regulations on what counts as appropriate contact between professionals and patients. For example, where the reaction to bad news used to be a hug, nowadays people are more likely to pat somebody on the shoulder than to make such a large area of contact with each other.

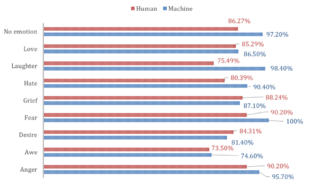

Based on this article, we started searching for more scientific research and projects around touch and technological surfaces to gain insight into how we treat our closest gadgets. A recent article in Forbes magazine (This AI Can Recognize Anger, Awe, Desire, Fear, Hate, Grief, Love ... By How You Touch Your Phone) describes the research of Alicia Heraz from the Brain Mining Lab in Montreal, who trained an algorithm to recognize human emotional patterns from the way we touch our phones. In her research paper (Recognition of Emotions Conveyed by Touch Through Force-Sensitive Screens), Alicia reaches the following conclusion:

- "Emotions modulate our touches on force-sensitive screens, and humans have a natural ability to recognize other people’s emotions by watching prerecorded videos of their expressive touches. Machines can learn the same emotion recognition ability and do better than humans if they are allowed to continue learning on new data. It is possible to enable force-sensitive screens to recognize users’ emotions and share this emotional insight with users, increasing users’ emotional awareness and allowing researchers to design better technologies for well-being."

We looked at current artificial intelligence models trained on the senses and recognized the pattern that Alicia also mentions: there is not enough focus on touch. Most emotional processing focuses on facial expressions through computer vision. There is an interesting contrast here: we are very private about somebody touching our face, yet the same body part has become public domain through security cameras and shared pictures.

Researching further into the state of artificial intelligence on the market and in our surroundings, we were struck by this quote from the documentary (More Human than Human):

- "We need to make it as human as possible"

Looking into the future of AI technology, the documentary imagines a world where, in order for humans and machines to coexist, they need to evolve together under the values of compassion and equality. We humans are receptive to our surroundings through touch, so we started to imagine how we could bring AI into this circle as a first step towards equality. Even though the project is about extending AI on an emotional level, we saw this attempt as a humanity-saving mission. Once AI is capable of autonomous thought and can collect all the information on the internet, our superiority as a species will be questioned, and many specialists even argue that it will be overthrown. That is why it is essential to think of this new relationship in terms of equality and to feed our empathetic information into robots, so they can function under the same ethical codes as we do.

Objective

From this premise, we first started to think of a first AID kit for robots, from which they could learn about the gestures we use towards each other to express different emotions. We saw the best manifestation of this kit as an ever-growing database that, by traveling around the world, could categorize not only the emotion deduced from a touch but also the cultural background linked to its geographical location.

For the first prototype, our objective was to realize a working interface where we could make the process of gathering data feel natural and give real-time feedback to the contributor.

Material Study

We decided to focus on the human head as the base for our data collection because, on the one hand, it is an intimate surface for touch that implies a truthful connection, and on the other hand, the nervous system of the face can serve as the base for a visual circuit that reacts to touch. Our first idea was to buy a mannequin head and cast it ourselves in a softer, more skin-like material with a memory-foam feel. Searching the internet and stores for a base for the cast was already taking so much of our time that we chose the alternative: searching for a mannequin head made of the right material. We found such a head in the makeup industry, where it is used for practicing makeup and eyelash extensions.

Once we had the head, we could start experimenting with the circuits, which serve not only as touch conductors but also as the visual focal points of the project. First, based on the nervous system, we divided the face into the forehead and the cheeks as separate mapping sites. Then, using white paper, we looked at the curves of the face and at what the optimal shape looks like when folded out flat. From a rough outline of the shape we worked toward a smooth outline, and then we used an offset to get concentric lines inside the shape.
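The offset step can be sketched in code. The Python below is a minimal illustration, not the tool the group actually used: it assumes a convex outline with counter-clockwise corners and no three corners in a straight line, shifts every edge inward along its normal, and intersects neighbouring shifted edges to find the new corners. Repeating this gives concentric outlines. The square is a made-up example; real face pieces with concave curves would need a general-purpose offset tool.

```python
from typing import List, Tuple

Point = Tuple[float, float]


def _cross(ax: float, ay: float, bx: float, by: float) -> float:
    """z-component of the 2D cross product a x b."""
    return ax * by - ay * bx


def inset_convex(poly: List[Point], d: float) -> List[Point]:
    """Offset a convex, counter-clockwise polygon inward by distance d."""
    n = len(poly)
    shifted = []  # (point on the shifted edge line, edge direction) per edge
    for i in range(n):
        (x0, y0), (x1, y1) = poly[i], poly[(i + 1) % n]
        dx, dy = x1 - x0, y1 - y0
        length = (dx * dx + dy * dy) ** 0.5
        # For a counter-clockwise polygon the left-hand normal points inward.
        nx, ny = -dy / length, dx / length
        shifted.append(((x0 + d * nx, y0 + d * ny), (dx, dy)))
    corners = []
    for i in range(n):
        (px, py), (rx, ry) = shifted[i - 1]  # edge arriving at corner i
        (qx, qy), (sx, sy) = shifted[i]      # edge leaving corner i
        # Solve p + t*r = q + u*s for t (line-line intersection).
        t = _cross(qx - px, qy - py, sx, sy) / _cross(rx, ry, sx, sy)
        corners.append((px + t * rx, py + t * ry))
    return corners


def concentric(poly: List[Point], step: float, count: int) -> List[List[Point]]:
    """Return `count` nested outlines, each `step` further inside the last."""
    rings = []
    current = poly
    for _ in range(count):
        current = inset_convex(current, step)
        rings.append(current)
    return rings


square = [(0.0, 0.0), (10.0, 0.0), (10.0, 10.0), (0.0, 10.0)]
for ring in concentric(square, 1.0, 3):
    print([(round(x, 6), round(y, 6)) for x, y in ring])
```

Each ring of the example square is one unit smaller on every side than the one before it, which is the "concentric lines inside the shape" effect in its simplest form.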

To arrive at the final circuit shapes, we placed the connection points for the crocodile clips at the edge of the head and underneath the ears. With 5 touchpoints on the forehead and 3 on each side of the face, the design followed the original concentric sketch but added open endings in the form of dots on the face.
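The pad count can be checked against the hardware: the MPR121 breakout used for the prototype has 12 capacitive electrode inputs, so 5 forehead pads plus 3 on each cheek (11 pads) leave one input free. The sketch below gives each pad its own electrode index. The region names and the order of assignment are hypothetical, since the wiring of the actual head is not documented here.

```python
from typing import Dict, Tuple

MPR121_ELECTRODES = 12  # the MPR121 has 12 electrode inputs (ELE0-ELE11)

# Hypothetical layout: region names and ordering are illustrative only.
FACE_PADS: Dict[str, int] = {
    "forehead": 5,
    "left_cheek": 3,
    "right_cheek": 3,
}


def assign_electrodes(pads: Dict[str, int],
                      available: int = MPR121_ELECTRODES) -> Dict[int, Tuple[str, int]]:
    """Map each electrode index to (region, pad number within that region)."""
    total = sum(pads.values())
    if total > available:
        raise ValueError(f"{total} pads need more than {available} electrodes")
    mapping: Dict[int, Tuple[str, int]] = {}
    index = 0
    for region, count in pads.items():  # dicts keep insertion order
        for pad in range(count):
            mapping[index] = (region, pad)
            index += 1
    return mapping


mapping = assign_electrodes(FACE_PADS)
print(f"{len(mapping)} of {MPR121_ELECTRODES} electrodes used")  # 11 of 12 electrodes used
```

A fixed table like this is what lets a visualisation know which strip on the face a given reading came from.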

Designing the circuits was challenging, and so was choosing the material. For the first technical prototype, in which we used a 3x3 grid to test the capacitive sensor, we used copper tape. Although copper would have been the best material in terms of conductivity and its instant sticking surface, copper sheets big enough for our designs exceeded our budget, and using copper tape would have meant assembling each circuit from multiple parts.

The alternative materials were gold ($$$$), aluminum ($) and graphite ($). Luckily, Tom had two cans of graphite spray; we tried it on paper and it worked. We tested it with an LED (it blinks, by the way). We cut the designs out of matte white foil with a plotter and then sprayed them with the graphite spray. After reading up on how to make graphite a more efficient conductor, we tried the tip of rubbing the surface with a cloth or cotton buds. The result was a shiny metallic surface that added even more character to the look of the mannequin head.

Technical Aspects

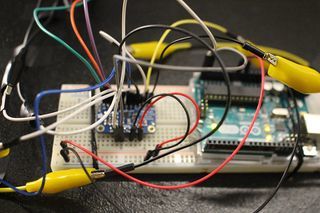

For the technical side of the prototype, we used an Arduino Uno and the MPR121 breakout to measure the capacitive values of the graphite strips. The Arduino only interpreted the sensor and acted as the intermediate chip that talks to the visualisation we made in Processing. To make this work, we rebuilt the library provided on the Adafruit GitHub so that we could calibrate the baseline values ourselves and lock them. We also built in a message system that Processing could understand: these serial messages are decoded by Processing and displayed on the screen (as shown in the 'Exhibition' tab).
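Locking the baseline matters because the MPR121 decides whether a pad is touched by comparing each electrode's filtered reading with its baseline: a touch adds capacitance and pulls the reading below the baseline. The Python below is a minimal sketch of that comparison with a fixed baseline and separate touch and release thresholds (hysteresis), so a reading hovering near one threshold does not flicker on and off. It is not the group's rebuilt Adafruit library; the threshold values and readings are made-up examples.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class LockedBaselinePad:
    """Touch detection for one electrode against a baseline calibrated once."""
    touch_threshold: float = 12.0   # example value, tune per pad
    release_threshold: float = 6.0  # lower than touch_threshold -> hysteresis
    baseline: float = 0.0
    touched: bool = False

    def calibrate(self, untouched_samples: List[int]) -> None:
        """Average readings taken while nobody touches the pad, then keep that value."""
        self.baseline = sum(untouched_samples) / len(untouched_samples)

    def update(self, reading: int) -> bool:
        """Feed one filtered reading; return whether the pad counts as touched."""
        delta = self.baseline - reading  # a touch lowers the reading
        if not self.touched and delta > self.touch_threshold:
            self.touched = True
        elif self.touched and delta < self.release_threshold:
            self.touched = False
        return self.touched


pad = LockedBaselinePad()
pad.calibrate([200, 202, 198, 200])
states = [pad.update(r) for r in [199, 190, 185, 192, 196, 199]]
print(states)  # [False, False, True, True, False, False]
```

One plausible reason to lock the baseline is that an automatically tracking baseline can slowly absorb a long, steady touch; the trade-off is that slow drift (for example humidity on the graphite) then calls for a fresh calibration.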

To give a proper look at the programs we wrote, we publish our Arduino Capacitive Touch Interpreter and the auto-connecting Processing script that visualises the values the MPR121 sensor provides.
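On the receiving side, the visualisation has to turn a stream of text lines from the serial port into per-electrode values. The published scripts define their own message format; the Python sketch below assumes a hypothetical format of one `V,<electrode>,<value>` line per reading and skips anything malformed, such as a half-received line right after the port opens. The same logic would live in the Processing sketch's serial-reading code.

```python
from typing import Dict, Iterable, Optional, Tuple

NUM_ELECTRODES = 12  # MPR121 electrode inputs


def parse_line(line: str) -> Optional[Tuple[int, int]]:
    """Decode a hypothetical 'V,<electrode>,<value>' line; None if malformed."""
    parts = line.strip().split(",")
    if len(parts) != 3 or parts[0] != "V":
        return None
    try:
        electrode, value = int(parts[1]), int(parts[2])
    except ValueError:
        return None
    if not 0 <= electrode < NUM_ELECTRODES:
        return None
    return electrode, value


def latest_values(lines: Iterable[str]) -> Dict[int, int]:
    """Keep only the most recent value per electrode, as a live display would."""
    values: Dict[int, int] = {}
    for line in lines:
        parsed = parse_line(line)
        if parsed is not None:
            electrode, value = parsed
            values[electrode] = value
    return values


stream = ["V,0,201\n", "1,187\n", "V,3,187\n", "V,0,195\n", "V,14,90\n"]
print(latest_values(stream))  # {0: 195, 3: 187}
```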

Files

Media:Rippleecho_for_cts.zip (file size: 485 KB)

Media:Capacitive-touch-skin.ino.zip (file size: 3 KB)

Exhibition

It has been scientifically proven that humans have the ability to recognize and communicate emotions through expressive touch. Recent research proved that force-sensitive screens, through machine learning, are also able to recognize users' emotions. From this starting point, we created Midas. Midas is designed to harvest a global database of human emotions expressed through touch. By giving up our unique emotional movements, we let machines gain emotional intelligence, leading to an equal communication platform.

By adding touch as an emotional receptor to Artificial Intelligence, we upload our unspoken ethical code into this new life form. This action is the starting point for a compassionate cohabitant.

Anatomical Machine Lesson: From Hairdryer to Kruimeldief

Cartography of Complex Systems & Anthropocene

Research

For my last project I was inspired to tackle a problem in the way we look at technology and problem solving for elderly people. Elderly people have trouble keeping up with new technology. We already know this, but as a designer I still think that we are designing in the wrong way. We use technology as we know it and can adjust ourselves to work in harmony with it. But for people with diseases, limitations or dementia, living with technology is totally different and, in my opinion, cold.

To show you a concrete example, I googled 'elderly' and 'technology':

What you see here are elderly people in contact with some kind of robot. The robot carries out some kind of social interaction or just gives health updates. Who would like to grow old in a world where this is the state of living in the future? I would like to see this differently, and I think this is a major opportunity for product designers to design this technology in a warm and logical way for the elderly. Nowadays there are more than 270,000 people with dementia in the Netherlands, and every hour 5 more people develop it, adding to this climbing number. By 2040 the number will reach 500,000. Although dementia mainly affects older people, it is NOT a normal part of ageing; this is a common misconception. Worldwide, around 50 million people have dementia, and there are nearly 10 million new cases every year. Alzheimer's disease is the most common form of dementia and may contribute to 60–70% of cases.

Other fields offer little prospect of a solution either. We also can't rely on the pharmaceutical industry: it recently decided to give up the search for an Alzheimer's cure, because companies thought success in the coming years was unlikely. https://qz.com/1282482/why-the-pharmaceutical-industry-is-giving-up-the-search-for-an-alzheimers-cure/ We simply can't prevent people from getting dementia.

I want to step into this gap as a designer and build a bridge between elderly people and technology. Not all technology is suitable for this problem. I want to create a framework that focuses on supporting and improving people's well-being through technology that is user-friendly, not intimidating and personally reinforcing.

This is my grandma. She suffers from dementia. Dementia is a horrible disease, and there are many different forms of it. Dementia is a general term for a decline in mental ability severe enough to interfere with daily life; memory loss is one example. Alzheimer's is the most common type of dementia, accounting for about 60 to 80 percent of cases.

Symptoms of dementia:
- Communication and language
- Ability to focus and pay attention
- Reasoning and judgment
- Visual perception

When these problems become too severe, elderly people end up in a nursing home. The main problem is that people with dementia stop doing everyday activities once they are in a nursing home. These include:
- Cooking
- Hygiene
- Eating/drinking
- Moving
- Going out

These daily activities help with the unconscious linking of memories. Without them, dementia gets worse.

Laser

When I was a child I became obsessed with a dilemma. My teacher told the class a story about a kid who found 2 infinite lasers. He decided to point the lasers into the sky, with his arms stretched out at a slight angle. The beams would travel further and further outwards as the light travels through infinite space. The question is: do the 2 lasers ever meet again and cross?
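The puzzle can be answered under idealised assumptions: flat space, perfectly straight beams and no beam spread. Each beam is then a straight line, and the horizontal gap between two straight lines changes linearly with height. Beams tilted outward only drift further apart and never cross, beams held exactly parallel never meet, and beams tilted inward cross exactly once. The Python sketch below computes the crossing point; the 1.6 m arm span and 5° tilt are made-up example values.

```python
import math
from typing import Optional, Tuple


def beam_crossing(x1: float, tilt1: float,
                  x2: float, tilt2: float) -> Optional[Tuple[float, float]]:
    """Crossing point (x, height) of two beams fired upward from x1 and x2.

    Tilts are angles from vertical in radians; positive tilts lean right.
    Returns None if the beams never meet above the starting height.
    """
    # Beam i reaches horizontal position x_i + h * tan(tilt_i) at height h.
    slope1, slope2 = math.tan(tilt1), math.tan(tilt2)
    if math.isclose(slope1, slope2):
        return None  # parallel: the gap between the beams never changes
    h = (x2 - x1) / (slope1 - slope2)
    if h <= 0:
        return None  # the lines only meet behind the starting point
    return x1 + h * slope1, h


tilt = math.radians(5)
print(beam_crossing(-0.8, -tilt, 0.8, tilt))  # arms tilted outward: None
print(beam_crossing(-0.8, tilt, 0.8, -tilt))  # tilted inward: meets about 9.1 m up
```

In the story the arms point outward, which is the first case: under these assumptions the gap at height h is 1.6 m + 2·h·tan(5°), which only grows, so the beams never meet again.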

This question really cracked my brain. I tried to draw what would happen and what the pattern would look like, but I didn't succeed.

What are lasers? For years lasers have been a hallmark of science fiction, yet much of our technology depends on them: range-finding devices, optical communication and, in everyday situations such as shopping, barcode scanners. The unique characteristics of laser light make all of these things possible.

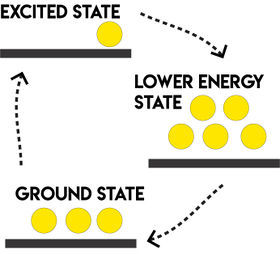

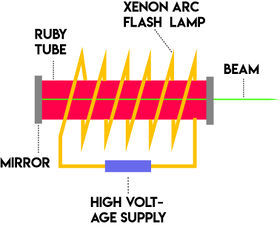

In 1960 Ted Maiman demonstrated the first laser by taking a cylinder of ruby and surrounding it with a xenon arc flash lamp of the kind used in aerial photography. An intense burst of light from the lamp starts the lasing. A normal lamp flash would promote a few electrons from the ground state to an excited state. They lose a bit of energy, falling to a lower energy state without giving off light, and then drop back to the ground state, giving off a burst of light. The light produced would be incoherent: a spectrum of colors and intensities, just as with my small green laser pencil later in this wiki.
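Why does lasing need such an intense flash? In thermal equilibrium, the share of electrons sitting in an excited state follows the Boltzmann factor exp(-E/kT), where E is the energy gap, k is Boltzmann's constant and T the temperature. The Python sketch below estimates this for a gap matching ruby's red emission at 694.3 nm at room temperature (300 K). It treats the transition as a simple two-level system and ignores level degeneracies, which is an approximation; the real ruby laser works with three energy levels.

```python
import math

H = 6.62607015e-34    # Planck constant, J*s (exact SI value)
C = 299_792_458.0     # speed of light in vacuum, m/s (exact)
K_B = 1.380649e-23    # Boltzmann constant, J/K (exact SI value)


def excited_to_ground_ratio(wavelength_m: float, temperature_k: float) -> float:
    """Equilibrium ratio N_excited / N_ground for a two-level system.

    The energy gap is taken from the emitted wavelength: E = h * c / wavelength.
    """
    gap = H * C / wavelength_m
    return math.exp(-gap / (K_B * temperature_k))


ratio = excited_to_ground_ratio(694.3e-9, 300.0)
print(f"excited/ground at room temperature: {ratio:.1e}")
```

The result is around 10⁻³⁰: without pumping, essentially every electron is in the ground state. Because exp(-E/kT) stays below 1 at any temperature, heating alone can never produce a population inversion; the energy has to be pumped in from outside.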

To create a laser takes an extremely powerful lamp. In the ruby laser, repeated flashes, called pumping, make something amazing happen. They supply so much energy that a 'population inversion' occurs: more electrons sit in a higher energy level than in the ground state, and they all go through the same loop: Ground state -> Excited state -> Lower energy state -> Beam! The light released starts an avalanche called 'stimulated emission'. The photon produced when one electron decays induces other excited electrons to decay at the same time and release nearly identical photons. That creates coherent light, meaning that the crests and troughs of every light wave in the beam match up.

At this point we have coherent light, but not yet the other two properties of laser light. Getting a narrow beam, with all the light rays parallel and of nearly a single wavelength, requires an addition to the ruby rod. Maiman silvered the ends to reflect the light within the ruby cylinder, and he made the two ends of the rod very precisely parallel to each other. Two things happen. First, any light rays that don't line up with the axis eventually just exit through the side of the cylinder. Second, the light parallel to the axis becomes intensified and narrowed in wavelength. The mirrored ends create a standing wave, which means only light of particular wavelengths can exist inside the cavity. A small hole in one of the mirrors, or a partially silvered mirror, lets the light escape, creating the familiar beam.

Since the ruby laser, lasers have become easy and cheap to manufacture. Luckily I can buy my laser pencil for under 10 bucks.
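The standing-wave condition can be written down: a wave fits between the mirrors when a whole number m of half-wavelengths spans the rod, so the allowed vacuum wavelengths are λ_m = 2nL/m, where L is the rod length and n the refractive index of ruby (about 1.76). Neighbouring modes are separated in frequency by c/(2nL). The Python sketch below lists a few allowed wavelengths around 694.3 nm, ruby's red line, for a hypothetical 2 cm rod; the rod length is an assumption, not Maiman's exact dimension.

```python
from typing import List

C = 299_792_458.0  # speed of light in vacuum, m/s


def cavity_modes(length_m: float, refractive_index: float,
                 near_wavelength_m: float, count: int) -> List[float]:
    """Allowed vacuum wavelengths lambda_m = 2 n L / m closest to a target."""
    round_trip = 2.0 * refractive_index * length_m  # optical round-trip path
    m_center = round(round_trip / near_wavelength_m)
    first = m_center - count // 2
    return [round_trip / m for m in range(first, first + count)]


def mode_spacing_hz(length_m: float, refractive_index: float) -> float:
    """Frequency gap between neighbouring standing-wave modes: c / (2 n L)."""
    return C / (2.0 * refractive_index * length_m)


L_ROD = 0.02    # hypothetical rod length: 2 cm
N_RUBY = 1.76   # approximate refractive index of ruby
for wl in cavity_modes(L_ROD, N_RUBY, 694.3e-9, 3):
    print(f"{wl * 1e9:.5f} nm")
print(f"mode spacing: {mode_spacing_hz(L_ROD, N_RUBY) / 1e9:.2f} GHz")
```

With these numbers, adjacent allowed wavelengths differ by only about 0.007 nm, which shows how the mirrored ends restrict the light to a set of sharply defined wavelengths.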

A laser is a light source that emits a narrow, coherent beam. Because of this, laser light is monochromatic, in contrast to other light sources, which mostly have a wide spectrum of wavelengths and phases. Laser light also travels forward as a nearly parallel beam that barely converges or diverges.
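Laser light does spread a little; it just spreads far less than other light. For an ideal Gaussian beam with waist radius w₀ and wavelength λ, the far-field half-angle divergence is θ ≈ λ/(πw₀), and the radius at distance z is w(z) = w₀·√(1 + (z/z_R)²) with z_R = πw₀²/λ. The Python sketch below plugs in 532 nm, a typical green-pointer wavelength, and a 0.5 mm waist; both values are assumptions, and real cheap pointers usually spread more than this ideal beam.

```python
import math


def rayleigh_range(waist_m: float, wavelength_m: float) -> float:
    """Distance from the waist over which the beam stays nearly parallel."""
    return math.pi * waist_m ** 2 / wavelength_m


def beam_radius(z_m: float, waist_m: float, wavelength_m: float) -> float:
    """1/e^2 radius of an ideal Gaussian beam at distance z from its waist."""
    z_r = rayleigh_range(waist_m, wavelength_m)
    return waist_m * math.sqrt(1.0 + (z_m / z_r) ** 2)


def half_angle_divergence(waist_m: float, wavelength_m: float) -> float:
    """Far-field half-angle divergence in radians."""
    return wavelength_m / (math.pi * waist_m)


WAVELENGTH = 532e-9  # assumed green-pointer wavelength
WAIST = 0.5e-3       # assumed 0.5 mm waist radius
print(f"divergence: {half_angle_divergence(WAIST, WAVELENGTH) * 1e3:.2f} mrad")
for z in (1.0, 10.0, 100.0):
    print(f"radius at {z:>5.0f} m: {beam_radius(z, WAIST, WAVELENGTH) * 1e3:.1f} mm")
```

Even after 100 m the ideal beam in this example is only a few centimetres wide, which is why a laser beam looks as if it neither converges nor diverges.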

The word laser is an acronym that stands for: Light Amplification by Stimulated Emission of Radiation

The laser has a long history, and much of the groundwork for it was laid during and after the Second World War, as with many other inventions. There are many different types of lasers: the laser medium can be a solid, gas, liquid or semiconductor. Lasers are commonly designated by the type of lasing material employed:

Solid-state lasers have the lasing material distributed in a solid matrix (such as the ruby or neodymium:yttrium-aluminum garnet "YAG" lasers). The neodymium-YAG laser emits infrared light at 1,064 nanometers (nm). A nanometer is 1 × 10⁻⁹ meters.
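The nanometre conversion also lets you go from a wavelength to the frequency of the light (f = c/λ) and the energy of a single photon (E = hc/λ). The Python sketch below does this for the Nd:YAG line at 1,064 nm and, as a comparison, for ruby's well-known red line at 694.3 nm.

```python
H = 6.62607015e-34    # Planck constant, J*s
C = 299_792_458.0     # speed of light in vacuum, m/s
EV = 1.602176634e-19  # joules per electronvolt
NM = 1e-9             # one nanometre in metres


def photon_properties(wavelength_nm: float) -> tuple:
    """Return (frequency in Hz, photon energy in eV) for a vacuum wavelength."""
    wavelength_m = wavelength_nm * NM
    frequency_hz = C / wavelength_m
    energy_ev = H * frequency_hz / EV
    return frequency_hz, energy_ev


for name, nm in [("Nd:YAG", 1064.0), ("ruby", 694.3)]:
    f, e = photon_properties(nm)
    print(f"{name}: {nm} nm -> {f:.3e} Hz, {e:.2f} eV per photon")
```

Longer wavelengths mean lower-energy photons: the infrared Nd:YAG photon carries about 1.17 eV, the red ruby photon about 1.79 eV.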

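Gas lasers include the familiar cutting lasers. Helium-neon lasers, the most common gas lasers, have a primary output of visible red light. CO2 lasers emit energy in the far-infrared and are used for cutting hard materials. Fluorescent tubes use a similar technique, exciting a gas with an electrical discharge, but without producing laser light.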

Excimer lasers (the name is derived from the terms excited and dimers) use reactive gases, such as chlorine and fluorine, mixed with inert gases such as argon, krypton or xenon. When electrically stimulated, a pseudo molecule (dimer) is produced. When lased, the dimer produces light in the ultraviolet range.

Dye lasers use complex organic dyes, such as rhodamine 6G. They have tunable wavelengths.

Semiconductor lasers, sometimes called diode lasers, are not solid-state lasers. These electronic devices are generally very small and use little power. They may be built into larger arrays, such as the writing source in some laser printers, or into CD players. They are found in a lot of the digital devices we use nowadays.

All these test