Computer vision narratives


Latest revision as of 07:49, 11 June 2018

Contents

- 1 Foreword/Introduction

- 2 Abstract

- 3 Central Question

- 4 Relevance of the Topic

- 5 Hypothesis

- 6 Research Approach

- 7 Key References

- 8 Literature

- 9 Experiments

- 10 Insights from Experimentation

- 11 Artistic/Design Principles

- 12 Artistic/Design Proposal

- 13 Realised work

- 14 Final Conclusions

- 15 Research DOC

- 15.1 Assignment or self formulated project description

- 15.1.1 Central question

- 15.1.2 Subquestions

- 15.1.2.1 What's the difference between seeing with human eyes, and seeing with computer eyes?

- 15.1.2.2 How can I use computer intelligence as a co-operator of a new narrative?

- 15.1.2.3 How can a computer see new forms/interpretations in abstract artworks?

- 15.1.2.4 How does computer intelligence observe the real world?

- 15.2 Set up of my research

- 15.3 Set up of your practice project

- 15.4 Role of your external partner

- 15.5 Economic aspects

- 15.6 Station you choose to work in

- 15.7 Planning and agenda


Foreword/Introduction

Abstract

Central Question

What if a machine could impose actions on a human?

How can I suggest the way machines, cameras and humans monitor what you're doing without being asked?

Can the machine see more than humans do? (create a fake world)

Can I build a world with no trace of reality? (AR/VR)

Can I create a machine that creates stories out of what it sees?

What if the machine creates stories based on

What if a machine could recognize a person and give that person commands?

infinite image rhyme

Relevance of the Topic

Hypothesis

Research Approach

Key References

Netherlands

The police are testing cameras on the highways to check whether people obey the law. Using object recognition, they can recognise the car, the licence plate and the windshield. Camera images of drivers who obey the law are destroyed immediately. By Dutch principles that is good. However, if you want to improve the object-recognition software, you also need images of drivers who are doing it right, so that you have two groups. It comes down to a choice between technical improvement and the privacy of citizens.

China

In China they go about it much more cleverly than in the Netherlands. They keep all the information they collect to improve their systems. Technical improvement has a much higher priority than privacy, so in China's case the decision has already been made.

Sleepwet (the Dutch Intelligence and Security Services Act 2017, nicknamed the "dragnet law")

Literature

4-D internet. The internet as we know it is of course present online, but it is also present offline. If you upload something that breaks the rules, someone may be on your doorstep the next day. If you order food, a person on a bicycle is at your door with your meal within 20 minutes. Living like this, there is no avoiding the internet. The confrontation is everywhere. The internet is no longer just a window onto the online world; the whole environment has become the internet. This is how things work nowadays. Children in groups 3 and 4 of primary school are taught their own mother tongue, but it is also becoming necessary for them to speak the language of the computer. Coding lessons. I believe this is really important for the next generation, because only then will they not remain ignorant of the power machines have in our daily lives. If the new generation does not understand how these machines work, this leads to an un

Narrative intelligence.

Humans give meaning to the world through stories. People are curious about how other people have lived, and they pass such stories on to each other (perhaps adding their own interpretation, so that the story gradually gets distorted). To let machines give meaning to the world, many people are also working on making machines read stories, learn to understand them and then write stories themselves. Narrative can take people somewhere mentally, and the story in each person's head is different. Everyone gives the story a different colour, shape or pattern, and so it generates a reality of its own for that specific person. Narrative can also guide someone's life. Think of religion, where every story carries meaning about how people ought to behave. Machines have no notion of what a story can do. Earlier I mentioned The Stanley Parable, and it essentially captures the relationship between reader and narrator. In that game the boundary between the two blurs: you are actually in conversation with each other, not verbally but mentally. This creates a very large web of storylines that seem endless and flow into one another.

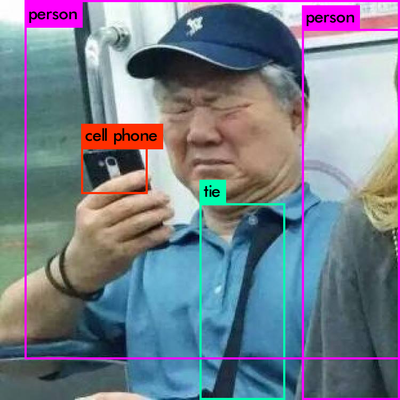

Object detection: the problem is not only solving the 'what?', but also solving the 'where?'.
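A detector's output is usually a class label (the 'what') plus a bounding box (the 'where'). A common way to score the 'where' is intersection over union (IoU), the overlap between the predicted box and the true box. Below is a minimal sketch. The `Detection` record is a hypothetical structure for illustration, and the 0.5 threshold follows the usual PASCAL VOC convention rather than anything the detectors on this page prescribe.

```python
from dataclasses import dataclass


@dataclass
class Detection:
    label: str              # the "what"
    box: tuple              # the "where": (x1, y1, x2, y2) in pixels
    confidence: float = 1.0


def iou(a, b):
    """Intersection over union of two (x1, y1, x2, y2) boxes, 0.0 to 1.0."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0, ix2 - ix1) * max(0, iy2 - iy1)
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def is_correct(pred, truth, threshold=0.5):
    """A detection only counts if both the 'what' and the 'where' match."""
    return pred.label == truth.label and iou(pred.box, truth.box) >= threshold
```

A box shifted by half its width overlaps by only a third (IoU 1/3), so it would not count as a hit even with the right label.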

The difference between traditional and technical images, then, would be this: the first are observations of objects, the second computations of concepts. The first arise through depiction, the second through a peculiar hallucinatory power that has lost its faith in rules. This essay will discuss that hallucinatory power. - V. Flusser

The Traditional image - observation of objects/concepts - depiction of image dependent on rules

The Technical image - computations of concepts - image with hallucinatory power, independent of rules

Object Recognition

Object recognition relies on a dataset on which the machine has been trained. This dataset consists of images sorted by category, each labelled with the correct class. You can choose how often and for how long you train it: the longer you train it, the better it will recognise things. Only once it has been trained can you really use the software. You can then load photos, videos or live video for it to recognise. The software is very fast and accurate. In the end, though, everything depends on how it was trained and on what kind of dataset.

The idea that the computer can literally perceive without a human looking at the screen is remarkable. In certain contexts this can be very useful. Examples are surveillance cameras, or searching for particular cells in the body (loading images of diseased cells and searching the body for similar cells). What makes it so interesting, though, is that the output is based on images that people chose themselves. The images are merely a reference for the computer, and that is how it can recognise multiple objects in one image. But what if you make the input (the dataset) your own, with images from your own life? Then the computer looks the way you look. It still uses object recognition, which is of course completely inhuman, but with personal input from a human. Letting a computer see in this way is something a human could not really (or would not want to) do.
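A dataset of "images sorted by category, each labelled with the correct class" is often stored as one folder per class, with the folder name acting as the label. Below is a minimal sketch of reading such a dataset into (image, label) pairs. It assumes a hypothetical layout `dataset/<class_name>/<image>.jpg`, and `load_labelled_images` is an illustrative name, not part of any tool used here. Making the dataset "your own" would then simply mean filling those folders with your own pictures.

```python
from pathlib import Path

IMAGE_SUFFIXES = {".jpg", ".jpeg", ".png"}


def load_labelled_images(root):
    """Return (image_path, class_name) pairs from a folder-per-class layout.

    Assumes the hypothetical layout root/<class_name>/<image file>;
    files that are not images are skipped.
    """
    pairs = []
    for class_dir in sorted(p for p in Path(root).iterdir() if p.is_dir()):
        for image_path in sorted(class_dir.iterdir()):
            if image_path.suffix.lower() in IMAGE_SUFFIXES:
                pairs.append((image_path, class_dir.name))
    return pairs
```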

Experiments

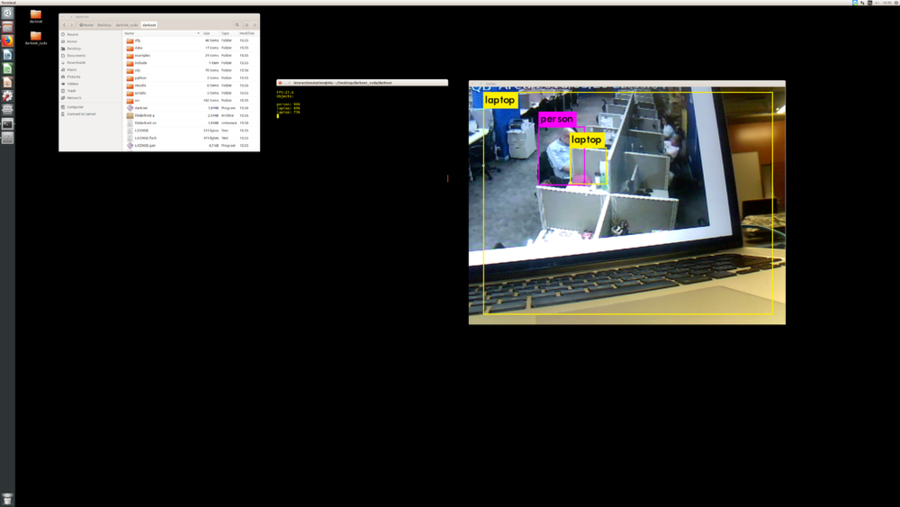

You Only Look Once

Object Recognition

I used existing code to learn how object detection works, what kind of database it uses and what the possibilities are. This kind of computer vision is used in self-driving cars, army drones, surveillance cameras and so on. It makes predictions based on what is in its database. There are several other databases you can connect to it. COCO is a dataset with far more images that this software can use to learn to identify more objects. I tried to get the software running in real time on my computer, but unfortunately my graphics card is not powerful enough (or I did something wrong). So now I'm trying to

I have now started on a simpler kind of software: motion detection. It can observe any movement in live camera feeds or videos. The movement can be detected in great detail, or more coarsely.
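The motion-detection software used here isn't named. The simplest form of the technique is frame differencing: compare each frame with the previous one and mark the pixels that changed. Below is a minimal sketch that assumes grayscale frames arrive as NumPy arrays (for example grabbed from a camera with OpenCV). The `threshold` parameter is the "great detail versus coarse" knob described above.

```python
import numpy as np


def motion_mask(prev_frame, frame, threshold=25):
    """Frame differencing: True where brightness changed by more than
    `threshold` (0-255) between two grayscale uint8 frames.

    A low threshold picks up movement in great detail (and more noise);
    a high threshold only reacts to larger changes.
    """
    # int16 so the subtraction can go negative without wrapping around
    diff = np.abs(frame.astype(np.int16) - prev_frame.astype(np.int16))
    return diff > threshold


def motion_amount(prev_frame, frame, threshold=25):
    """Fraction of pixels that moved, between 0.0 and 1.0."""
    return float(motion_mask(prev_frame, frame, threshold).mean())
```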

Finally I got real-time object detection working. I did this on a Linux machine, because it is much faster than my MacBook Pro. As you can see, it detects a lot of 'objects'. It draws a bounding box around every object it recognises, and it also gives a percentage showing how sure the system is. This data can be used to monitor a particular location at a particular time. This kind of technique is used by the Chinese government to supervise busy crossroads and check whether everyone is obeying the rules; people who don't end up on a blacklist. From a Western perspective, this idea of monitoring citizens is very strange and leaves no room for privacy. This way of monitoring the world is tragically overanxious.
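Darknet draws these boxes and percentages itself. To reuse the detections in your own images, the same idea can be sketched with Pillow. This is a minimal sketch that assumes detections come as `(label, confidence, (x1, y1, x2, y2))` tuples. That format is hypothetical, because each YOLO wrapper returns its own structure. The 0.5 cut-off for hiding unsure detections is an arbitrary choice.

```python
from PIL import Image, ImageDraw


def draw_detections(image, detections, min_confidence=0.5):
    """Return a copy of `image` with a box and a 'label 87%' tag per detection.

    `detections`: list of (label, confidence, (x1, y1, x2, y2)) tuples,
    a hypothetical format chosen for this sketch.
    """
    annotated = image.convert("RGB")  # convert() returns a copy
    draw = ImageDraw.Draw(annotated)
    for label, confidence, box in detections:
        if confidence < min_confidence:
            continue  # skip detections the model is unsure about
        draw.rectangle(box, outline=(255, 0, 0), width=3)
        draw.text((box[0] + 4, box[1] + 4), f"{label} {confidence:.0%}", fill=(255, 0, 0))
    return annotated
```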

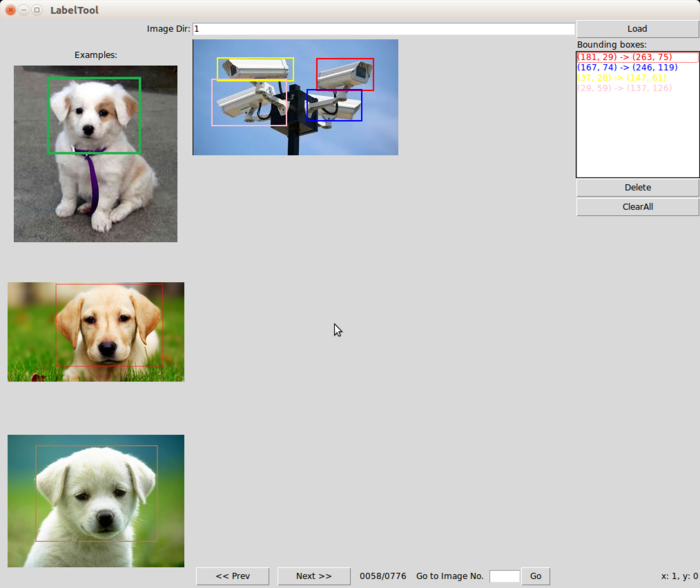

The BBox tool lets you draw bounding boxes by hand, which you can use to create your own datasets for Darknet YOLO. I downloaded ±700 images of security webcams and marked exactly where the cameras were in every image. https://www.youtube.com/watch?v=aE1kA0Jy0Xg After labelling every camera in the images (±700), I trained the computer to recognise cameras. Unfortunately it didn't work. I think this was because the folder also contained pictures without a camera in them, so those images were not used. I then tried the dataset from the tutorial, and that one did work, partly because that model had been trained for a very long time.
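Whatever tool draws the boxes, Darknet YOLO expects one `.txt` label file per image. Each line holds `<class_id> <x_center> <y_center> <width> <height>`, with all values relative to the image size (0.0 to 1.0). Below is a minimal sketch of that conversion. It assumes the box is given as pixel corners (x1, y1, x2, y2), and the BBox tool's own output format may differ. `to_yolo_line` is a hypothetical helper name.

```python
def to_yolo_line(class_id, box, image_width, image_height):
    """Convert a pixel box (x1, y1, x2, y2) into one Darknet YOLO label line:
    '<class_id> <x_center> <y_center> <width> <height>', all relative
    to the image size (0.0 - 1.0)."""
    x1, y1, x2, y2 = box
    x_center = (x1 + x2) / 2 / image_width
    y_center = (y1 + y2) / 2 / image_height
    width = abs(x2 - x1) / image_width
    height = abs(y2 - y1) / image_height
    return f"{class_id} {x_center:.6f} {y_center:.6f} {width:.6f} {height:.6f}"


# A camera (class 0) filling the middle of a 400x200 image:
print(to_yolo_line(0, (100, 50, 300, 150), 400, 200))
```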

Now that I know how to create my own dataset, this knowledge opens up new ideas about what this software can do or could become.


Nightmare

Nightmare is actually the same idea as object detection, but run backwards. The output you get is really beautiful. The machine reproduces the images, but they look as if they have been edited with a weird Photoshop brush. There are eyes everywhere and it looks really dreamy. Nobody would expect images like this to come out. The idea of letting the computer create its own artworks is really cool. As a user you can also choose between different segmentation options.
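"Backwards" here means DeepDream-style gradient ascent. Instead of asking which object is in the image, you change the image itself so that chosen parts of the network respond more strongly to it. A real implementation backpropagates through a trained network. The NumPy sketch below only shows the principle. It uses a hypothetical one-layer linear "feature detector" (`pattern`) in place of a network layer, so its gradient with respect to the image is simply the pattern itself.

```python
import numpy as np


def activation(image, pattern):
    """How strongly a toy linear feature detector responds to the image."""
    return float(np.sum(pattern * image))


def dream(image, pattern, steps=10, step_size=0.05):
    """Gradient ascent on the image: nudge the pixels so the detector
    'sees' more of its pattern. For this linear detector the gradient of
    the activation with respect to the image is just `pattern`."""
    x = image.astype(np.float64).copy()
    for _ in range(steps):
        grad = pattern
        x += step_size * grad / (np.abs(grad).mean() + 1e-8)  # normalised step
        x = np.clip(x, 0.0, 1.0)  # keep pixels in a valid range
    return x
```

With a real network the pattern is whatever a layer has learned (eyes, for instance), which is why those shapes start appearing everywhere.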

Virtual World recognition

Real-Time recognition

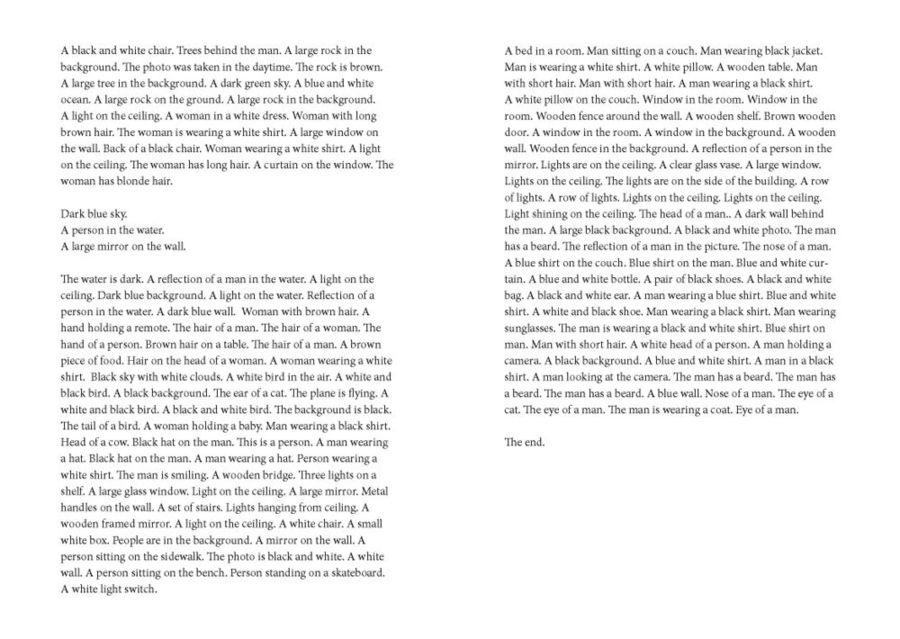

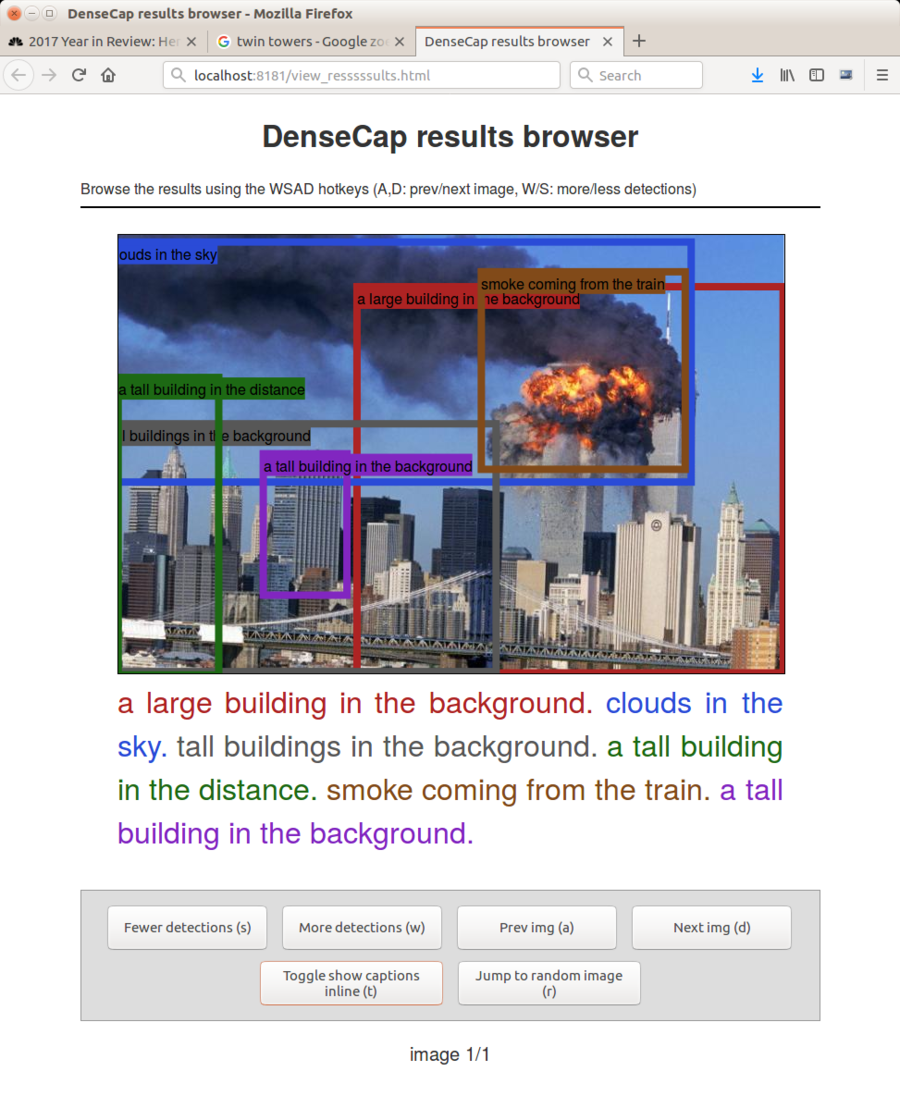

Densecap

[Densecap Github] This software uses Torch to create dense captions for an image, so it produces descriptions that are close to what is in the image. The captions come from a model trained on a huge dataset of labelled images, which makes it possible to get close to reality.

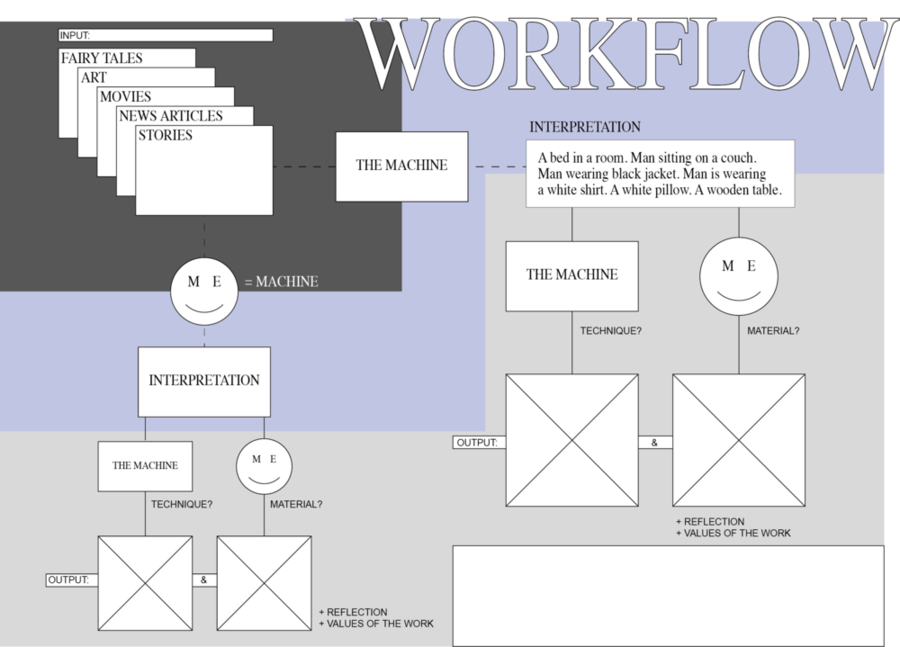

After a talk with Kim, I decided to create a workflow describing how to do my experiments and what these experiments could become. This translation of the Titanic story is one way to create a work. When I change the input and reshape the output, though, it starts to become more of a visual research documentation. I keep this workflow document in mind to understand where my experiments are going.

Below are the experiments based on Densecap and my workflow.

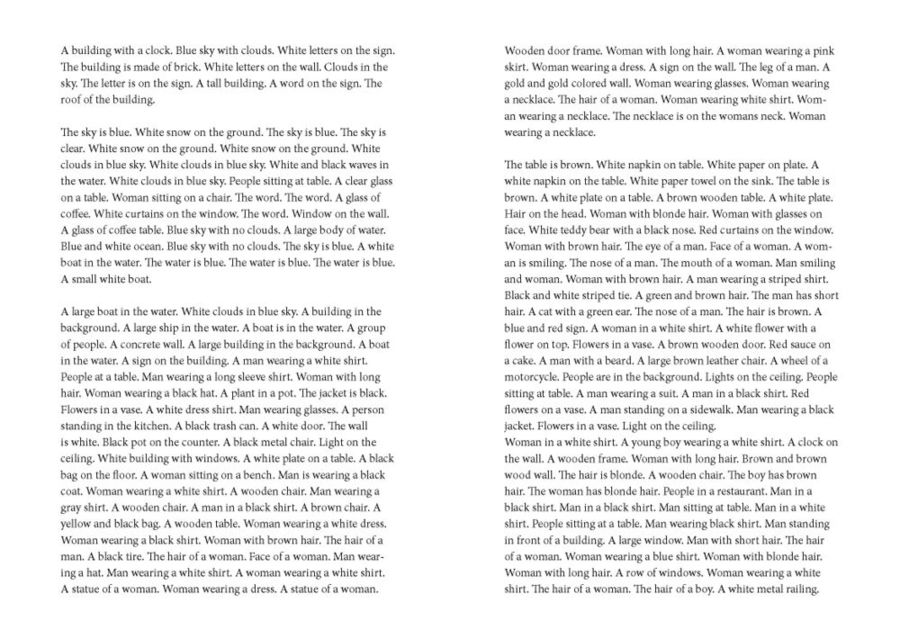

Movies

In this experiment I tried to summarise Titanic. I took 34 screenshots of the movie in chronological order and ran them through Densecap. The computer created around 10 captions for each screenshot. After that I designed all the text as a book. When you read it, it is very unclear what kind of story it is. Some of the captions are really good and specific; others are more general. This could become a poetic and strange story made out of movie screenshots.
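The step from Densecap output to book text can be automated. Below is a minimal sketch that assumes the `results.json` layout used by the scripts further down this page: `{"results": [{"img_name": ..., "captions": [...], ...}]}`. It sorts by file name, so zero-padded screenshot names such as `thumb0001.jpg` keep the movie's chronological order. `captions_as_book` is a hypothetical helper name, and `per_image` simply keeps the first N captions of each screenshot.

```python
import json


def captions_as_book(results_path, per_image=10):
    """Turn Densecap output into one block of text per screenshot,
    in file-name (= chronological) order."""
    with open(results_path) as f:
        results = json.load(f)["results"]
    pages = []
    for result in sorted(results, key=lambda r: r["img_name"]):
        pages.append("\n".join(result["captions"][:per_image]))
    return "\n\n".join(pages)
```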

how to create a gif like this:


Convert an mp4 to jpgs (the first 22 seconds, 25 frames per second):

$ ffmpeg -ss 00:00:00 -t 00:00:22 -i <name-of-file>.mp4 -r 25.0 yourimage%4d.jpg

Convert a whole movie to jpgs, one frame every minute:

$ ffmpeg -i <name-of-movie>.mp4 -vf fps=1/60 thumb%04d.jpg

Put all jpgs into Densecap:

$ th run_model.lua -input_dir path/to/jpgfolder -box_width 3 -num_to_draw 4 -output_dir /path/to/output/folder

Convert the jpgs to a gif:

$ convert *.jpg <name>.gif
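If ImageMagick isn't installed, Pillow can do the last step as well. Below is a minimal sketch. The 10 fps frame rate is an arbitrary choice, and `frames_to_gif` is a hypothetical helper name. File names are sorted so that zero-padded names like `yourimage0001.jpg` stay in order.

```python
from PIL import Image


def frames_to_gif(frame_paths, out_path, fps=10):
    """Pillow version of `convert *.jpg <name>.gif`: combine frames into an
    animated GIF that loops forever."""
    frames = [Image.open(p).convert("RGB") for p in sorted(frame_paths)]
    frames[0].save(
        out_path,
        save_all=True,             # write every frame, not only the first
        append_images=frames[1:],
        duration=int(1000 / fps),  # milliseconds per frame
        loop=0,                    # 0 = loop forever
    )
```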

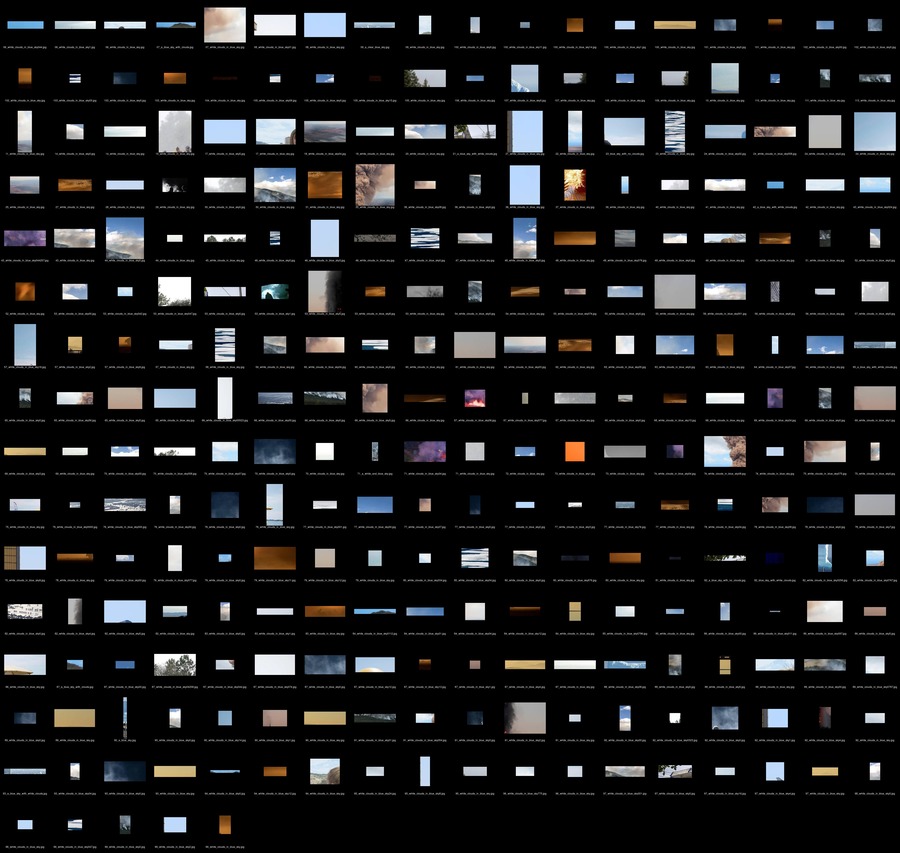

- montage for WCIBS

montage *in*.jpg -tile 5x5 -geometry 1280x720+5+5 -background '#000000' out.jpg
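The montage command scales every image into a 1280x720 tile, adds 5 px of black around it and lays the tiles out in a 5x5 grid. Below is a rough Pillow equivalent, in case you want the same grid inside a Python script. It is a sketch with two stated differences. First, Pillow's `thumbnail` only shrinks images, whereas montage also enlarges small ones. Second, extra images add rows here instead of starting a new page. `contact_sheet` is a hypothetical helper name.

```python
from PIL import Image


def contact_sheet(paths, columns=5, tile=(1280, 720), spacing=5, background=(0, 0, 0)):
    """Rough equivalent of `montage -tile 5x5 -geometry 1280x720+5+5
    -background '#000000'`: paste images into a grid, each scaled to fit
    inside `tile`, with `spacing` pixels of background around every tile."""
    rows = -(-len(paths) // columns)  # ceiling division
    cell_w, cell_h = tile[0] + 2 * spacing, tile[1] + 2 * spacing
    sheet = Image.new("RGB", (columns * cell_w, rows * cell_h), background)
    for index, path in enumerate(paths):
        with Image.open(path) as img:
            img = img.convert("RGB")
            img.thumbnail(tile)  # keep the aspect ratio, fit inside the tile
            col, row = index % columns, index // columns
            # centre the image inside its cell
            x = col * cell_w + spacing + (tile[0] - img.width) // 2
            y = row * cell_h + spacing + (tile[1] - img.height) // 2
            sheet.paste(img, (x, y))
    return sheet
```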

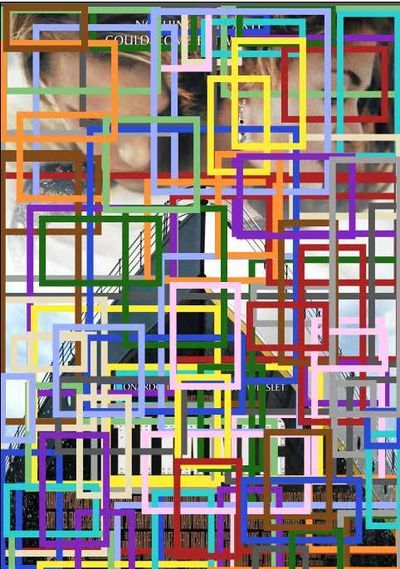

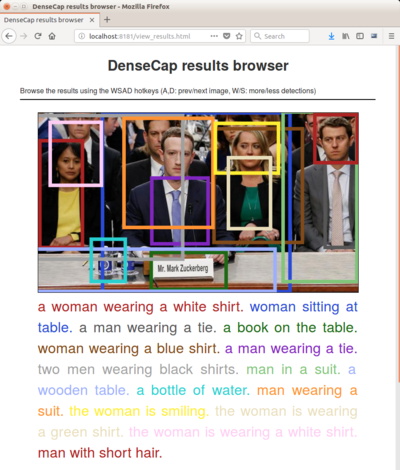

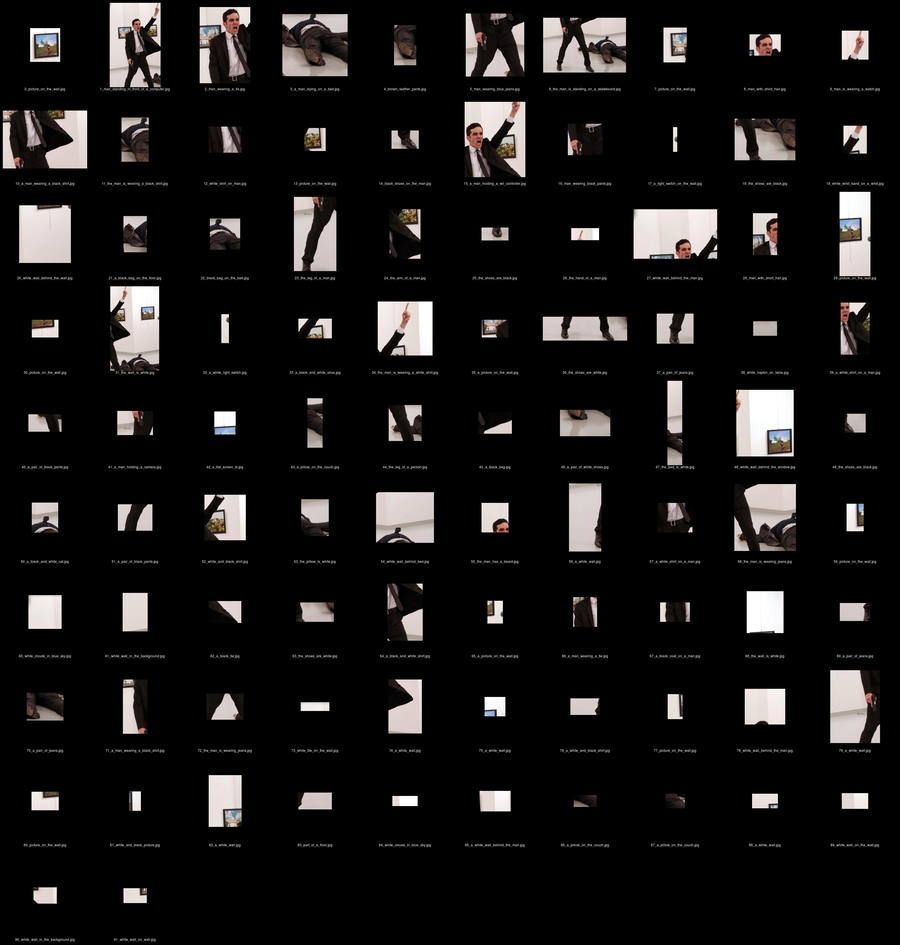

News stories

I took this picture of Mr Zuckerberg during his hearing (12-04-2018) from the internet. I cut out every detection box and put them one after another, to get an idea of how the machine looks through the image. What does it see first? Does it detect every person in the image? When I first saw this picture, the first thing I noticed was Zuckerberg's pale, empty face. The machine has no focal point and simply looks at a picture in a different way.

DECONSTRUCTING DENSECAP - INTERPRETATIONS OF THE MACHINE

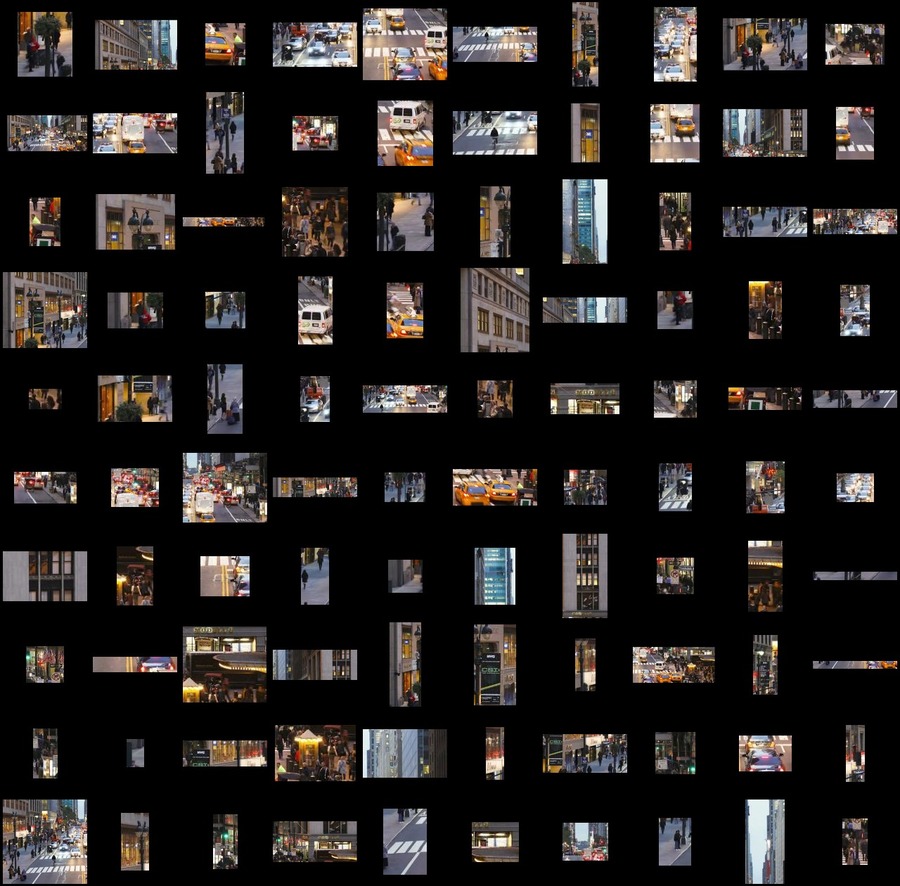

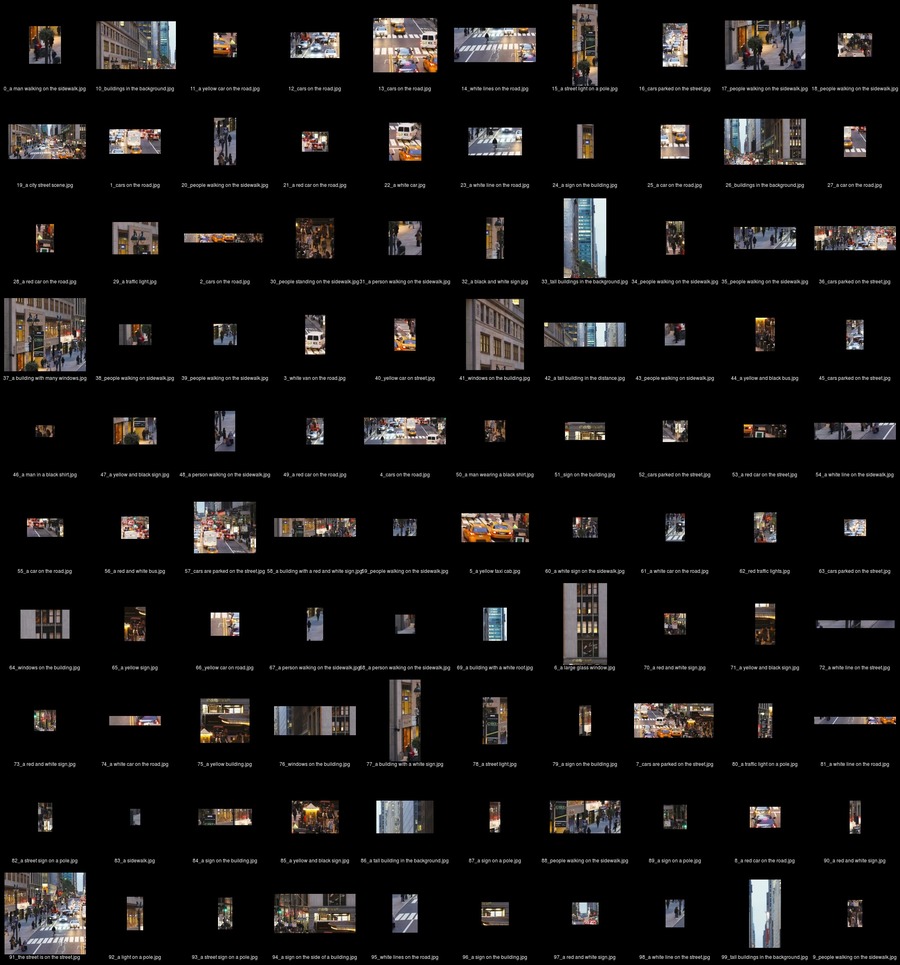

Cropped images

All the detections in order, from beginning to end


These are 11 images, starting from the first image. Each time, I cut out the first detection and put it back into Densecap. It became an endless string of iterations of a yellow wall/background.
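The cut-out-and-feed-back process can be written as a loop. Below is a minimal sketch. Densecap itself is a Lua/Torch program, so `detect` is a hypothetical stand-in callable: it takes a PIL image and returns `(caption, (x, y, w, h))` pairs with the first detection first. The boxes use the same x, y, width, height order as the `results.json` boxes in the crop scripts below.

```python
from PIL import Image


def iterate_first_detection(image, detect, iterations=11):
    """Feed the first detected region back into the detector, over and over.

    `detect` is a hypothetical stand-in for Densecap: it takes a PIL image
    and returns (caption, (x, y, w, h)) pairs, first detection first.
    Returns the caption found at every step.
    """
    captions = []
    for _ in range(iterations):
        detections = detect(image)
        if not detections:
            break  # nothing left to zoom into
        caption, (x, y, w, h) = detections[0]
        captions.append(caption)
        image = image.crop((int(x), int(y), int(x + w), int(y + h)))
        if image.width < 2 or image.height < 2:
            break  # the crop has shrunk to (almost) nothing
    return captions
```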

the shirt is yellow

a yellow wall

a yellow background

the yellow wall behind the cat

the sky is clear

the sky is clear

the wall is yellow

the wall is yellow

the wall is yellow

the wall is yellow

yellow and white background

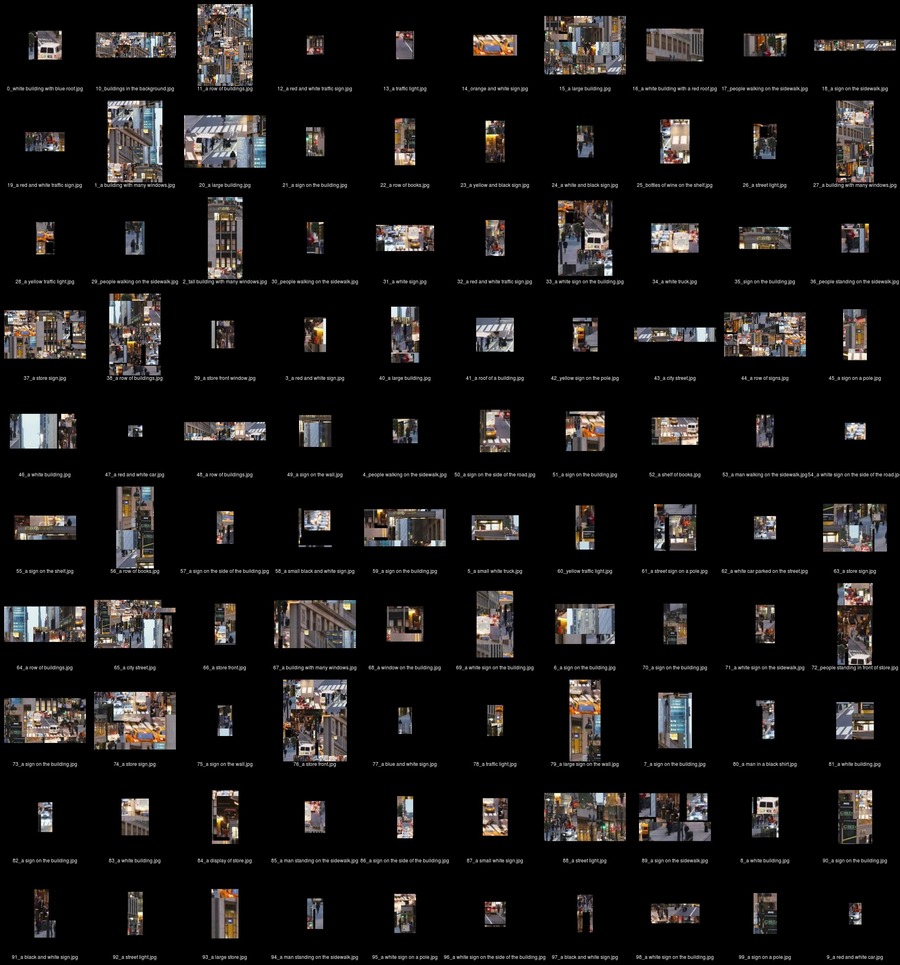

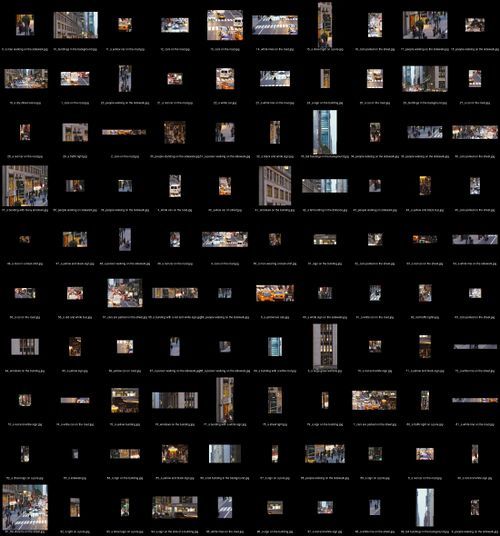

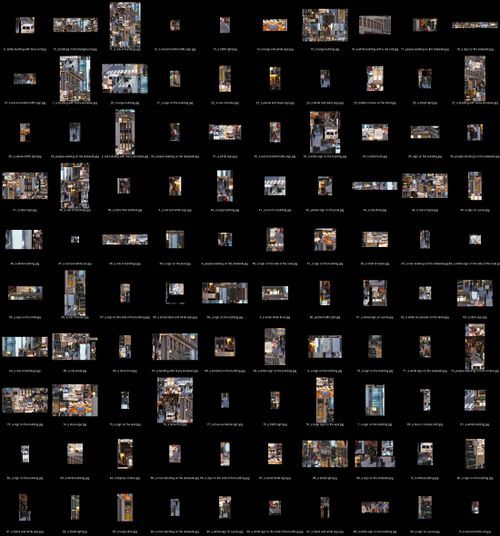

23-04-18 Today I wrote a Python script that slices every detected object into a new jpg, so each detected object can be saved into a category. This creates a new kind of database that the computer can use; maybe I can create new datasets from detected objects. I still have to write a piece of code that gives each cropped jpg the right title. The title should look like this: person_walking_on_the_sidewalk_23-04-18-20-30-34 = <name of the object and what it is doing>_<date>_

import json
import os
from PIL import Image

with open("results.json") as f:
    data = json.load(f)

boxes = data["results"][0]["boxes"]  # the boxes of the first image in results.json
img = Image.open("manhattan.png").convert("RGB")  # the image to crop; RGB so it can be saved as jpg

os.makedirs("cropped", exist_ok=True)  # the output folder must exist

for i, box in enumerate(boxes[:100]):  # at most the first 100 boxes
    x1, y1, w, h = box
    x2, y2 = x1 + w, y1 + h  # from xywh to x1y1x2y2
    print(x1, y1, x2, y2)
    crop_img = img.crop((int(x1), int(y1), int(x2), int(y2)))
    crop_img.save("cropped/img_%d.jpg" % i)  # save image
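The naming step that the note above still lists as a to-do could look something like this. It is a minimal sketch that assumes the timestamp in the example is day-month-year-hour-minute-second. `crop_title` is a hypothetical helper name. Two crops with the same caption in the same second would get the same name, and adding a counter would avoid that.

```python
import re
from datetime import datetime


def crop_title(caption, when=None):
    """Build a file name like person_walking_on_the_sidewalk_23-04-18-20-30-34.jpg
    from a Densecap caption and a timestamp (day-month-year-hour-minute-second,
    as read from the example; the exact format is an assumption)."""
    when = when or datetime.now()
    # keep lowercase letters, digits and spaces, then join the words with underscores
    words = re.sub(r"[^a-z0-9 ]+", "", caption.lower()).split()
    return "_".join(words) + "_" + when.strftime("%d-%m-%y-%H-%M-%S") + ".jpg"
```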

To create this overview of the cropped images, go to the right folder and run:

$ montage *.jpg -tile 10x10 -background "#000000" montage.jpg

Or, if you want each file name shown under its picture, use:

$ montage -label '%f' -tile 10x10 -background "#000000" -fill "#FFFFFF" -geometry '200x200+20+20>' $(ls -1 *.jpg | sort -g) out.jpg

or if you want to create a crazy mashup of all these images, use:

$ montage *.jpg -tile 8x8 -background "#000000" -geometry '200x200+-70+-70>' montage.jpg

Mess up the images (colour glitch):

convert *.jpg -fuzz 50% -fill 'rgb(0,255,0)' -opaque 'rgb(255,0,255)' -black-threshold 50% output.jpg
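In this command, `-opaque` replaces one colour with the `-fill` colour, and `-fuzz` widens "one colour" to every colour close enough to it. Below is a rough NumPy approximation of that part, useful if you want the glitch inside a Python pipeline. It assumes H x W x 3 RGB uint8 arrays. ImageMagick's own colour-distance metric may differ in detail, and `-black-threshold` is left out. `replace_colour` is a hypothetical helper name.

```python
import numpy as np


def replace_colour(pixels, target, fill, fuzz=0.5):
    """Approximate `-fuzz <fuzz> -fill <fill> -opaque <target>`: every pixel
    whose RGB distance to `target` is within `fuzz` (a fraction of the
    largest possible distance) becomes `fill`. Returns a new array."""
    rgb = pixels.astype(np.float64)
    distance = np.linalg.norm(rgb - np.array(target, dtype=np.float64), axis=-1)
    max_distance = np.sqrt(3) * 255  # black to white
    out = pixels.copy()
    out[distance <= fuzz * max_distance] = fill
    return out
```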

"a large mirror","a metal railing","a white metal train","a white ceiling fan","the ceiling is made of metal","light on the ceiling","a white ceiling","a sign on the sidewalk","white tile on wall","the ceiling is white","a metal pole","white tile on the wall","a train platform","a light on the pole","white tile on the floor","light fixture on ceiling","the sink is white","a white ceiling fan","light on the ceiling","a large white plane","white ceiling fan","a white train","white ceiling ceiling","a metal pole","a metal pole","a light pole","white tile on wall","light on the wall","a white ceiling","a floor in the floor","white tile on the wall","white ceiling lights","white tile on wall","white tile on wall","white light on the ceiling","white light on the ceiling","a metal fence","white clouds in blue sky","a metal pole","white tile on the wall","the floor is made of wood","white tile on the wall","white tile on wall","white ceiling in the ceiling","the wall is made of metal","white tile on wall","a metal pole","person walking on sidewalk","white cabinets on the wall","white tile on the wall","a metal pole","white tile on wall","a white ceiling","the ceiling is white","a brown tile floor","white tile on the wall","the floor is tiled","white tile on wall","white tile on the wall","white ceiling on ceiling","a white metal pole","a brown floor","white clouds in blue sky","a yellow line on the floor","a ceiling light","the floor is made of wood","the floor is made of wood","white ceiling in the ceiling","a white line in the sky","white ceiling in the ceiling","a concrete sidewalk","the fence is white","white tile on the wall","a tile on the floor","a floor in the bathroom","part of a floor","the floor is tiled","white tile on the wall","part of a floor","the floor is made of wood","a white tile floor","part of a floor","the floor is made of wood","white tile on the floor"

This code crops every image listed in a results.json file, which makes it possible to cut a whole movie into pieces.

import json
import os
from PIL import Image

with open("results.json") as f:
    data = json.load(f)

# First loop: every image listed in results.json
for result in data["results"]:
    img_name = result["img_name"]
    img = Image.open(img_name).convert("RGB")  # RGB so the crops can be saved as jpg
    captions = result["captions"]
    boxes = result["boxes"]

    dir_name = os.path.splitext(img_name)[0]  # "frame0001.jpg" -> "frame0001"
    os.makedirs(dir_name, exist_ok=True)

    # Second loop: all the captions and boxes for that image
    for j, (box, caption) in enumerate(zip(boxes, captions)):
        x1, y1, w, h = box
        x2, y2 = x1 + w, y1 + h  # from xywh to x1y1x2y2
        crop_img = img.crop((int(x1), int(y1), int(x2), int(y2)))

        # e.g. "03_a_man_in_a_hat.jpg"; zero-padded so files sort in detection order
        name_no_space = caption.replace(" ", "_").replace("/", "_")
        file_name = os.path.join(dir_name, "%02d_%s.jpg" % (j, name_no_space))
        try:
            crop_img.save(file_name)
        except OSError as err:
            print("Could not crop: " + file_name, err)

Fairy Tales

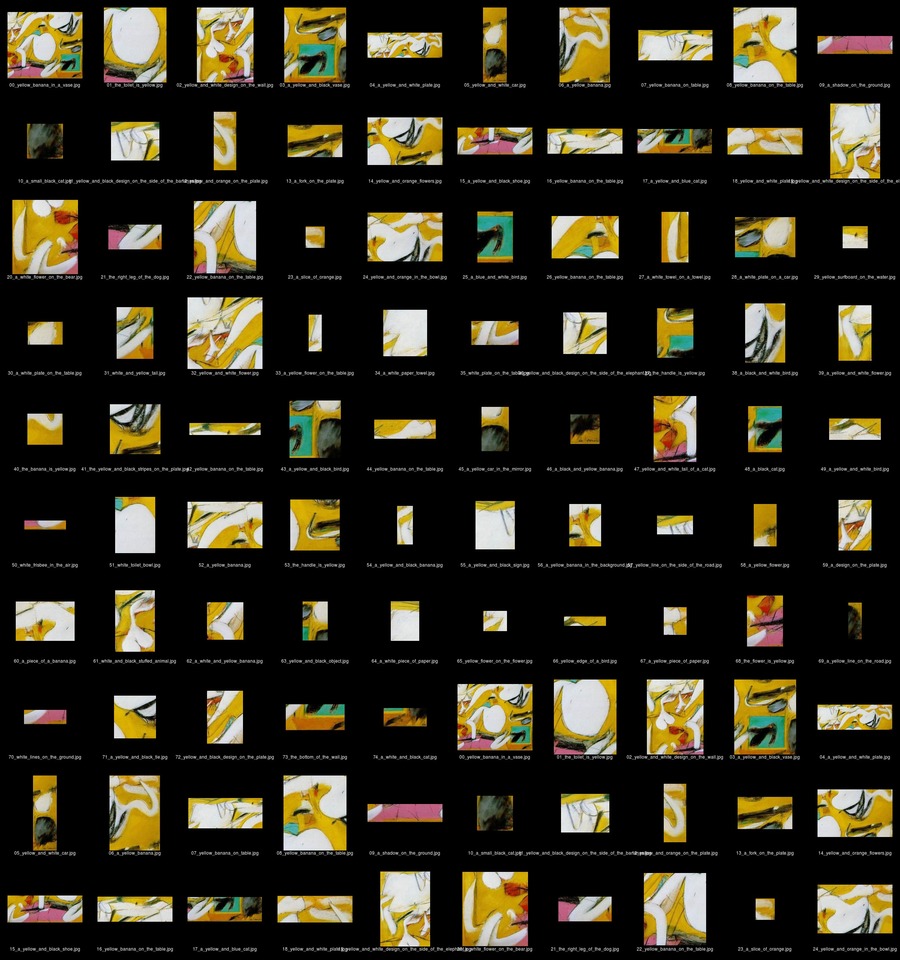

Art

visible thinking routine

The way humans look at things is a way of giving meaning to an image. A computer looks very differently from a human: computers don't see any relationships in images.

espeak "yellow banana in a vase the toilet is yellow yellow and white design on the wall a yellow and black vase a yellow and white plate yellow and white car a yellow banana yellow banana on table yellow banana on the table a shadow on the ground a small black cat yellow and black design on the side of the bananas yellow and orange on the plate a fork on the plate yellow and orange flowers a yellow and black shoe yellow banana on the table a yellow and blue cat yellow and white plate yellow and white design on the side of the elephant a white flower on the bear the right leg of the dog yellow banana on the table a slice of orange yellow and orange in the bowl a blue and white bird yellow banana on the table a white towel on a towel a white plate on a car yellow surfboard on the water a white plate on the table white and yellow tail yellow and white flower a yellow flower on the table a white paper towel white plate on the table yellow and black design on the side of the elephant the handle is yellow a black and white bird a yellow and white flower the banana is yellow the yellow and black stripes on the plate yellow banana on the table a yellow and black bird yellow banana on the table a yellow car in the mirror a black and yellow banana yellow and white tail of a cat a black cat a yellow and white bird white frisbee in the air white toilet bowl a yellow banana the handle is yellow a yellow and black banana a yellow and black sign a yellow banana in the background yellow line on the side of the road a yellow flower a design on the plate a piece of a banana white and black stuffed animal a white and yellow banana yellow and black object a white piece of paper yellow flower on the flower yellow edge of a bird a yellow piece of paper the flower is yellow a yellow line on the road white lines on the ground a yellow and black tie yellow and black design on the plate the bottom of the wall a white and black cat" -w wav.wav
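The espeak command above was assembled by hand. Below is a minimal sketch that builds it from a list of captions instead, for example the captions of one image in `results.json`. `speak_captions` is a hypothetical helper name, and the only espeak option it relies on is `-w <file>`, taken from the command above, which writes a WAV file. Passing the arguments as a list, without a shell, means captions containing quotes can't break the command.

```python
import subprocess


def speak_captions(captions, wav_path="wav.wav", run=True):
    """Let the computer read its own captions aloud with espeak,
    writing the result to `wav_path`. Returns the command it built."""
    command = ["espeak", "-w", wav_path, " ".join(captions)]
    if run:
        subprocess.run(command, check=True)  # raises if espeak fails
    return command
```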

Nature

Live Footage

Mask R-CNN

Balloon

Mask R-CNN is open-source software similar to Darknet YOLO. It is slower and has fewer classes in its dataset, but it detects in much more detail. For example, it recognises a person, but it also detects all the pixels that belong to that person. That is why it is called Mask R-CNN. This way it can cut out movement, isolate it, duplicate it, etc.

I first tried the demo that comes with the software, and it worked! This demo can only detect balloons: it masks them and turns everything that isn't a balloon grayscale. I'm not sure yet whether this is the software I'm going to use, but I like it a lot.
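The balloon effect only needs the mask that Mask R-CNN outputs. The widely used Matterport implementation ships a balloon sample with its own `color_splash` function for this. Below is a NumPy sketch of the same idea. It assumes you already have a single boolean mask; for several detected instances, combine them first with `np.any(masks, axis=-1)`. The grey conversion uses the standard BT.601 luma weights, which is a choice of this sketch.

```python
import numpy as np


def colour_splash(image, mask):
    """Keep the masked pixels in colour and turn everything else grey.

    image: H x W x 3 uint8 RGB array
    mask:  H x W boolean array, True where the detected object is
    """
    # ITU-R BT.601 luma weights for a standard RGB -> grey conversion
    grey = image[..., :3] @ np.array([0.299, 0.587, 0.114])
    grey_rgb = np.repeat(grey[..., None], 3, axis=-1).round().astype(np.uint8)
    return np.where(mask[..., None], image, grey_rgb)
```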

source img

output img

Insights from Experimentation

import json
import os
import subprocess

# Render every Densecap caption as a transparent PNG with ImageMagick.
with open("results.json") as f:
    data = json.load(f)

os.makedirs("captions", exist_ok=True)  # create the output folder once

for result in data["results"]:
    for caption in result["captions"]:
        caption_no_space = caption.replace(" ", "_").replace("/", "_")
        # arguments as a list (no shell), so quotes in a caption can't break the command;
        # for a fixed height use "800x100" instead of "800x"
        command = ["convert", "-size", "800x", "-gravity", "Center",
                   "-pointsize", "72", "caption:" + caption,
                   "-transparent", "white",
                   os.path.join("captions", caption_no_space + ".png")]
        print(" ".join(command) + "\n")
        subprocess.call(command)

Artistic/Design Principles

Artistic/Design Proposal

Realised work

Final Conclusions

Research DOC

Assignment or self formulated project description

As a multidisciplinary designer I see this as an opportunity to create a work around a contemporary theme: computer vision. It is a popular and important theme, but it also feels brand new and ready for discovering new ways of seeing.

Central question

How does computer intelligence perceive and interpret images?

Subquestions

What's the difference between seeing with human eyes, and seeing with computer eyes?

> This question is about the fact that the computer can't read emotions in certain images. Take the 9/11 attack on the Twin Towers: as humans we all know what a terrible event it was, but a computer's way of seeing can't register the emotional impact of the image.

How can I use computer intelligence as a co-operator of a new narrative?

> The way a computer detects certain objects in images is sometimes very strange. We as humans can't see it, but this software does. How can I use this as a strength to create a story co-authored by me and A.I.?

How can a computer see new forms/interpretations in abstract artworks?

> When we look at art, we each have our own way of seeing. The computer has this power as well: it can crop fragments out of abstract paintings and give new meaning to those fragments. This creates new meanings and new ways of seeing.

How does computer intelligence observe the real world?

> Besides thinking about how the computer interprets images, it is also possible to let the computer perceive in real time. But when does this become interesting? When can you say something meaningful about it?

Set up of my research

My research is both visual and theoretical. I collect all my experiments, thoughts, code snippets and related articles on one wiki page, so I can work on the same project from different computers.

0 - orientation

Here I orient myself in computer vision. What is it exactly? What kinds of computer vision are out there? What do I think about computer vision, and what is it about it that I like so much?

1 - short experiments

I googled and read a lot about image recognition. The technique is not very new, but in recent years it has become quite accurate thanks to the huge supply of images. For every object there are thousands of images on the internet; you can even find an image of the smallest screw. This makes really good object-recognition predictions possible. These predictions are based on datasets that people created themselves, so there are restrictions, unless you create your own datasets.

Here I will mention artists, blogs, writers, makers and things that match the topic.

3 - My position as designer combined with computer intelligence

4 - subquestions

The moment of making a choice. I have three possible elaborations, which correspond to three of the subquestions I mentioned before:

What's the difference between seeing with human eyes, and seeing with computer eyes?

> This question is about the fact that the computer can't read emotions in certain images. Take the 9/11 attack on the Twin Towers: as humans we all know what a terrible event it was, with thousands of deaths. A computer's way of seeing, however, can't register the emotional impact of the image. That is where it becomes interesting to look at what the computer sees. It is not trained on the images we as humans see every day. Terrible events or leaked images shock us, but the computer will never see their shocking aspects the way we do. To work this out, I could find a blog, Twitter or Facebook page with a continuous stream of new 'emotional' content to use as input for the machine. That way I can show how the computer sees, and how unemotional it can be while watching these images. I can show this through imagery and audio (letting the computer tell us what it sees).

Can I use the way computer intelligence sees to form a new narrative?

> The way a computer detects certain objects in images is sometimes very strange. We as humans can't see it, but this software does. How can I use this as a strength to create a story made by me and A.I.? I could use a graphic novel as the medium: I create my own story using my own knowledge of storytelling, and use the content generated by A.I. to make the graphic novel.

How can a computer see new forms in abstract paintings?

> When we look at art, we each have our own way of seeing. The computer has this power as well: it can crop fragments out of abstract paintings and give new meaning to a painting. This creates new meaning in paintings.

5 - elaborations

6 - technical research

Here I will mention and link to all my technical sources: a list of code snippets I use to automate my work and build a workspace for testing whether the output is interesting. These technical resources are all open source and free to use, so combining and tweaking different software makes the result my own. Of course I will mention every source I use, to give a full overview of how I worked.

Set up of your practice project

This goes along with my research. The research is in fact also the practice project, because while making things and learning new techniques I see possible outcomes.

1 - The chosen sub question

This subquestion will lead me to an end result. Of course I have already thought about an outcome. I will probably need a projector or a big screen to show the end results. Alternatively, it could become a kind of experimentation room where you can see all my output images.

Role of your external partner

I am in contact with two possible external partners. The first is Florian Cramer, a researcher working in the field of art and technology. The second could be RNDR studio. I contacted them to talk about my project and they were curious about what it could become. They also experiment with computer vision but don't use it in projects yet. Their role in my project is that of a discussion partner: to check whether this has the potential to be meaningful in the world, and whether it is understandable for the public.

Economic aspects

Station you choose to work in

Interaction Station