UNRVL9-Project1

Tuesday 13/10/2020

References

— https://en.wikipedia.org/wiki/Jeremiah_Denton

— https://learn.adafruit.com/chatty-light-up-cpx-mask

— https://learn.adafruit.com/anatomical-3d-printed-beating-heart-with-makecode

— https://learn.adafruit.com/sound-activated-shark-mask

Alternative Greetings

We talked about the scale of greetings from intimate to formal and how to represent it. Greetings can be awkward if you don't share the same expectations. A conversation about the greeting habits of your new lover's family can be useful to avoid very awkward semi-handshake-kiss-hug situations.

How will greetings evolve after Corona? Will they fade? Will we greet more extremely because we missed it so much, hugging as much as possible to compensate for the touchless times? Or maybe we'll act more aggressively and bump heads instead of kissing.

ZOOM GREETINGS

Wiping the camera to show respect. How many times you wipe it and what you wipe it with indicate levels of respect. You can customize your wipe pattern, just like a secret handshake with a friend.

Bowing your camera shows respect, because the other person can see whether you made the effort to put on pants to meet them.

PERSONAL GREETINGS

Using the eyes and eyebrows, because you can't touch others or see their mouths. An eyebrow raise could indicate a smile or a hello; the number of raises indicates the position on the scale from intimate to formal.

The ultimate sign of respect could be to close your eyes and raise your eyebrows to show you really trust someone, for example a grandparent.

INVOLVING THE CIRCUIT PLAYGROUND

How can we translate a scale of intimacy into color, speed, pattern, or the tonal value of sound when greeting another person?

The digital version of our wipe-your-camera idea would be wiping the circuit instead of your camera. It would light up in warmer or colder tones to visualize different levels of intimacy. A blue wave would be a formal greeting, such as a handshake; a red wave would be a tight hug.
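
The blue-to-red idea above can be sketched as a simple color blend. This is a minimal sketch, assuming intimacy is expressed as a number from 0.0 (formal, a handshake) to 1.0 (intimate, a tight hug) and that colors are RGB tuples of the kind the Circuit Playground's NeoPixels accept. The linear blend and the names `lerp` and `intimacy_to_color` are our own illustrative choices, not something we built.

```python
BLUE = (0, 0, 255)  # assumed color for a formal greeting (handshake)
RED = (255, 0, 0)   # assumed color for an intimate greeting (tight hug)


def lerp(a: int, b: int, t: float) -> int:
    """Linearly interpolate between two 0-255 channel values."""
    return round(a + (b - a) * t)


def intimacy_to_color(level: float) -> tuple:
    """Map an intimacy level in [0, 1] to an RGB color between BLUE and RED."""
    t = min(max(level, 0.0), 1.0)  # clamp out-of-range input
    return tuple(lerp(c0, c1, t) for c0, c1 in zip(BLUE, RED))


if __name__ == "__main__":
    for level in (0.0, 0.5, 1.0):
        print(level, intimacy_to_color(level))
```

On the board, the resulting tuple could be passed to the pixel ring (for example `cp.pixels.fill(color)` with the `adafruit_circuitplayground` library) so the whole circle lights up in one tone.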

Questions

How can we show non-verbal types of conversation without being present?

- 1. What is beyond the frame? Can we experiment with the viewport of our camera?

- You only see one square cut out of someone's environment when you're zooming. A glimpse of their surroundings is shown when they switch places. This is nice.

- Could we use a servo motor? Or Processing?

- 2. What is the energy in the room?

- 3. How can we get a better sense of spatial location?

- 4. What is the output?

- 5. Can we transfer sight into something more abstract?

Wednesday 14/10/2020

References

— https://www.pretotyping.org/

— https://lauren-mccarthy.com/us

Thoughts

On Wednesday we talked about the different types of greetings, then eventually moved on to body language and the fact that you can't read it through a video screen. We decided to use motion detection under the table to show movement. That data would relate to emotions: shaking your foot violently, for example, indicates impatience. These movements would be translated into colors ranging from warm to cool, depending on what the movement indicated. We would also use Processing to create a shape that changes from soft to sharp depending on the mood the sensor conveys.

As an experiment, we filmed parts of ourselves that are not visible during a Zoom call.
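
The under-the-table motion detection idea could work roughly like this. It is a sketch under several assumptions: the sensor delivers (x, y, z) accelerometer readings in m/s², movement intensity is the standard deviation of the acceleration magnitude over a short window, and calm movement maps to a cool color and a soft shape while agitated movement (such as a shaking foot) maps to a warm color and a sharp shape. `MAX_STD` is a hypothetical calibration constant and the function names are illustrative.

```python
import math
import statistics

MAX_STD = 5.0  # hypothetical calibration: spread (m/s^2) treated as maximum agitation


def magnitude(sample):
    """Length of one (x, y, z) acceleration vector."""
    x, y, z = sample
    return math.sqrt(x * x + y * y + z * z)


def movement_intensity(samples):
    """Return a 0..1 intensity for a window of (x, y, z) accelerometer samples."""
    mags = [magnitude(s) for s in samples]
    if len(mags) < 2:
        return 0.0  # not enough data to measure any variation
    spread = statistics.pstdev(mags)  # still foot: ~0, shaking foot: large
    return min(spread / MAX_STD, 1.0)


def mood_visuals(intensity):
    """Map intensity to (RGB color, sharpness): cool/soft when calm, warm/sharp when agitated."""
    red = round(255 * intensity)
    blue = round(255 * (1 - intensity))
    return (red, 0, blue), intensity
```

Using the spread of the magnitude rather than the magnitude itself means the constant pull of gravity drops out, so a foot resting still reads as zero intensity.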

Question

How are we going to detect body language?

- 1. Different wearables attached to different body parts (foot, hand, head?) can give us input.

Thursday 15/10/2020

References and sources

— https://en.wikipedia.org/wiki/Synesthesia

— http://soundbible.com/tags-water.html

Updating our concept

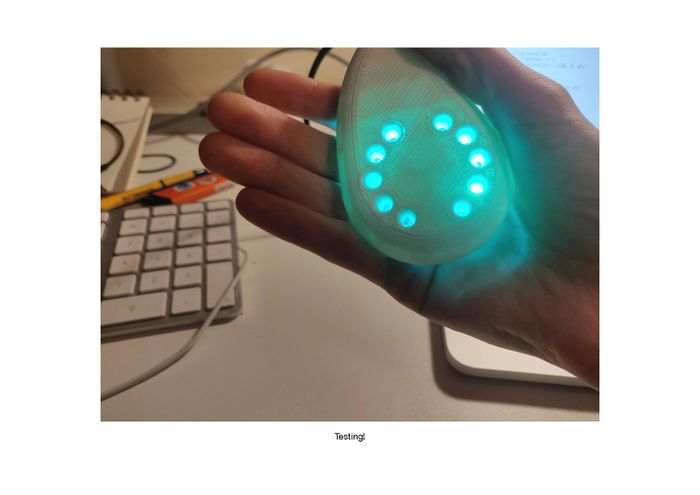

We refined our idea. Our concept focuses on the level of attention in the context of a meeting. It is mainly useful for meetings where one person speaks and several others listen. The tool we're making helps the speaker feel more confident about presenting by detecting the body language of interested listeners, and it helps them notice when someone is fading away during a presentation. For listeners, it is a tool that makes them aware of their own level of interest during a lecture or workshop.

Work Marathon

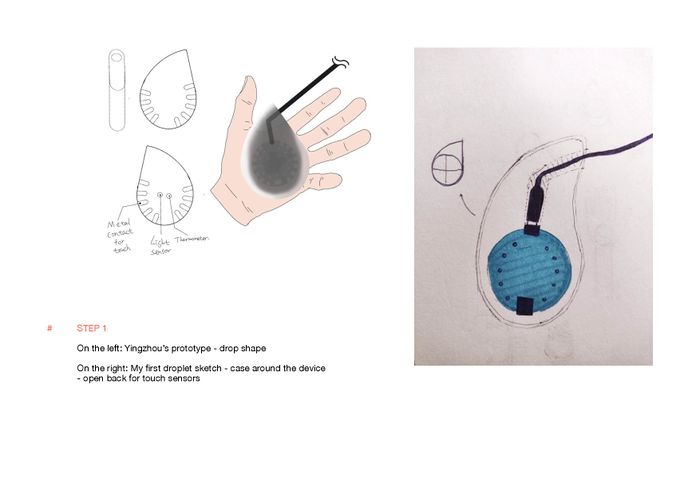

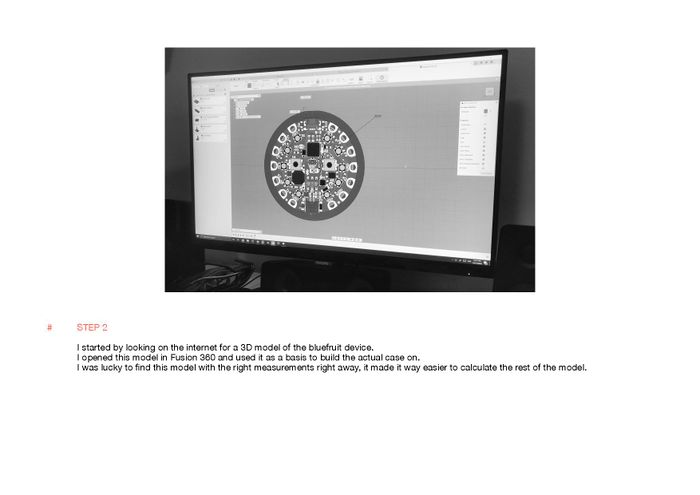

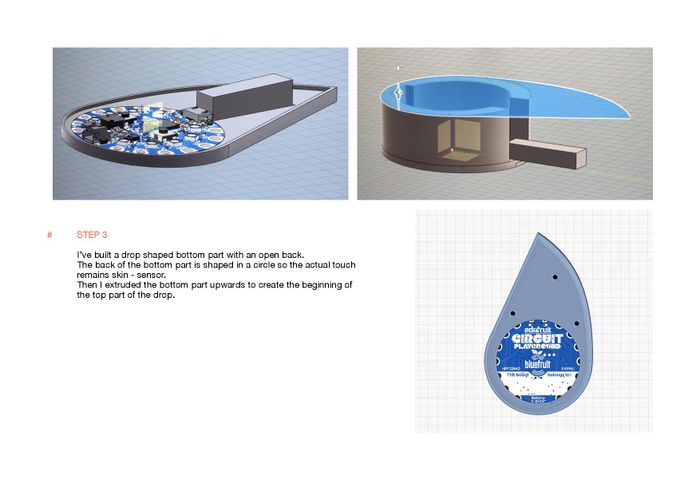

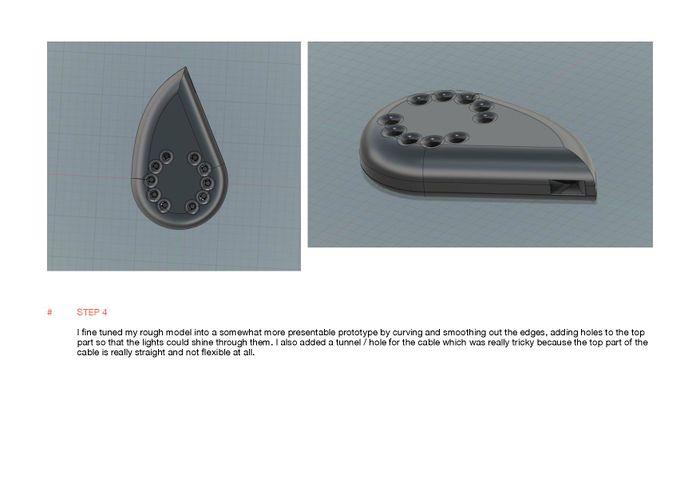

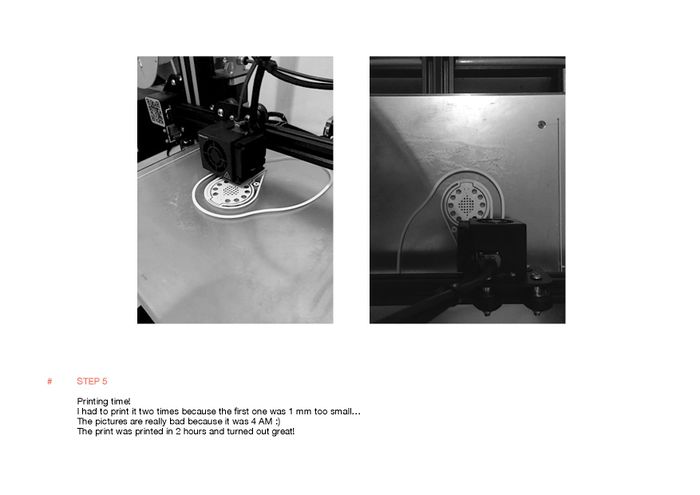

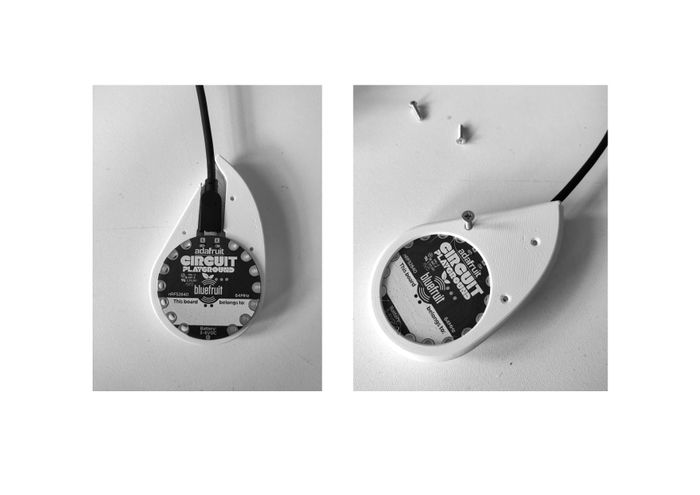

The Drop (3D-model)

Anna Beirinckx & Yingzhou Chen

Coding

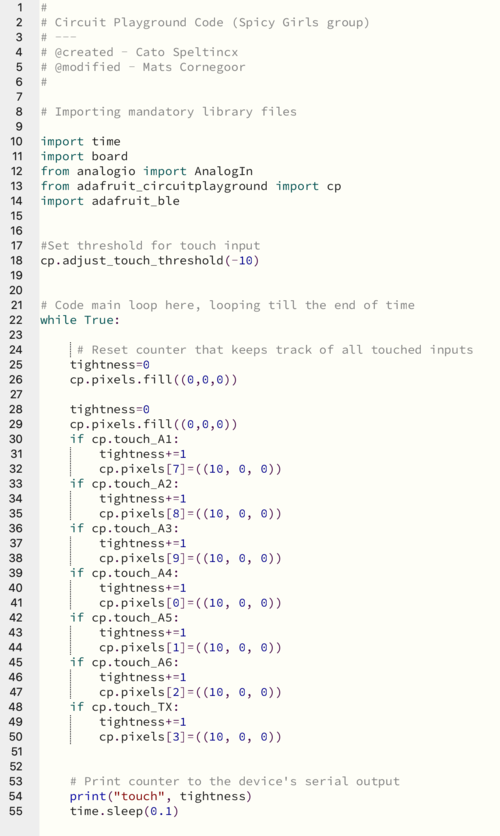

Mats Cornegoor & Cato Speltincx (Python)

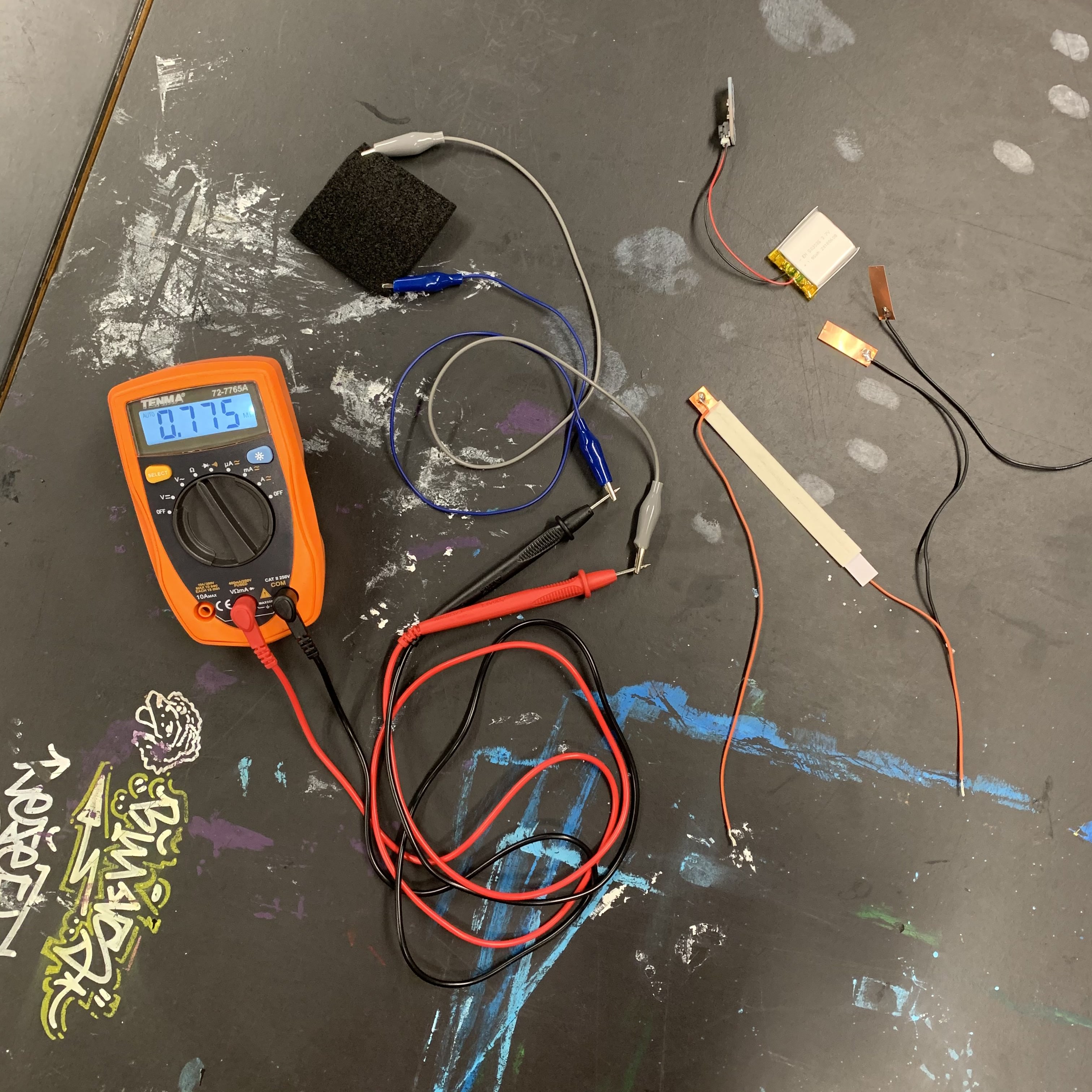

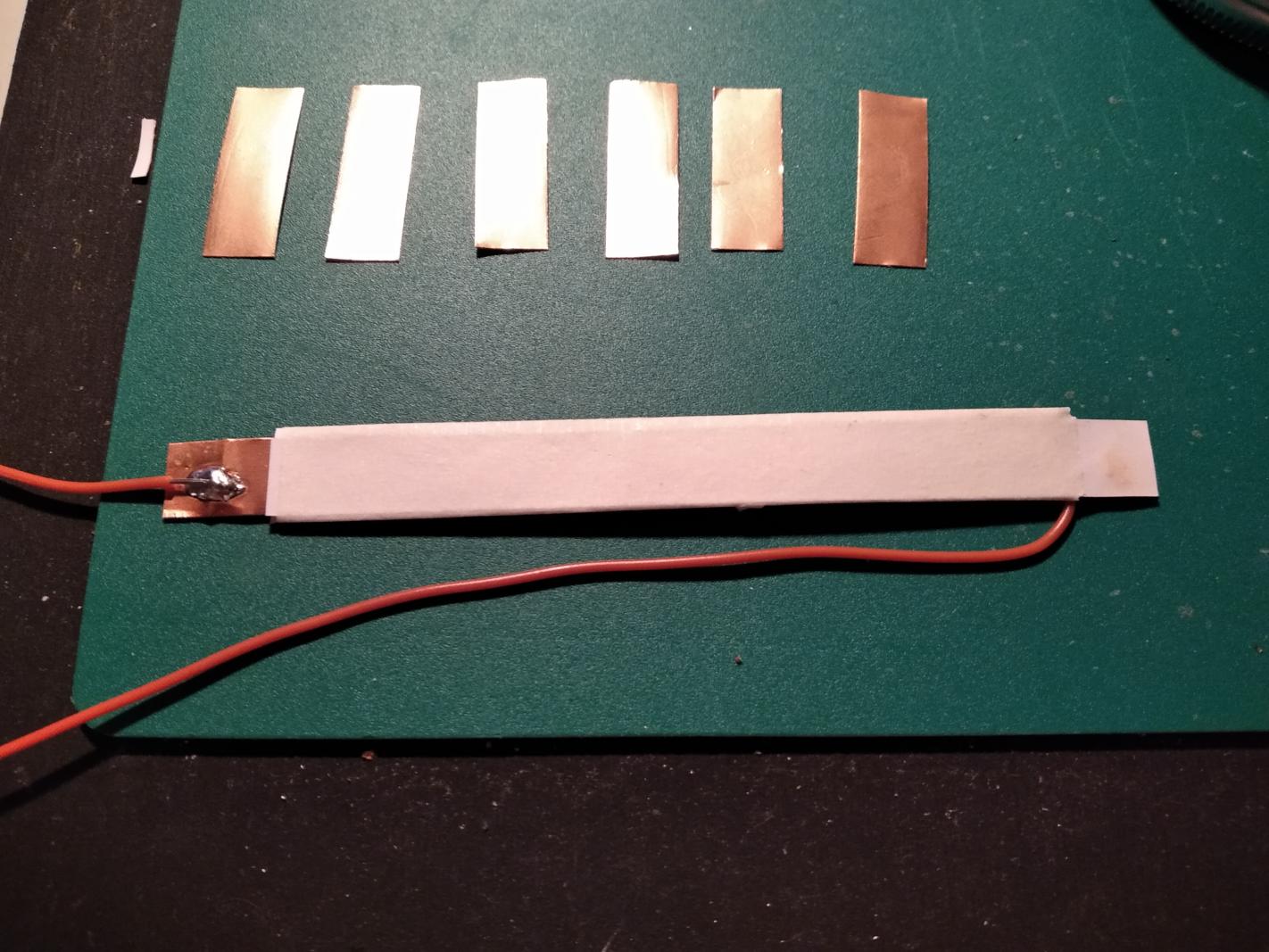

For the technical, interactive part of the project we tinkered with different types of sensors (apart from the touch sensors that are already on the Circuit Playground board). We wanted to find out whether the intensity of the grip could also be detected by some form of pressure sensor. Our sensor materials consisted of "resistive foam" and adhesive copper. Applying pressure to either the resistive foam or the copper-paper pressure sensor changes its resistance, which gives a corresponding input value.
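
The pressure sensors described above behave like variable resistors. A common way to read one is a voltage divider: the sensor in series with a fixed resistor, with the midpoint wired to an analog pin. The sketch below converts a raw analog reading back into the sensor's resistance. It assumes the sensor sits on the 3.3 V side, a 10 kΩ fixed resistor sits on the ground side, and readings span 0 to 65535 (the range CircuitPython's `analogio.AnalogIn.value` reports); these values are illustrative, not measured from our prototype.

```python
ADC_MAX = 65535     # full-scale value of CircuitPython's AnalogIn.value (16-bit)
R_FIXED = 10_000.0  # assumed fixed resistor in ohms, between the analog pin and GND


def sensor_resistance(raw: int) -> float:
    """Convert a raw voltage-divider reading into the pressure sensor's resistance (ohms).

    Assumed wiring: 3.3 V -> sensor -> analog pin -> R_FIXED -> GND, so
    raw / ADC_MAX = R_FIXED / (R_sensor + R_FIXED). The supply voltage cancels out.
    """
    if raw <= 0:
        return float("inf")  # no current flows: the sensor is effectively open
    return R_FIXED * (ADC_MAX / raw - 1)


if __name__ == "__main__":
    for raw in (16384, 32768, 60000):
        print(raw, round(sensor_resistance(raw)), "ohm")
```

With a material that becomes less resistive when squeezed, a harder press pushes `raw` toward `ADC_MAX` and the computed resistance toward zero.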

During prototyping, Cato found that only the capacitive touch sensors provided enough data, because of how sensitive the touch inputs are. The pads only have to be held with normal force to register a positive value, as opposed to the unreasonable amount of force the resistive materials we tinkered with required.

The code on the Circuit Playground communicates with Processing over USB serial. It detects the user's grip on the device by counting how many touch points are being held with a proper grip. Processing then translates this number visually into a level of attention by altering the height of the wave.
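
A minimal sketch of the pipeline described above, written in plain Python so it runs without the board: counting touched pads, sending the count as one line of text over serial, and mapping the count to a wave height on the receiving side. On the real board the booleans would come from the touch pads (for example the `adafruit_circuitplayground` library's `cp.touch_A1` to `cp.touch_A7` properties), and the Processing sketch would do the mapping. The line format `grip:<n>`, the assumption that a firmer grip means a higher wave, and the 20 to 200 pixel height range are all hypothetical choices for illustration.

```python
NUM_PADS = 7        # Circuit Playground Express touch pads A1 to A7
MIN_HEIGHT = 20.0   # hypothetical wave height (pixels) when no pad is held
MAX_HEIGHT = 200.0  # hypothetical wave height when every pad is held


def count_touched(pads):
    """Board side: count how many pads register a touch (True values)."""
    return sum(1 for touched in pads if touched)


def encode_grip(count):
    """Board side: format the count as one newline-terminated serial line."""
    return f"grip:{count}\n".encode("ascii")


def decode_grip(line):
    """Receiver side: parse a serial line back into a count, or None if malformed."""
    text = line.decode("ascii", errors="ignore").strip()
    if not text.startswith("grip:"):
        return None
    try:
        return int(text[len("grip:"):])
    except ValueError:
        return None


def wave_height(count):
    """Receiver side: linearly map a grip count (0..NUM_PADS) to a wave height."""
    count = min(max(count, 0), NUM_PADS)
    return MIN_HEIGHT + (MAX_HEIGHT - MIN_HEIGHT) * count / NUM_PADS


if __name__ == "__main__":
    pads = [True, True, False, True, False, False, False]  # simulated touch readings
    line = encode_grip(count_touched(pads))
    print(line, wave_height(decode_grip(line)))
```

Sending a short, newline-terminated text line keeps the stream easy to inspect in a serial monitor, and on the Processing side `Serial.bufferUntil('\n')` together with `readStringUntil('\n')` can split the stream into one reading per line.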

Visualisation

Yingzhou Chen, Soo Seng & Ana Tobin