User:Emielgilijamse

Digital Craft: Practice Q9 "How to be human"

Contents

- 1 Project 1 "On the body"

- 2 Project 2 "Sensitivity Training"

- 3 Project 3 "Mind (of) the machine"

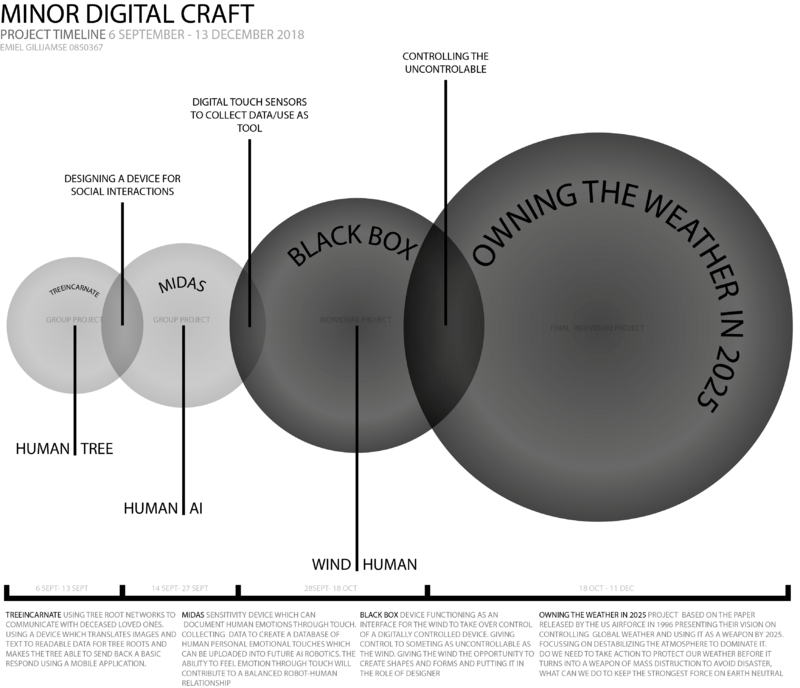

- 4 Digital Craft: Minor Cybernetics

- 5 Project 2 _ Cybernetic Prosthetics

- 6 Project 3_ Wind Wacom

- 7 Digital Craft Essay My Craft

- 8 Visual Mapping

- 9 Timeline Minor Projects

- 10 Final Research Document

- 11 Project 3

Project 1 "On the body"

Exploring bespoke technologies as remediation, adaptations or extensions of the human body

Introduction

'What I wanted to achieve was to find a relevant example of a traditional technique using a modeling method as used in modeling software.'

At this point I am especially interested in the "craft" in "digital craft". I decided to interpret the human body as a toolkit, focusing on the finger, which gives us the ability to create forms and shape objects in traditional crafts. Looking back in history, the hand and its fingers were among the first and most essential tools used by man to shape and form, especially in ceramics and sculpture, where our hands and fingers are still essential. But in our digital age we have defined different ways of creating, inspired by our manual methods, but different in possibilities and approach.

In traditional ways of shaping materials like clay, direct contact with the material is inevitable, whereas the modern modeling process takes place in a digital environment. Software like Meshmixer and Sculptris gives you the possibility to pull and push an unlimited amount of mass, giving you endless possibilities which are practically impossible when shaping by hand. I wanted to design and create an extension of the human finger, combining traditional ways of shaping with the modern approach to modeling seen in free-form modeling software.

Research

Using technology to alter human capabilities.

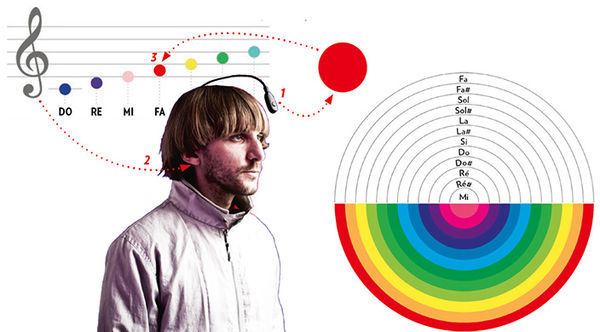

After reading the article 'How Humans Are Shaping Our Own Evolution' (by D.T. Max, National Geographic, April 2017 issue), I became acquainted with the existence of Neil Harbisson. He is known as one of the most famous living human cyborgs of our time. He has a rare condition called achromatopsia; he cannot perceive color. With the help of science he now uses a device attached to the back of his head. Not by sight but through vibrations on the back of his skull he can identify colors. Using technology not only gave him the ability to identify his surroundings, he also gained an extra sense that other human beings do not have. He is able to do something others cannot, because of technology.

The example of Neil Harbisson is an interesting way of showing the value of using technology to extend our possibilities: adding devices to our body that let us do things we could not do before. The focus for this project will be on extending the human ability to shape form using the finger as a tool.

The way Harbisson is helped by technology is a rather extreme example. We can use technology, low-tech or high-tech, to increase our capabilities. I want to design and make a device which can be added to and removed from the human body. The device will serve only one single purpose. Focusing on the human finger as a tool, I will use simple technology to change the way it is used. Can I mimic the way of shaping in a digital environment, to combine a new way of shaping with a traditional craft?

To find a way to make the project physical, I needed relevant examples. Inspired by the method designer Jolan van der Wiel uses to shape his collection of "Gravity" stools, I decided to try this technique myself.

Getting my hands on a magnet was not really the challenge. Finding the right material to shape with one took some effort.

I started with metal sawdust and wood glue as a binder. The first results were promising, but the material was unable to keep its shape after the magnet was removed. I needed a firmer base material, like clay, to get a better result. I found a non-drying substitute for clay, plasticine, which I found interesting to work with. Because of its ingredients, chalk and petroleum, I could create a firm base and change its density using petroleum-based fluids (lamp oil). The denser variant could be moulded into a basic form to shape; the more fluid one could be pulled up from a flat surface.

Result

I was hoping I would be able to shape more freely with the substance I had created, pulling and shaping the material in a new way. Although I was searching for more freedom of form, it turned out to be very limited. It did not matter what the density of the substance was; the maximum amount of material that could be pulled out was around 10 mm. Not enough for what I had in mind.

Only then did I realize I was focusing on the substance more than on the object itself. I had to turn this around and leave the substance for what it was. A shame for sure, but engineering it into something useful would have taken more time. So I decided to create three different versions of the original tool, with different strengths. I wanted to change a few things about the object. The design would stay practically the same, but I wanted to involve the user. Let the user decide what the function is and what it can be used for. But to encourage a random user to put on the tool and play around with it, it needs to be visually attractive, more like jewellery. That is why I decided to use a coating which draws attention and curiosity, so the user would be triggered to experiment and decide what the most relevant function of this tool is. That could be decoration, moving and attracting certain materials, or a tool to increase attention, experimentation and inquisitiveness.

Project 2 "Sensitivity Training"

Understanding and learning to work with sensors

Teammates: Sjoerd and Pepijn

Introduction

After a brief introduction to a selection of commonly used sensors, we had to choose one for this project. We chose the heat sensor. Our task was to connect sensors with human perception, to make a combination of man and machine. This project could basically be divided in two: a movie and an object. These two parts needed to be connected in some way. We almost immediately decided to use a common heat sensor, a device which gives us the temperature in numbers, and to use the human heat sensor, triggered by feeling and pain stimuli.

Research

Film

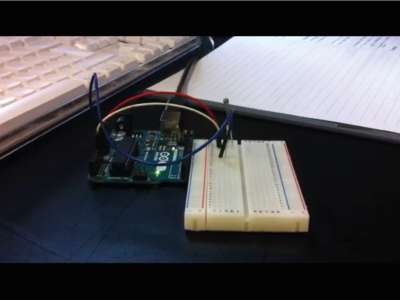

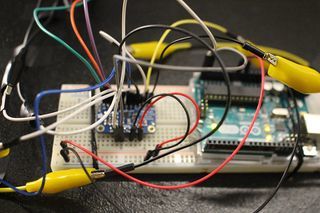

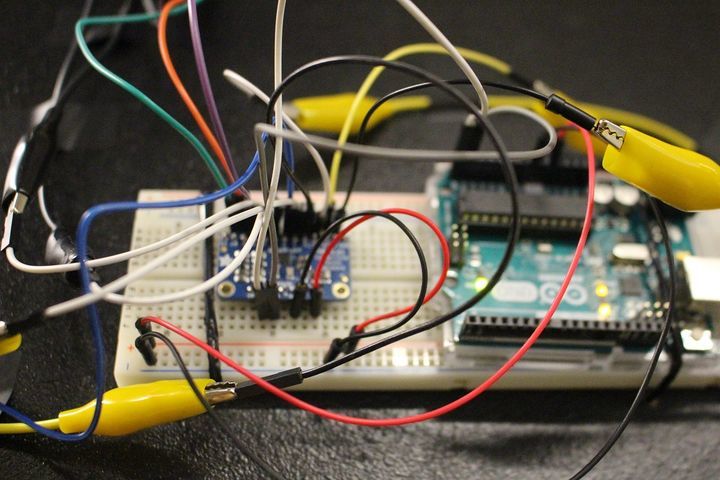

To get a full understanding of how the sensor device that we wanted to use works, we decided to make our own setup. For the film we used the Arduino UNO and a heat sensor to measure the temperature. The setup can be seen below.

We wanted to make three different films, each lasting 10 seconds. These short films give the viewer information about the temperature in different ways. The first gives values in numbers in three different categories: a random value, the temperature in degrees Celsius and the temperature in degrees Fahrenheit. We used a scale from -15 to 68 degrees Celsius, because below -15 and above 68 degrees Celsius the human cell structure falls apart. This gives us fluctuating numbers representing the three chosen categories. These values are mixed together randomly, so it is hard to tell what each value means.

(film01) http://digitalcraft.wdka.nl/wiki/User:Sjoerdlegue#Video_1
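The readout of the first film can be sketched roughly as follows. This is a minimal Python illustration, not the Arduino code used for the film: the function names, the range of the decoy random value and the rounding are assumptions made for the example.

```python
import random

# Scale stated in the text: -15 to 68 degrees Celsius.
T_MIN_C, T_MAX_C = -15.0, 68.0


def celsius_to_fahrenheit(temp_c):
    """Standard conversion: F = C * 9/5 + 32."""
    return temp_c * 9.0 / 5.0 + 32.0


def readout(temp_c, rng):
    """Return three unlabeled numbers in random order.

    The three categories are a decoy random value, the temperature in
    Celsius and the temperature in Fahrenheit. Drawing the decoy from
    the same scale as the Celsius value is an assumption.
    """
    clamped = max(T_MIN_C, min(T_MAX_C, temp_c))
    values = [
        round(rng.uniform(T_MIN_C, T_MAX_C), 1),      # decoy value
        round(clamped, 1),                            # degrees Celsius
        round(celsius_to_fahrenheit(clamped), 1),     # degrees Fahrenheit
    ]
    rng.shuffle(values)  # hide which number belongs to which category
    return values
```

Calling `readout(20.0, random.Random())` once per frame would give a fluctuating triple of numbers in which the viewer cannot tell the categories apart.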

The second movie would provide more information about how we measured the temperature and what the values in the previous movie meant. We used the setup as shown above, but added another feature. We decided a different visual reference would be interesting. We connected the values given by the sensor to a screen which translated these into grey values. We used the same scale as for the numbers, only this time we needed colour codes as well, so we combined it with RGB colour values. Our -15 degrees Celsius was set to 0, and 68 degrees Celsius to 255. With the information we received, we were able to let the grey value change with the temperature given by the heat sensor.

(film02) http://digitalcraft.wdka.nl/wiki/User:Sjoerdlegue#Video_2
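The translation from temperature to grey value described above is a linear mapping. A minimal Python sketch of it, assuming readings outside the scale are clamped to the end points (the function names are hypothetical and not taken from our setup):

```python
def temp_to_grey(temp_c, t_min=-15.0, t_max=68.0):
    """Map a temperature in degrees Celsius linearly onto 0..255.

    -15 degrees maps to 0 (black) and 68 degrees maps to 255 (white).
    Readings outside the scale are clamped, which is an assumption.
    """
    clamped = max(t_min, min(t_max, temp_c))
    fraction = (clamped - t_min) / (t_max - t_min)
    return round(fraction * 255)


def grey_to_rgb(grey):
    """A grey value uses the same number for red, green and blue."""
    return (grey, grey, grey)
```

For example, `grey_to_rgb(temp_to_grey(68.0))` gives `(255, 255, 255)`, a white screen, and any reading below -15 degrees gives a black one.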

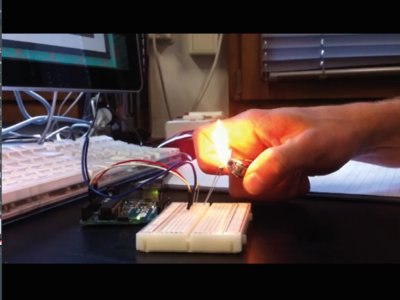

The final film needed interaction with the human senses. We used the same setup as for the second film, only this time we wanted to combine the digital sensor with the human sensor. We wanted to use a flame to see how long it would take until the human sensor would tell the body to pull the hand away from the flame, and to use the changing colours to see if they could affect the human sensor. Would a temperature of, let's say, 50 degrees Celsius 'feel' different when looking at a white background than when looking at a dark grey background?

(film03) http://digitalcraft.wdka.nl/wiki/User:Sjoerdlegue#Video_3

We were confident we had an interesting idea for making an object inspired by the film. But soon we discovered there was room for improvement. Our final film, which we thought had the most potential for the second part of the project, seemed to be a bit too cruel. Using pain in a project raises a lot of questions. Why and how, and more importantly what we wanted to achieve, were too vague. It also seemed our second film was the strongest one. So this film became the base of our object/installation.

Installation

Our search for an interesting second part of this project started with the idea to make our own infrared camera. We hacked an old webcam, following a DIY tutorial on YouTube. Not long after that we discovered that the result was not interesting enough, although we had it in working condition. The setup we had can be seen below.

For the installation we went looking for another kind of heat sensor, one we could shape ourselves. We came across thermal filament for the 3D printer, which reacts to heat sources like body heat and sunlight. Before thinking about the shape we wanted to use, we started by experimenting with the material itself.

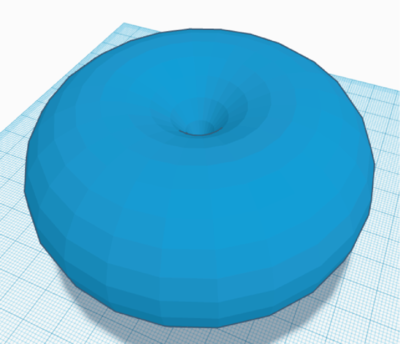

During the experiments we tried to find the best way of using this material for our object. The fact that the material becomes slightly translucent could be used to make an image or text appear and disappear. Trying some samples and showing them to others showed that the material itself is already interesting. We found out that people were curious about what the material would do when heated with their hands. We wanted to keep the mysterious property of this material more or less secret. We wanted to invite the user to touch the object and see what would happen. What we needed to encourage people to hold the object was an attractive shape, both strange and alien, and natural and friendly. The magnetic energy field that surrounds us human beings has the shape of a torus. This "fat donut" appeared to be an interesting shape for our object.

The printed object had to be placed on some sort of tripod to make it easier for people to touch. The whole installation should invite people to participate and interact with the object. That is also the reason why we chose to add a poster which gives visual information about the project. The title 'energy flows were the attention goes' should make the user comfortable touching the object and figuring out what it does. This installation basically registers the influence of your body heat for a short amount of time; it functions as a heat sensor.

Result

Project 3 "Mind (of) the machine"

Reconstruct images using the pixel enhancer algorithm

Teammate: Noemi Biró

Introduction

Boris Smeenk and Arthur Boer used an algorithm to reconstruct images taken from a dataset collected from different sources on the internet. This algorithm processes a large number of images and tries to understand their shapes and colours. It then creates new shapes based on the data it has been given, basically combining the different content in a way it thinks is logical. We needed a large amount of data containing images, the so-called dataset, to run through the algorithm, giving us an insight into how it thinks and what it gives us back.

Datasets

Inspired by this algorithm, we wanted to explore its possibilities by giving it two very different datasets. We first chose to run a dataset which contains a large number of relatively similar images: similar in image size, content and quality, a 'clean' dataset. We came across an Oxford University dataset which contained 8000 images of flowers. The purpose of this dataset was scientific, so we knew it would not be polluted by images which were out of place.

Flowers

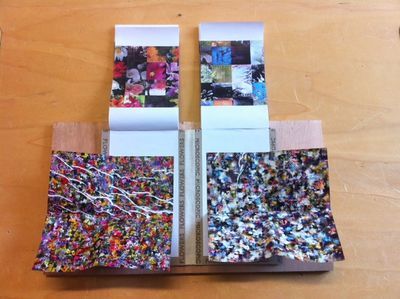

We gave the algorithm 8000 flower images. This is what it gave us back.

The image on the left shows all the flowers combined in a small number of frames. The original content from the dataset is still very clear. All the different images show the shapes, textures and colours of the original images, though none of the flowers exist; they are all shaped by the algorithm. The image on the right shows another ability of the algorithm: combining all generated images into one large blended image. In this image, too, the original shapes can be seen clearly.

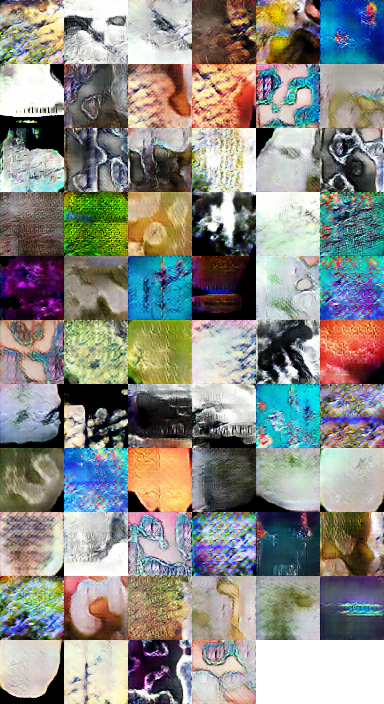

Microscopic

The first dataset gave us more or less what we had expected, given the 'clean' set we had fed the algorithm. For the second one we wanted to try the opposite. We wanted to run a 'dirty' dataset: an uncontrolled set in which all sorts of different images can be found. The only thing the images had in common would be the search term. We chose 'microscopic' because most of the microscopic images we know are already abstract and undefinable. Besides this, we generated this dataset from Instagram. So instead of a scientific dataset, we now used a public dataset. This set had fewer images than the first, which makes it even harder for the algorithm to understand what the content is about, and how it should interpret and combine it to recreate a set of images.

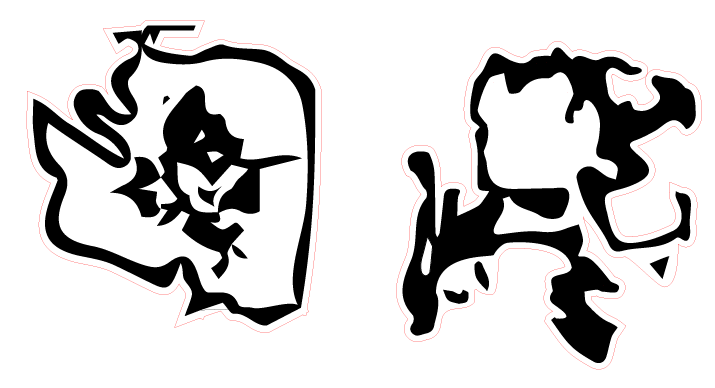

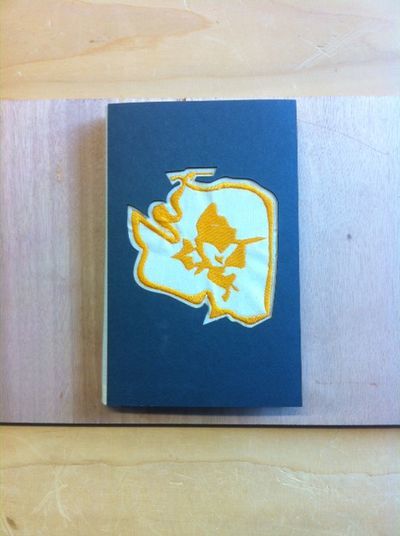

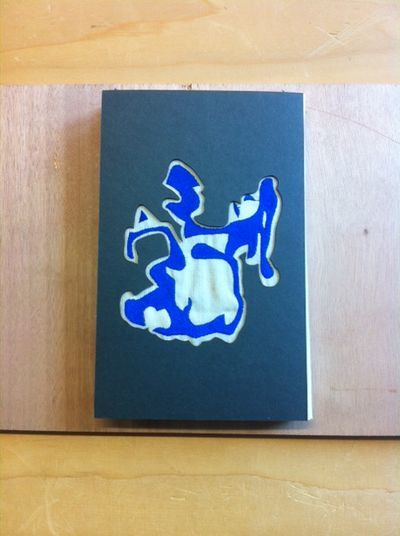

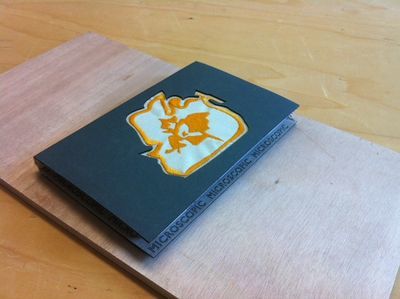

Booklet

The fact that we worked with two datasets gave us the idea to split the book in half, meaning we would present the two separate results as individual booklets sharing the same book cover. We also decided we wanted to do something extra with the results the machine gave us. It gave us images, which can be converted to vectors and then be laser cut or fed to the embroidery machine. We decided to show these possibilities on the booklet's cover.

The use of different colours also had to make it clearer which dataset belonged to which cover. The blue and yellow threads made it clear that you were dealing with two different sets, presented in a way that lets you compare the two and see how the machine deals with the differences between them.

Digital Craft: Minor Cybernetics

Introduction

As a craftsman and future product designer, I am interested in production methods and material experimentation. The making part within a project is crucial, but meaningless without a strong concept and story to back it up. My main goal during this Product Design study is to strengthen my conceptual thinking and develop my storytelling abilities. I am under the impression that my making skills are at a sufficient level to express myself in a physical way. I know that this is not always the case for the idea behind the making.

The desire to develop myself as a designer and maker made me choose this minor, Digital Craft. Rethinking old crafts, exploring the new. Using the knowledge of the past and trying to merge it with the possibilities of today's production methods. Focusing not only on the physical outcome, but also on the impact of how we make and consume, in a political and social way.

I am interested in the future-thinking part of this minor, with the focus on the relationship between man/machine and nature, within the field of production and makership. I look at nature as the most efficient workshop (circular, solar powered, etc.) and at the aspects of this wonderful workshop that I as a designer can learn from and use in new production methods or new materials. I want to place myself in new, challenging situations, such as the posthuman era, to break free from the boundaries of the present, rethink the future and place it back in the here and now, aiming to create something of value in both conceptual and physical form.

Project 1 Critical Making exercise

Reimagine an existing technology or platform using the provided sets of cards. In the first lesson of this project, our group used the cards provided by Shailoh to choose a theme, method and presentation technique. Picking the cards randomly gave us a combination of cards that spelled 'Make an object designed for a tree to use Youtube Comments and use the format of a company and a business model to present your idea'.

Group project realised by Sjoerd Legue, Tom Schouw, Emiel Gilijamse, Manou, Iris and Noemi Biro

Research

We used these randomly picked cards to set up our project and brainstorm about the potential theme. Almost immediately several ideas popped up in our minds. The one that became the base of our concept was the writing of Peter Wohlleben, a German forester who is interested in the scientific side of how trees communicate with each other. The book he wrote, The Hidden Life of Trees, became our main source of information. Basically, what Wohlleben is researching is the connection between trees that lets them trade nutrients and minerals so that their fellow trees, mostly family, can survive. For example, older, bigger trees send nutrients down to the smaller trees closer to the ground, which have less ability to generate energy through photosynthesis.

Examples

Trees use their root network to send and receive nutrients, but not completely by themselves. The communication system, also known as the 'Wood Wide Web', is a symbiosis of the trees' root network and the mycelium networks that grow between the roots, connecting one tree to another. This mycelium network, known as a mycorrhizal network, is responsible for the successful communication between trees.

Other scientists are also working on this subject. Suzanne Simard from Canada is researching the communicative networks in forests. She is mapping the communication taking place within natural forests, demonstrating the nurturing abilities of trees that work together to create a sustainable living environment: a network where the so-called 'mother trees' take extra care of their offspring, but also of other species, by sharing their nutrients with those in need.

Artists, scientists and designers are also intrigued by this phenomenon. For example, Barbara Mazzolai from the University in Pisa has had her work published in the book 'Biomimicry for Designers' by Veronika Kapsali. She developed a robot inspired by the communicative abilities of trees, mimicking their movements in the search for nutrition in the soil.

Bios pot

The idea of presenting this project within a business model introduced us to the company Bios, a California-based company which produces a biodegradable urn that can become a tree after the urn is planted in the soil. We wanted to use this concept and embed it in our project. The promotional video could provide us with interesting video material for our own video. Besides the material, we were inspired by the application that was part of their product.

Concept

At first we wanted to give the tree a voice by giving it the ability to post likes using its YouTube account, where input and output would take place in the same root network. But is a tree able to receive information as we can? And if so, what will it do with it? We wanted to stay in touch with the scientific evidence of the talking trees and decided to focus on another application within the field of human-tree communication.

Another desire of our team was to present a consumer product, taking the role of a company trying to sell our product on the global market. After researching other products concerning plants and trees, we found the biodegradable Bios urn, an urn which is used as a pot to plant a tree or plant, and which can later be buried in the ground somewhere. This product inspired us to use it as the example for the physical part of our project.

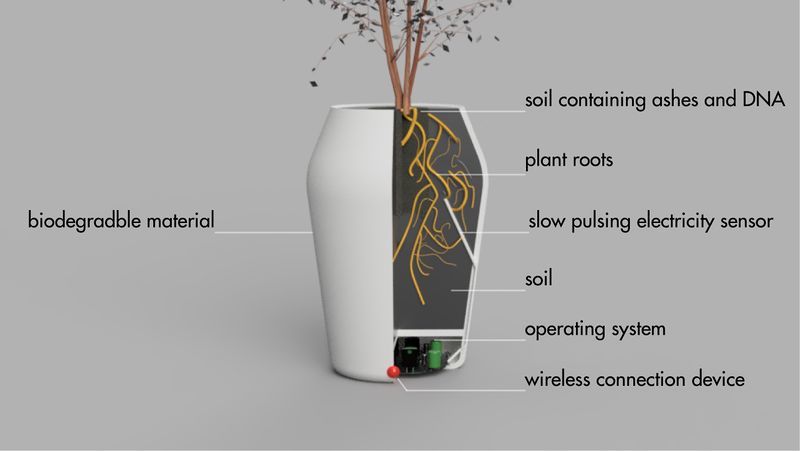

We thought about a new funeral method using our own vase, the Treeincarnate urn, to collect the ashes of a deceased person, in which they can be buried in the soil. Before the vase is buried, the seed of a tree is planted together with the ashes and a tiny bit of the deceased person's DNA. Over time the tree will consume the remains and will show characteristics belonging to the deceased person. The tree and the deceased human will share their DNA and become one. Through its root system, the tree, together with the human part, can communicate with the world of the living by releasing nutrients, just like in nature, but now with the intervention of the human part.

We wanted to construct a smart vase which had the technical ability to sense the chemical secretion from the roots and convert it to a positive or negative output. The input would also take place through the built-in sensors in the vase, using a wireless internet connection. An important part of the vase would be its accompanying application, in which the user can share data with the tree and will receive a reply from the tree.

The online app comes with the vase and makes sure the relative of the deceased person can stay in touch with the deceased, only now in the form of a tree. Although time is experienced differently by human and tree, the tree should be able to react to the data the relative sends within 33 days. The communication will stay slow, but you will never have to say goodbye to your loved ones.

Process

Result

As the final result, we gave a business to business presentation consisting of a visual presentation together with a physical prototype. The presentation text is shown below.

Did you know that trees are sensitive and considerate creatures that communicate with each other in order to help one another grow stronger as a unity? Trees and forests are closely connected through complex root systems, also called the “Wood Wide Web”. This intricate web allows trees to send chemical, hormonal and slow pulsing electrical signals to support other trees in need and to report a possible threat.

But now we have found out even more; there is a substantial body of scientific evidence which shows that by planting a DNA sample and the ashes of a human body with the seed of a tree, the tree begins to draw characteristics from the ashes as it nourishes itself from the soil. During the first 33 days after planting, the roots begin to take in the ashes, changing the tree's way of communicating towards a more humanlike way. It takes its environment more into consideration and becomes more eager for interactions with others. This means that the tree will have the same opinions and emotions as the person who is now mixed with the soil below the tree.

Undoubtedly this is a scientific miracle, but we dare to take this research even further. What triggered our minds was that these DNA-manipulated trees were only in communication through the “Wood Wide Web” with their own species and not directly with us, humans. Therefore we started to develop a device that not only translates those underground signals into a more humanlike language but also sends messages to the roots. After years of scientific research we can now say: we have a connection to what people might call the afterlife. With the help of this device we can talk to our loved ones through trees.

This biodegradable pot has a small device implemented in it, transferring slow pulsing electrical signals in and out through the root system of the tree. This sensor allows you to send video and image messages to your deceased loved ones, to which they are able to respond by converting root signals into readable messages.

Here’s a little insight on how it works

Not only does this new technology allow you to stay connected with the deceased, it will also provide you and your family with a clean and healthy atmosphere to live in. As carbon dioxide emissions are constantly rising, it is more important than ever that we take care of our nature and atmosphere. Through oxygenic photosynthesis, carbohydrates are synthesised from carbon dioxide and water, and oxygen is produced as a byproduct. Freshly released oxygen will improve the quality of your life notably.

Due to the great post-war baby boom we believe in a good market for this product. We will launch our first ready-to-order products in Europe, Asia, Australia and North America in the beginning of 2019.

We are happy to announce that, thanks to our long and thorough research, you will never have to say goodbye to your loved ones. The person will live on through the tree.

Project 2 _ Cybernetic Prosthetics

In small groups, you will present a cluster of self-directed works as a prototype of a new relationship between a biological organism and a machine, relating to our explorations on reimagining technology in the posthuman age. The prototypes should be materialized in 3D form, and simulate interactive feedback loops that generate emergent forms.

Group project realised by Sjoerd Legue, Tom Schouw, Emiel Gilijamse and Noemi Biro

Inspiration

We started this project with a big brainstorm around the different human senses. Looking at research and recent publications, this article from The Guardian, << No hugging: are we living through a crisis of touch? >>, raised the question of touch in the current state of our society. What we found intriguing about this sense is how it is being pushed aside more and more in favour of the technological interfaces of our daily life. It is becoming a taboo to touch a stranger, but it is considered normal to walk around the streets holding our idle phone. Institutions are also putting regulations on what is considered appropriate contact between professionals and patients. For example, where the reaction to bad news used to be a hug, nowadays it is more likely to be a pat on the shoulder than such a large area of contact with each other.

Based on the above-mentioned article we started to search for more scientific research and projects around touch and technological surfaces to gain insight into how we treat our closest gadgets.

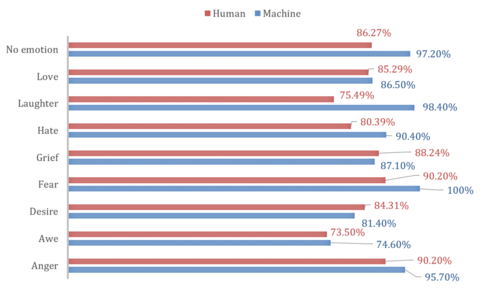

This recent article from Forbes magazine, << This AI Can Recognize Anger, Awe, Desire, Fear, Hate, Grief, Love ... By How You Touch Your Phone >>, reports on the research of Alicia Heraz from the Brain Mining Lab in Montreal, who trained an algorithm to recognize human emotional patterns from the way we touch our phones. In her official research document, << Recognition of Emotions Conveyed by Touch Through Force-Sensitive Screens >>, Alicia reaches the conclusion:

- "Emotions modulate our touches on force-sensitive screens, and humans have a natural ability to recognize other people’s emotions by watching prerecorded videos of their expressive touches. Machines can learn the same emotion recognition ability and do better than humans if they are allowed to continue learning on new data. It is possible to enable force-sensitive screens to recognize users’ emotions and share this emotional insight with users, increasing users’ emotional awareness and allowing researchers to design better technologies for well-being."

We looked at current artificial intelligence models trained on senses and we recognized the pattern that Alicia also mentioned: there is not enough focus on touch. Most of the emotional processing focuses on facial expressions through computer vision. There is an interesting distinction about how private we are about somebody touching our faces but the same body part has become a public domain through security cameras and shared pictures.

With further research into the state of current artificial intelligence on the market and in our surroundings, this quote from the documentary << More Human than Human >> captured our attention

- " We need to make it as human as possible "

Looking into the future of AI technology the documentary imagines a world where in order for human and machine to coexist they need to evolve together under the values of compassion and equality. We, humans, are receptive to our surroundings by touch thus we started to imagine how we could introduce AI into this circle to make the first step towards equality. Even though the project is about the extension of AI on an emotional level we recognized this attempt as a humanity-saving mission. Once AI is capable of autonomous thoughts and it can collect all the information from the internet our superiority as a species is being questioned and many specialists even argue that it will be overthrown. That is why it is essential to think of this new relationship in terms of equality and feed our empathetical information into the robots so they can function under the same ethical codes as we do.

Objective

From this premise, we first started to think of a first-aid kit for robots, from which they could learn about the gestures we make towards each other to express different emotions. We saw the best manifestation of this kit as an ever-growing database which, by traveling around the world, could categorize not only the emotion deduced from the touch but also a cultural background linked to geographical location.

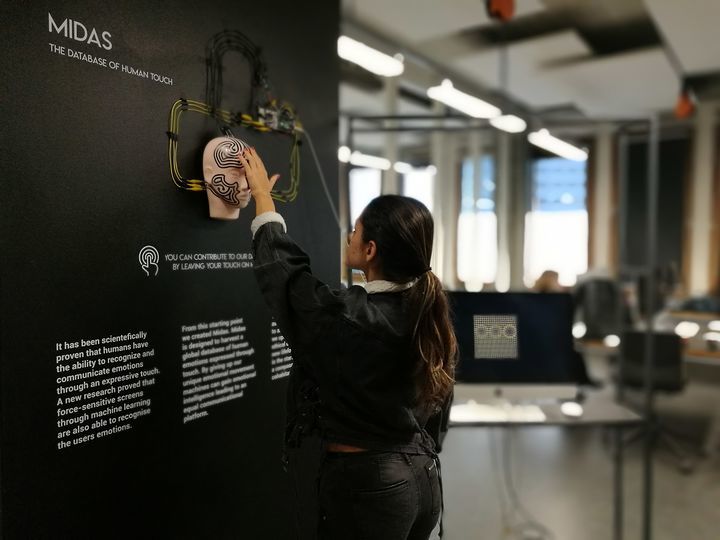

For the first prototype, our objective was to realize a working interface where we could make the process of gathering data feel natural and give real-time feedback to the contributor.

Material Research

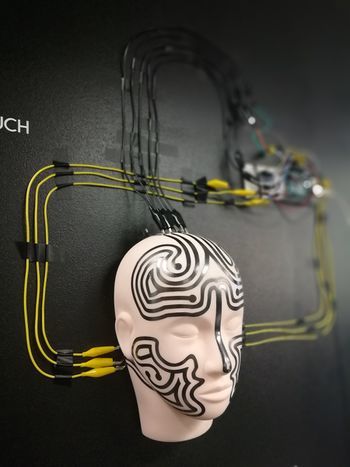

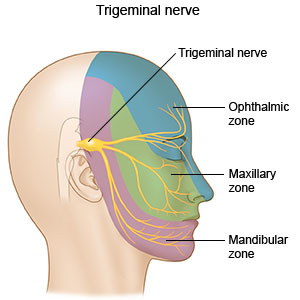

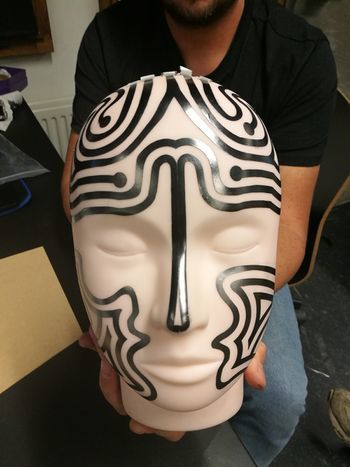

We decided to focus on the human head as a base for our data collection because, on one hand, it is an intimate surface for touch with an assumption for truthful connection, on the other hand, the nervous system of the face can be a base for the visual circuit reacting to the touch.

The first idea was to buy a mannequin head and cast it ourselves in a softer, more skin-like material with a memory-foam feel. Searching on the internet and in stores for a base for the cast was already taking so much of our time that we decided on the alternative of searching for a mannequin head in the right material. We found such a head in the makeup industry, where it is used for practicing makeup and eyelash extensions.

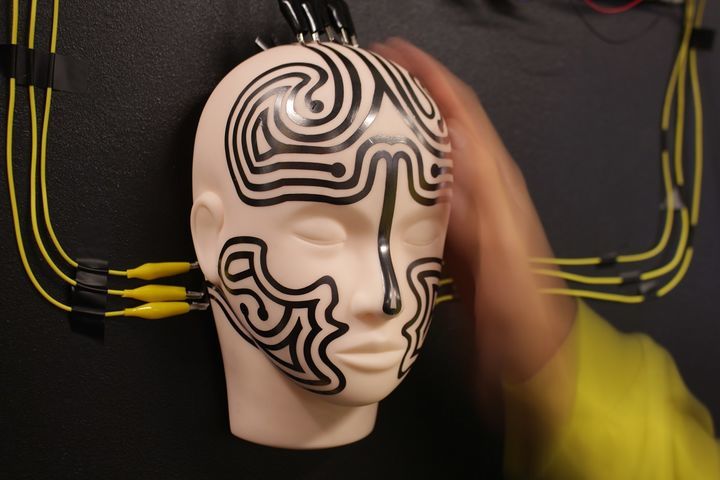

Once we had the head we could get on to experiment with the circuits to be used on the head not only as conductors of touch but also as the visual center points of the project.

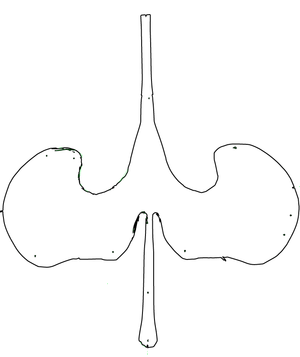

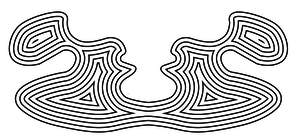

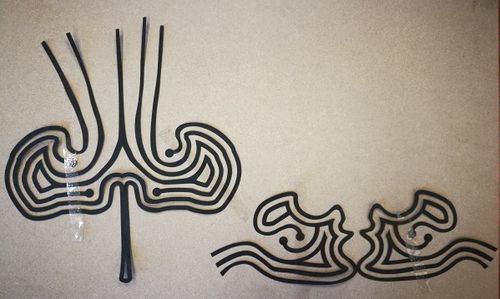

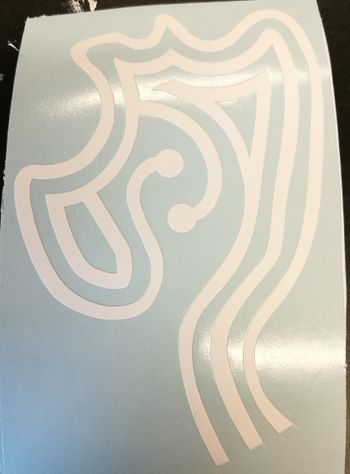

First based on the nervous system we divided the face into forehead and cheeks as different mapping sites. Then with a white paper, we looked at the curves and what the optimal shape on the face looks like folded out. From a rough outline of the shape, we worked toward a smooth outline and then we used offset to get concentric lines inside the shape.

To get to the final circuit shapes we decided on the connection points with the crocodile clips to be on the end of the head and underneath the ears. With 5 touchpoints on the forehead and 3 on each side of the face, the design followed the original concentric sketch but added open endings in form of dots to the face.

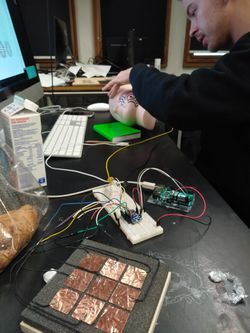

Not only the design of the circuits was challenging, but also the choice of material. For the first technical prototype, in which we used a 3x3 grid to test the capacitive sensor, we used copper tape. Although copper would have been the best material in terms of conductivity and its self-adhesive surface, the price of copper sheets big enough for our designs exceeded our budget, and using copper tape would have meant assembling the circuit from multiple parts. The alternative materials were gold ($$$$), aluminum ($) or graphite ($). Luckily Tom had two cans of graphite spray; we tried it on paper and it worked. We tested it with an LED (it blinks, by the way).

We cut the designs out of a matte white foil with a plotter and then sprayed them with the graphite spray. After reading up on how to make the graphite a more efficient conductor, we tried the tip of rubbing the surface with a cloth or cotton buds. The result was a shiny metallic surface that added even more character to the look of the mannequin head.

Technical Components

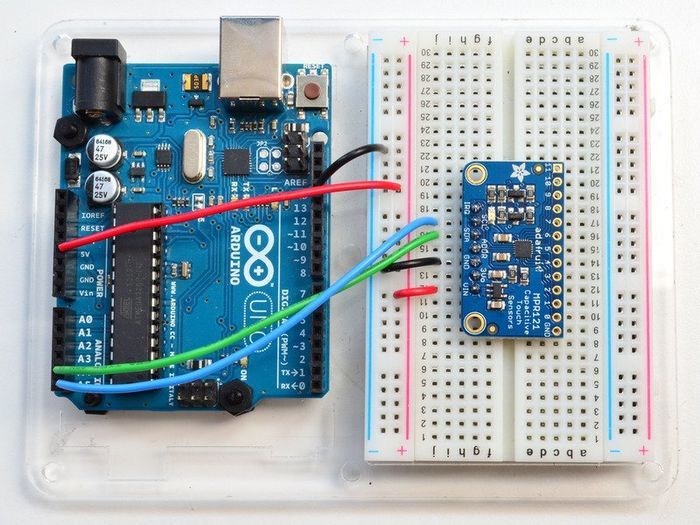

Adafruit MPR121 12-Key Capacitive Touch Sensor

Technical Aspects

For the technical side of the prototype, we used an Arduino Uno and the MPR121 breakout to measure the capacitive values of the graphite strips. The Arduino served solely as the interpreter of the sensor and as the intermediary that talks to the visualisation we made in Processing. To make this work, we rebuilt the library provided on the Adafruit GitHub (link to their Github) so that we could calibrate the baseline values ourselves and lock them. We also built in a messaging system for Processing to understand. Processing could then decode these serial messages and display them on the screen (as shown in the 'Exhibition' tab).

To give proper insight into the programs we wrote, we are publishing our Arduino Capacitive Touch Interpreter and the auto-connecting Processing script that visualises the values the MPR121 sensor provides.
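The baseline locking and serial messaging described above can be sketched roughly as follows. This is a minimal Python illustration, not the Arduino or Processing code from the project files: the function names, the `CTS:` message prefix and the threshold of 12 counts are assumptions made for this example. It relies only on the MPR121 behaviour that a touch makes the filtered reading drop below the baseline.

```python
from statistics import mean

NUM_ELECTRODES = 12  # the MPR121 has 12 electrode channels


def lock_baseline(untouched_samples):
    """Average several untouched readings per electrode into one fixed baseline."""
    return [round(mean(channel)) for channel in zip(*untouched_samples)]


def detect_touches(reading, baseline, threshold=12):
    """Flag electrodes whose reading dropped more than `threshold` below baseline.

    The threshold value is an assumption for this sketch.
    """
    return [base - value > threshold for value, base in zip(reading, baseline)]


def encode_message(reading, baseline):
    """Sender side: pack per-electrode drops into one line (hypothetical 'CTS:' format)."""
    deltas = [base - value for value, base in zip(reading, baseline)]
    return "CTS:" + ",".join(str(d) for d in deltas) + "\n"


def decode_message(line):
    """Receiver side: turn one serial line back into a list of integers."""
    prefix, _, payload = line.strip().partition(":")
    if prefix != "CTS":
        raise ValueError(f"unexpected message: {line!r}")
    return [int(v) for v in payload.split(",")]


if __name__ == "__main__":
    samples = [[200] * NUM_ELECTRODES, [202] * NUM_ELECTRODES]
    baseline = lock_baseline(samples)
    reading = list(baseline)
    reading[3] -= 40  # simulate a finger on electrode 3
    print(detect_touches(reading, baseline))
    print(decode_message(encode_message(reading, baseline)))
```

Locking the baseline once, instead of letting the sensor keep adapting it, means that a hand resting on the graphite for a long time keeps registering as a touch.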

Files

Media:Rippleecho_for_cts.zip (file size: 485 KB)

Media:Capacitive-touch-skin.ino.zip (file size: 3 KB)

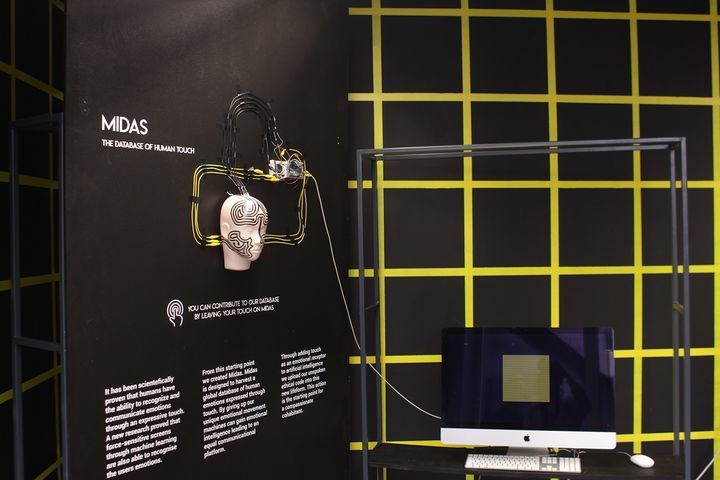

Exhibition

It has been scientifically shown that humans can recognize and communicate emotions through expressive touch. Recent research has shown that force-sensitive screens, with the help of machine learning, can also recognize users' emotions.

From this starting point, we created Midas.

Midas is designed to harvest a global database of human emotions expressed through touch. By giving up our unique emotional movements, we let machines gain emotional intelligence, leading to an equal platform for communication.

By adding touch as an emotional receptor to Artificial Intelligence, we upload our unspoken ethical code into this new lifeform. This action is the starting point for a compassionate cohabitant.

Project 3_ Wind Wacom

Introduction

From Devices to systems: sensors and sensitivity training. You will open the 'black box' of a technical device in an anatomical machine learning lesson, and you will also dissect and analyse a concrete instance of a complex system at work. How do devices and networked systems interact? Document and research all of the parts, how they work, where they come from. Redesign and add new circuits. Put it back together with a new function and added sensor feedback loops. The basic electronics should be fully functional.

For this individual project we needed to open a so-called 'Black Box'. In this case the term refers to an electrical device that does not show how it actually functions: the components that make the device work are concealed in a housing of some sort. Taking the device apart is necessary to discover how the machine functions and to get a better understanding of the techniques involved and of how the components are made and assembled. An extra feature of this assignment was to reassemble the device in such a way that it would get a different input, output or feedback.

I chose an old Wacom drawing pad for this assignment. I have never used it properly (I prefer using a mouse), and the pen, the most valuable part of the set, broke a while ago. Although I never really used the device, I was always intrigued by the way it works. When the pen is moved above the centre square of the bed of the Wacom, the cursor follows the movement you make. This lets you draw digitally, select, or do anything else that could be done with a conventional mouse. I never really knew how these two devices worked and saw potential in using them for new applications.

Research

I started by taking the device apart and documenting the different components to get a better understanding of it.

Basically, the device consists of two different devices: the pad, which can be connected to a laptop or computer using a USB cable, and the pen used to navigate on the pad. I will briefly comment on my findings for these two separate devices in their 'naked' form, meaning without the housing, only the electronic working parts.

Pad

This PCB (Printed Circuit Board) defines the surface you can use to navigate on your computer screen. The downloadable software driver lets you map that surface of the PCB to your computer screen. You can set the pad to cover your whole screen or only part of it. If you take a closer look at the board itself, you can make out vertical and horizontal lines in the centre of the device. These lines function as x- and y-axes, with the x-axis on one side of the board and the y-axis on the other. Together they produce an electromagnetic field that is constantly switched on while the pad is connected to the computer. I found this information online by searching for 'how does a wacom work' (https://www.quora.com/Where-does-the-power-of-the-Wacom-pen-come-from). But exactly how it works was not yet clear to me, so I asked a former 'Marconist' (wireless operator) to shed his light on the device. He quickly noticed the 'Crystal Oscillator' (a tuned electronic circuit used to generate a continuous output waveform) and the 6.000 MHz printed right above it. From this he concluded that the PCB functions as a transmitter, transmitting at a frequency of 6.000 MHz and producing a magnetic field around the PCB. When taking the device apart, I also found a thin steel plate that shields the bottom of the pad so that other magnetic sources do not influence the device. As a result, the magnetic field can only exist on the top side of the PCB.
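The driver setting mentioned above, which maps the active pad surface onto all or part of the screen, amounts to a linear rescaling. Below is a minimal Python sketch of that idea, assuming hypothetical pad and screen dimensions; the function name is made up for this example and the real driver's units and internals are not known here.

```python
def pad_to_screen(pad_x, pad_y, pad_size, screen_region):
    """Linearly map a pen position on the pad to a point in a screen region.

    pad_size:      (width, height) of the active pad area, in any unit
    screen_region: (left, top, width, height) in pixels
    """
    pad_w, pad_h = pad_size
    left, top, width, height = screen_region
    # Normalise to 0..1 and clamp, so positions outside the active area
    # end up on the nearest edge of the screen region.
    nx = min(max(pad_x / pad_w, 0.0), 1.0)
    ny = min(max(pad_y / pad_h, 0.0), 1.0)
    return (left + nx * width, top + ny * height)


if __name__ == "__main__":
    pad = (150, 95)  # hypothetical active area in millimetres
    print(pad_to_screen(75, 47.5, pad, (0, 0, 1920, 1080)))   # pad covers the whole screen
    print(pad_to_screen(75, 47.5, pad, (960, 0, 960, 1080)))  # pad covers the right half only
```

With the second region, the same pen position lands in the middle of the right half of the screen, which is what "partially" covering the screen means in practice.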

Pen

The pen consists of a plastic tip connected to a pressure-sensitive switch for clicking, which functions as a left-click in the original setup. The two buttons above the tip are used as right-click and scroll. Just above the plastic tip you can see a coil of copper wire. This coil amplifies the energy provided by the pad. When the coil gets close enough to the pad, it enters the magnetic field and basically short-circuits the x- and y-axes on the pad. In this way the pad knows the position of the pen and sends it to the computer, which allows you to navigate on your screen. The pad can also receive information such as right-click, left-click and scrolling through the magnetic field.

Having learned how the device worked, I tried to interfere with the magnetic field. I knew it was transmitting at a frequency of 6.000 MHz, so I wanted to provoke some kind of reaction between the pad and another device. I tried putting a high-frequency device (an electronic mosquito catcher) close to the pad, but got no result. When I talked to the wireless operator again, he told me that a frequency of 6.000 MHz is high and difficult to play around with. After he gave me some interesting sites that tell you everything about frequencies, I knew I had to think of something else if I wanted to finish this one-week project. The world of radio frequencies is new to me and therefore extremely complex, and I knew I could not unravel the basics within the week. I started to focus not on what I could not achieve but on what I could.

If I could not change the device itself, I could definitely change the way it is used. The device is designed for a human being to control, in this case to control his or her movements on a computer. It has a pen, which is generally known as a tool for writing text and creating drawings of all kinds. Almost every human being knows how to use it: you hold it in your hand and move it to create shapes or words. The pen is an extension of the finger, used to communicate through shape and text.

Using this idea, I wanted to change the Wacom system so that it could be used by an entity other than a human being. How would the Wacom look if I wanted it to be controlled by something other than human hands? I began to research other entities that could control this device. One thing that came to mind was the antique marine navigation instruments used before satellite navigation. They are mysterious machines that appear impossible to understand for anyone not working in the nautical field: devices designed to use the forces and/or phenomena of nature to navigate at sea. I wanted the Wacom to be controlled by the uncontrollable, the wind.

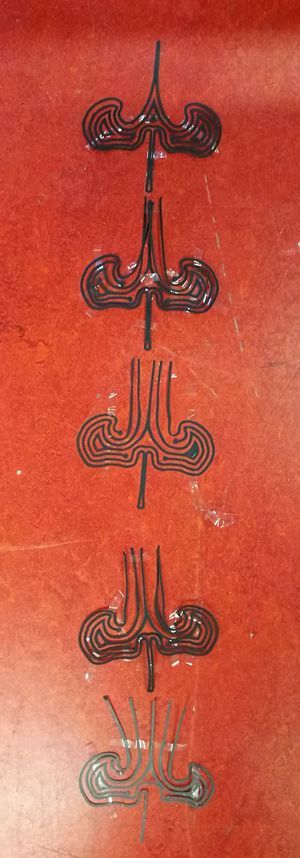

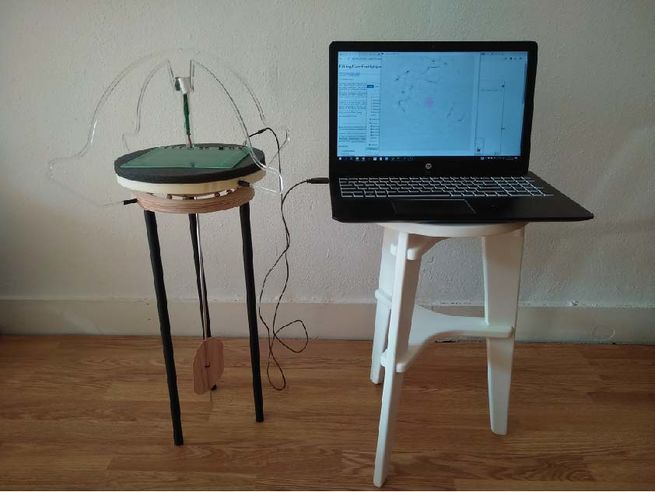

With these instruments as an inspiration for my device, I started to construct my Wacom pad with a hands-on approach. I felt that, given the time available for realising the project, I needed to physically build my device step by step. The first sketches can be seen below.

Realisation

Physical

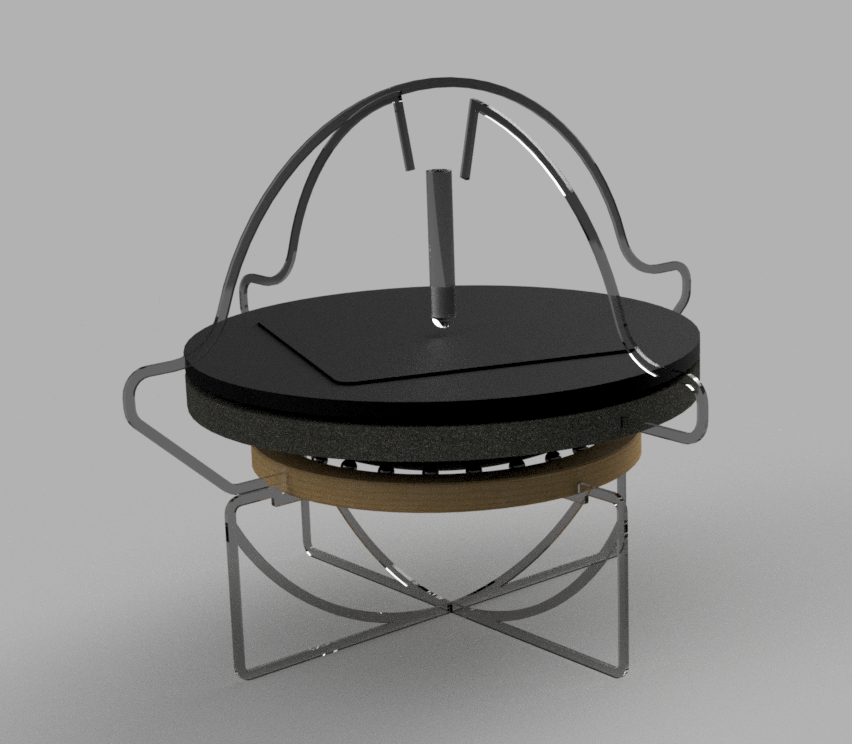

After a quick and dirty plan on paper, I started to build my model based on a 3D model I had made to figure out the best way to move the Wacom using the wind while making it visually attractive. The look needed to refer to the nautical instruments: the device has to appear to be a complicated instrument that does not tell you exactly how it works. This encourages viewers to explore what they see and to figure out for themselves what they are looking at and how it works.

The finished product shows an instrument that uses the wind, or a breeze, to control the Wacom. The picture on your left-hand side shows the different components and their function.

1: these aluminium contact points control the left and right click of the pen. The points are connected to part 5, which can be moved by moving part 7. The wind can move part 7 back and forth and, by doing so, click left or right.

2: the pen itself, placed in a test tube to show the separate components. The pen hangs on threads so it can also be moved by the air. The top part of the test tube is fitted with two sets of wires attached to the switch on the pen. When one of the two sets hits the aluminium contact points, the circuit is closed and the wind can click.

3: the Wacom pad

4: this foam part floats on part 5. This way the Wacom pad is able to move in all directions. The wind can control the x- and y-axis by moving this foam part around.

5: the yellow model foam part has a bowl shape at the bottom and a bucket shape at the top. The bottom can move on the bearing balls of part 6, and the top contains water so that part 4 can float and move.

6: the wooden ring has a groove where steel bearing balls are placed. Moving part 7 makes part 5 move, and with it the contact points near the pen.

7: this wind catcher can move in different ways, but is mostly focused on moving back and forth in order to control the right and left click at the top.

The materials I chose were of great importance for the proper functioning of the device. I used as many lightweight materials as possible to increase the movements of the instrument caused by the wind. I wanted to keep the separate parts as transparent as possible, so I chose transparent acrylic to keep an open structure. The top part, on which the Wacom pad was placed, needed to float, so I added styrene floaters. These turned out to be too heavy, so I switched to a plastic petri dish placed upside down to make the pad float.

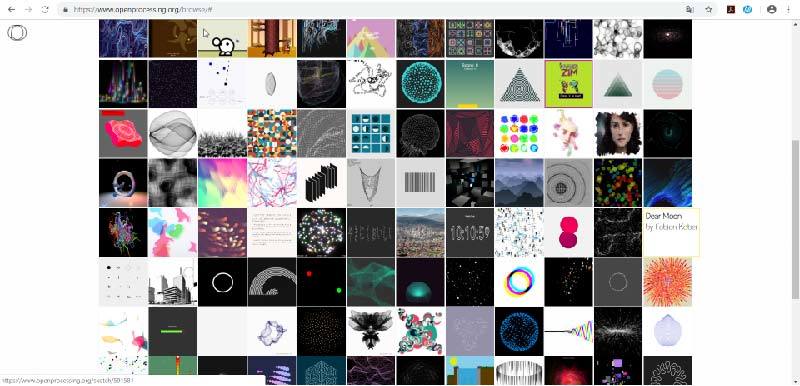

Digital

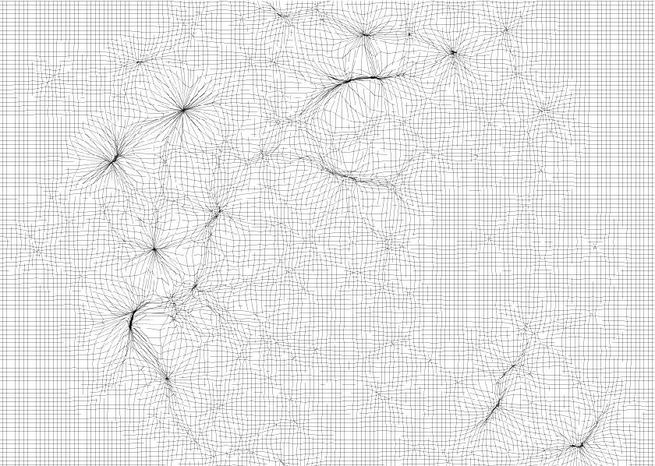

Now that I had created a device for the wind to control, I needed some sort of output: a way to document the movements of the wind and translate them into a different form. The previous project I had worked on, the MIDAS project, also combined a device as input with a digital, visual output. Although my focus was more on the device itself, especially considering the time available, I knew an output was necessary to bring the project to a higher level. I looked on the site OpenProcessing for ready-made code that could give a suitable visual expression to my co-illustrator using my device.

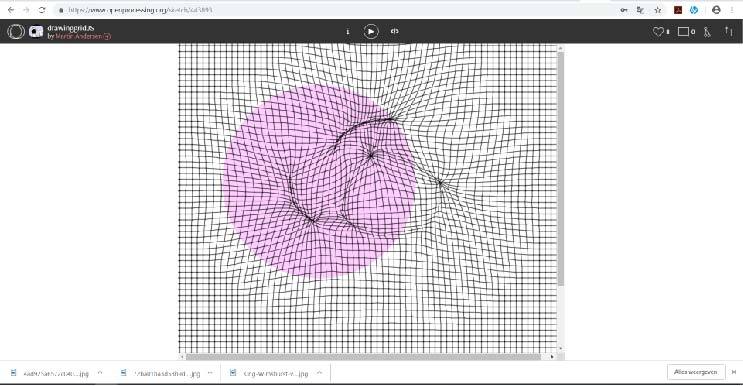

I started to make a selection for my project, focusing on code that could communicate the fact that the wind controlled an x- and y-axis. A visual presentation with a grid was therefore desirable. I found drawinggridJS, made by Martin Anderson, an amazing piece of code that lets you create a drawing by narrowing the space between the lines in the grid when you click right. You can increase the space by clicking left.

In order to get an interesting result, I had to change the code myself. I changed the grid, the size of the cursor and the option to run the programme full screen. My adjusted code can be found below.

Media:DrawinggridJSWACOM.zip (file size: 485 KB)
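The principle of tightening and widening grid lines around the cursor can be illustrated with a small Python sketch. This is not the drawinggridJS code: the function name, the `strength` parameter and the pull/push rule are assumptions chosen only to show the idea on a single axis.

```python
def warp_grid(lines, cursor, strength=0.2, pull=True):
    """Move grid line positions toward (pull) or away from (push) the cursor.

    lines:    positions of the grid lines along one axis
    cursor:   cursor position along the same axis
    strength: fraction by which the distance to the cursor changes per click
    """
    factor = 1 - strength if pull else 1 + strength
    return [cursor + (position - cursor) * factor for position in lines]


if __name__ == "__main__":
    vertical_lines = [0, 10, 20, 30, 40]
    # A right click narrows the spacing around the cursor...
    print(warp_grid(vertical_lines, cursor=20, pull=True))
    # ...and a left click widens it again.
    print(warp_grid(vertical_lines, cursor=20, pull=False))
```

Applying the same function once to the vertical lines (using the cursor's x position) and once to the horizontal lines (using its y position) would deform the whole grid around the point where the wind has moved the pen.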

Result

Reflection

Looking back at this one-week project, I feel there is more to explore in this topic. Using the wind to create shapes caught my interest but needs further research and development. The concept is not strong enough yet; it needs more depth. It raises questions such as: what is wind, what does wind mean to us, and if the wind could speak, what would it tell us? Long story short: why use the wind, and what is the best way to use it? Besides the research, I want to improve my device on several levels. I want it to be more sensitive and to move more easily and more accurately; in other words, I want to improve the technical aspects of the device. Another aspect is where the device will be placed, as well as its size. Will it be a public art piece or a desktop model for in-house use?

Digital Craft Essay My Craft

Visual Mapping

Media:Owning_the_Weather_by_2025_Visual_mapping.pdf

Timeline Minor Projects

Media:Capacitive-touch-skin.ino.zip (file size: 3 KB)

Final Research Document

Media:Research_Document_Minor(english)(1-27).pdf Media:Research_Document_Minor(english)(28-55).pdf